By WREMF Team · 2026-05-09 · 58 min read

Last reviewed: 2026-05-09 by Rohan Singh

Learn how large language model optimization services improve AI visibility, citations, and retrieval across ChatGPT, Gemini, Perplexity, and more.

Key Takeaways

- Large language model optimization services include both technical AI performance and brand visibility optimization, so teams must define which problem they need to solve before choosing a provider.

- SEO, AEO, GEO, and LLMO work together, but LLMO expands the goal from ranking pages to being retrieved, cited, summarized, and recommended by AI systems.

- Content-centric LLMO works when content adds unique value, reinforces entities, answers real buyer prompts, and gives AI systems credible sources to retrieve.

- Technical SEO is the access layer of LLM optimization because AI systems need crawlable, renderable, structured, and internally linked content before they can cite or recommend it.

- RAG thinking helps marketers understand that AI visibility depends on the quality, consistency, and retrievability of the source ecosystem.

- LLM visibility is measured by tracking prompts, citations, brand mentions, recommendations, competitors, source consistency, and AI traffic attribution across multiple platforms.

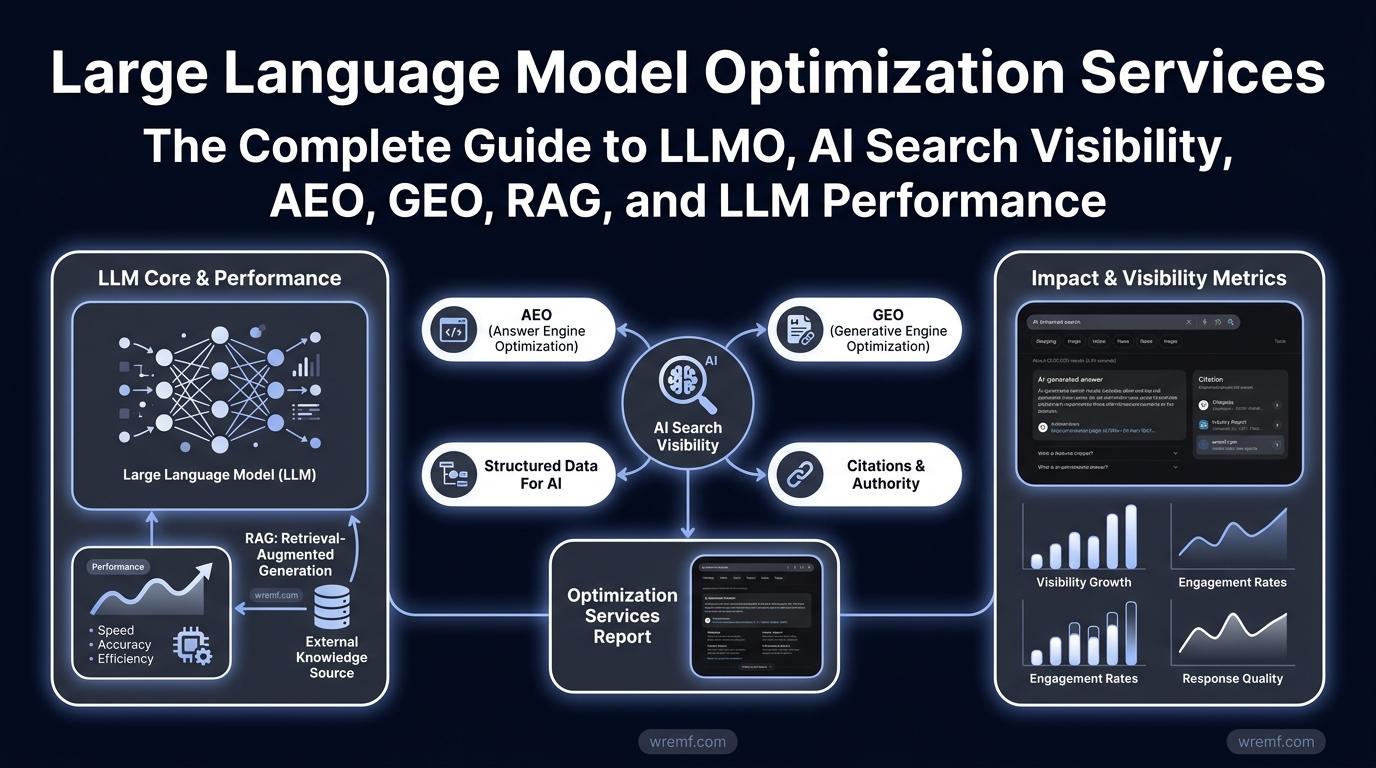

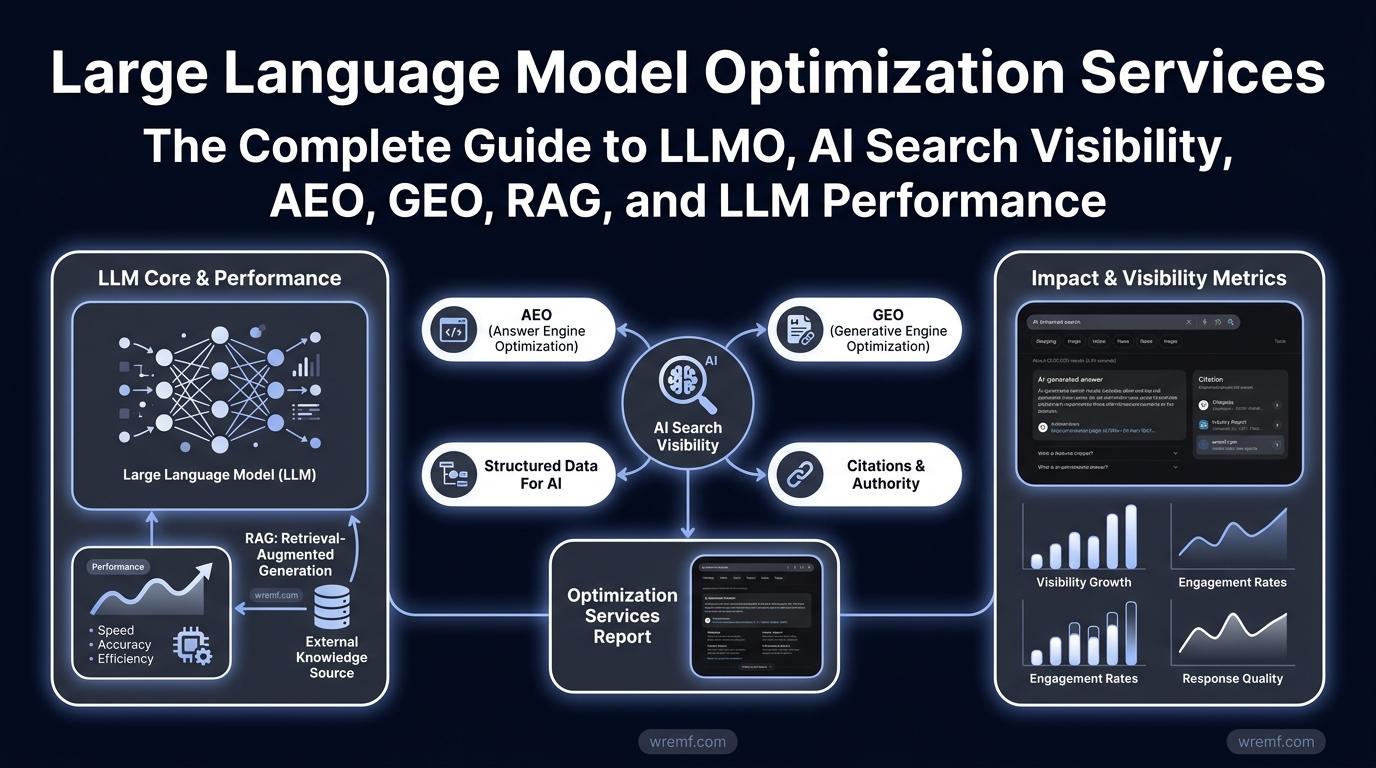

Large Language Model Optimization Services: The Complete Guide to LLMO, AI Search Visibility, AEO, GEO, RAG, and LLM Performance

Large language model optimization services improve how AI systems understand, retrieve, cite, recommend, and generate information about a brand. Gartner predicted that traditional search engine volume would drop 25% by 2026 as AI chatbots and virtual agents change search behavior. (Gartner) This guide explains technical LLM optimization, AI Search visibility, Answer Engine Optimization, Generative Engine Optimization, Retrieval-Augmented Generation, LLMOps, structured data, content strategy, citations, and reporting. It also shows how WREMF helps B2B teams track, improve, and prove visibility across ChatGPT, Claude, Gemini, Perplexity, Google AI Overviews, Copilot, DeepSeek, Grok, Meta AI, and Mistral. Use this guide to understand what LLMO services should include before choosing software, an agency, or a hybrid execution partner.

What Are Large Language Model Optimization Services?

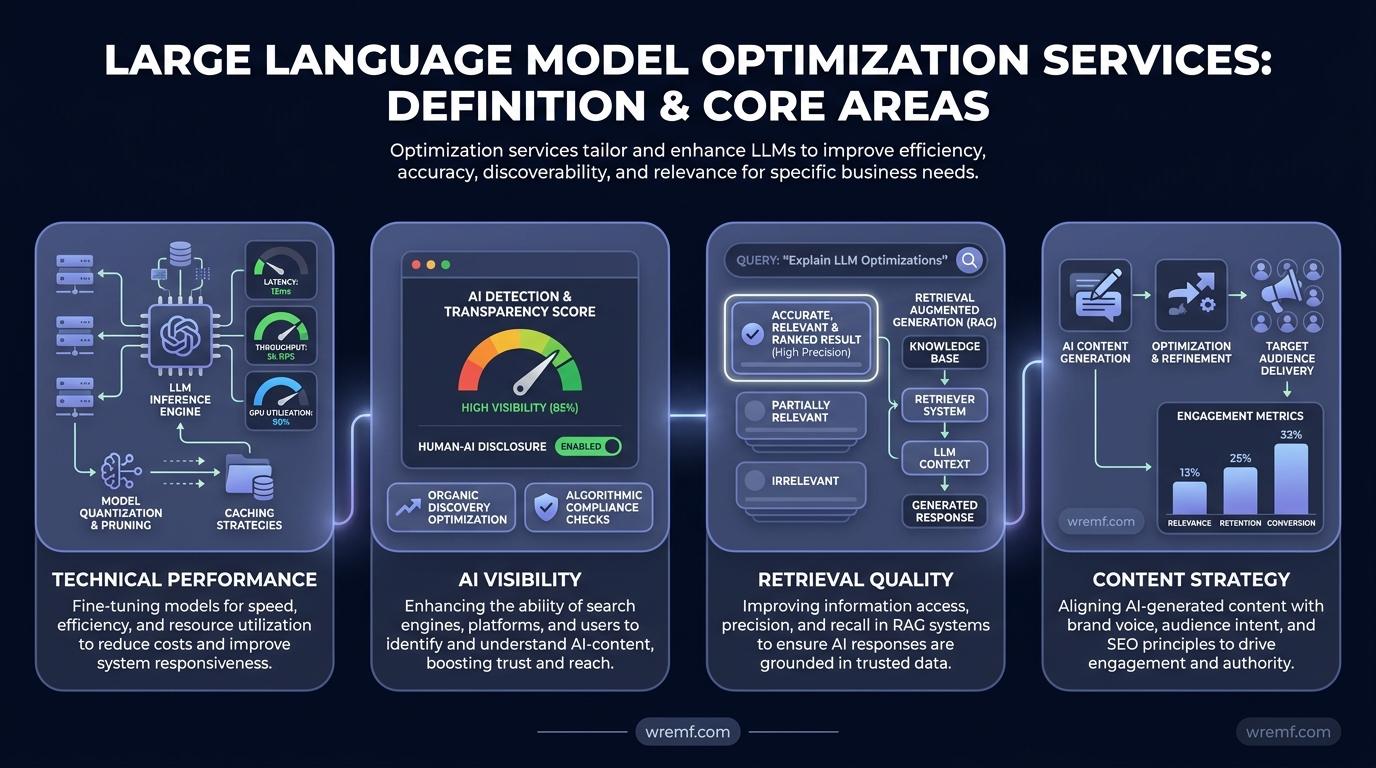

Large language model optimization services improve how large language models perform, retrieve information, and represent a brand in AI-generated answers. These services can focus on technical performance, AI visibility, retrieval quality, content strategy, or all four.

Large Language Model Optimization is the process of improving how language models generate, retrieve, evaluate, cite, and present information. It matters because large language models increasingly shape how buyers research problems, compare vendors, and decide which brands deserve attention.

The definition is tricky because LLM optimization has two meanings. In machine learning, LLM optimization can mean model fine-tuning, LLM inference, inference efficiency, distributed training, request batching, KV caching, speculative inference, monitoring and evaluation, and large language model operations. In marketing, LLM optimization means improving LLM visibility, AI citations, brand mentions, source citations, source consistency, AI share of voice, and AI Discovery across AI-powered Search platforms.

For B2B teams, the practical question is not only whether a model can run faster or cheaper. The practical question is whether ChatGPT, Claude, Gemini, Perplexity, Google AI Overviews, Copilot, DeepSeek, Grok, Meta AI, Mistral, and other AI systems can correctly understand, retrieve, cite, and recommend your brand when buyers ask relevant questions.

WREMF treats large language model optimization services as a measurable workflow. The WREMF platform suite connects prompt tracking, source citations, competitor visibility, content briefs, GEO audits, AI traffic attribution, source consistency analysis, and reporting into one system for B2B teams.

| LLMO category | What it improves | Typical owner | Example metric |
|---|---|---|---|
| Technical LLM optimization | Model cost, latency, throughput, deployment reliability | CTO, AI engineer, ML team | Cost per response, latency, throughput |
| Retrieval optimization | Quality of sources used by AI systems | AI, data, search, product, support teams | Retrieval accuracy, failed retrieval rate |
| Content-centric LLMO | Brand visibility in AI answers | SEO, content, growth, marketing teams | AI citations, brand mentions, AI share of voice |
| Reporting optimization | Business proof and stakeholder confidence | Marketing, RevOps, agencies, leadership | AI traffic, visibility score, competitor gap |

Language Models are AI systems that process and generate natural language. Language Models matter because they now influence search behavior, content discovery, customer support, product research, and business decision-making.

AI systems are software systems that perform tasks using artificial intelligence techniques such as machine learning, natural language understanding, retrieval, and generation. AI systems matter because B2B buyers increasingly use them as research assistants before speaking to sales teams.

DID YOU KNOW: Google Search Central explains that AI features such as AI Overviews and AI Mode are part of Google Search, and site owners should focus on accessible, useful, people-first content rather than looking for a separate shortcut to inclusion. (Google Search Central) (Google for Developers)

KEY TAKEAWAY: Large language model optimization services now include both technical AI performance and brand visibility, so teams must define which problem they need to solve before choosing a provider.

The next step is understanding why LLMO is the natural extension of SEO, AEO, and GEO.

Why LLMO Is the Next Step After SEO, AEO, and GEO

LLMO is the next step after SEO because users are moving from ranked links to AI-generated answers. SEO helps pages rank, while LLM optimization helps brands get understood, cited, and recommended by large language models.

SEO is the practice of improving visibility in search engines. SEO matters because search engines still influence discovery, trust, traffic, and demand generation.

Answer Engine Optimization is the practice of structuring content so answer engines can extract clear, direct responses. Answer Engine Optimization matters because AI Overviews, featured snippets, voice assistants, and AI assistants reward concise, source-backed answers.

Generative Engine Optimization is the practice of improving how Generative AI systems cite, summarize, and recommend a brand. Generative Engine Optimization matters because AI-powered results often synthesize several sources instead of sending users to one ranked page.

AI Search is discovery through AI assistants, AI Overviews, conversational search, and AI-powered Search platforms. AI Search matters because buyers increasingly ask natural language questions instead of scanning a search engine results page.

The key difference between SEO and GEO is the output. SEO usually targets rankings, impressions, clicks, Core Web Vitals, technical SEO health, and organic conversions. GEO targets citations, brand placements, AI Overviews inclusion, source citations, source consistency, AI share of voice, and recommendation presence.

In real B2B buying journeys, a prospect may ask ChatGPT for a shortlist, use Perplexity to compare vendors, check Gemini responses for definitions, and then validate the final decision through Google Search. That journey means your content strategy must serve search engines, answer engines, and major LLMs together.

| Discipline | Main goal | What it measures | What it misses | Best use case |
|---|---|---|---|---|
| SEO | Rank in search engines | Rankings, clicks, impressions, Core Web Vitals | AI citations and AI answer inclusion | Organic traffic growth |
| AEO | Get extracted as an answer | Answer clarity, FAQ quality, structured content | Wider source ecosystem issues | Featured answers and AI snippets |
| GEO | Get cited in generative answers | Citations, mentions, AI Overviews, recommendations | Technical model cost and latency | AI Search visibility |
| LLMO | Improve model performance and brand visibility | Inference efficiency, prompt visibility, citations, retrieval quality | Traditional rankings alone | Full AI visibility and AI performance strategy |

Google says AI Overviews provide AI-generated snapshots with key information and links to dig deeper. OpenAI explains that ChatGPT Search responses may include inline citations and source panels when web search is used. These features show why source visibility is now part of search visibility, not a separate side issue. (Google AI Overviews, OpenAI ChatGPT Search) (Google Help)

KEY TAKEAWAY: SEO, AEO, GEO, and LLMO work together, but LLMO expands the goal from ranking pages to being retrieved, cited, summarized, and recommended by AI systems.

That distinction matters because LLM optimization has two major tracks: engineering performance and brand visibility.

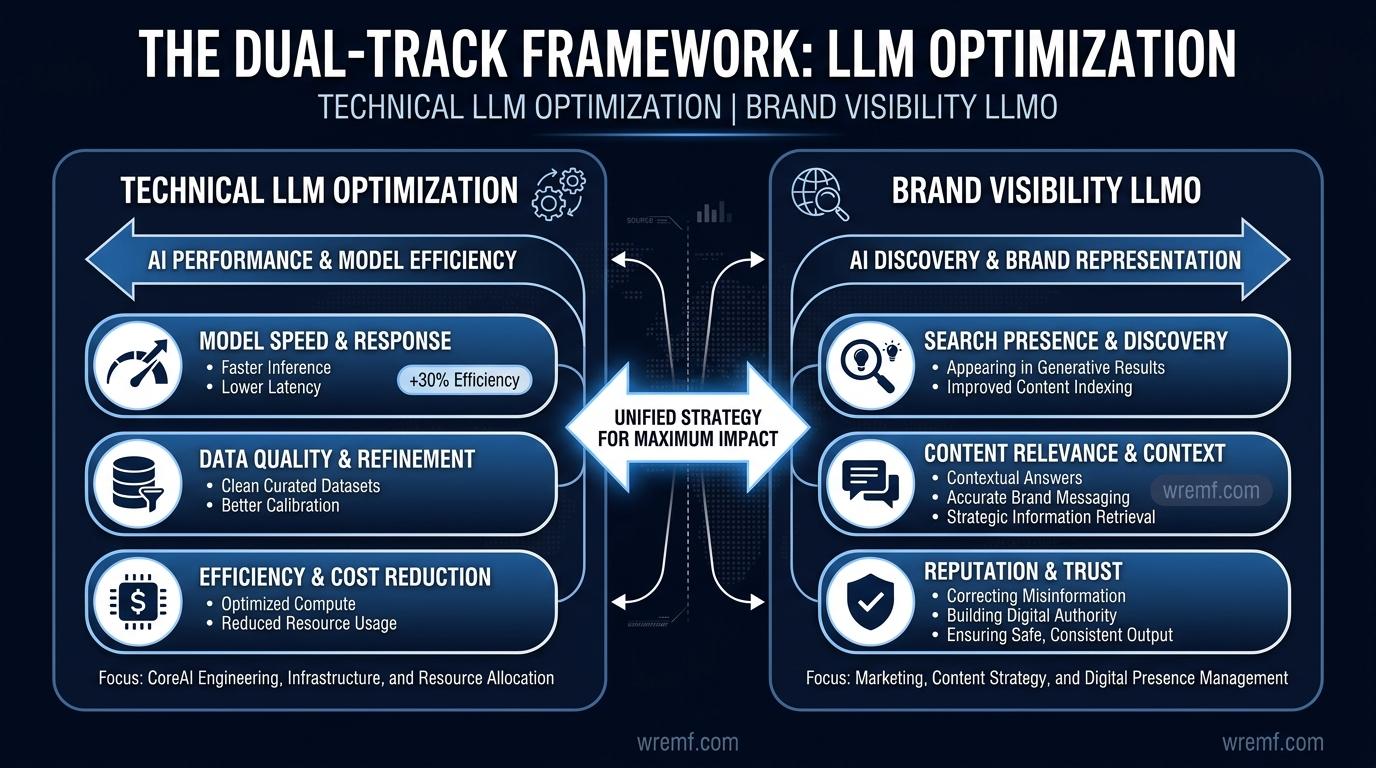

The Dual-Track Framework: Technical LLM Optimization vs Brand Visibility LLMO

LLM optimization has two tracks: technical optimization for AI models and visibility optimization for brands. The right service depends on whether you need better AI performance, better AI discovery, or both.

Technical LLM optimization improves how AI models run. This includes model fine-tuning, quantization, distillation, pruning, request batching, distributed training, model deployment, KV caching, speculative inference, LLM inference optimization, security and compliance, data management, and monitoring and evaluation.

Brand-focused LLMO improves how AI systems describe, cite, and recommend a company. This includes content strategy, structured data, knowledge graph clarity, AI Overviews visibility, prompt tracking, source citations, digital PR, brand exposure, brand placements, reputation management, source consistency, and AI traffic attribution.

Model fine-tuning is the process of adapting a foundation model to a specific task, domain, language, tone, or dataset. Model fine-tuning matters when a company needs AI models to follow domain-specific rules, customer service policies, product terminology, or enterprise AI solutions.

LLM inference is the process of generating responses from an already trained model. LLM inference matters because latency, compute cost, response quality, and infrastructure capacity determine whether AI systems can scale.

Inference efficiency is a measure of how much compute, latency, and resource usage each generated response requires. Inference efficiency matters because high-volume conversational AI, customer support, eCommerce store assistants, and enterprise AI solutions can become expensive if each response requires too much compute.

AI visibility is the measurable presence of a brand inside AI-generated answers, citations, summaries, comparisons, and recommendations. AI visibility matters because buyers may trust an AI answer before visiting your website, reading your product descriptions, or contacting sales.

| Need | Best-fit service | Techniques | Main metric |
|---|---|---|---|
| Lower AI application cost | Technical LLM optimization | Quantization, pruning, batching, KV caching | Cost per response |
| Faster AI responses | Inference optimization | Decode-phase optimization, speculative inference, request batching | Latency and throughput |
| Better internal answers | RAG optimization | Vector embeddings, Knowledge Graph Integration, reranking | Retrieval accuracy |
| Better AI brand visibility | Content-centric LLMO | AEO, GEO, structured data, source consistency | AI citations and mentions |
| Better executive reporting | AI visibility platform | Prompt tracking, attribution, competitor monitoring | Visibility score and AI share of voice |

NVIDIA explains that KV cache optimization can reduce expensive recomputation during LLM inference, which is a technical performance issue rather than a marketing visibility issue. This distinction helps teams avoid buying the wrong kind of LLM optimization service. (NVIDIA AI)
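To make the recomputation point concrete, here is a toy count rather than a real inference engine. It assumes one key/value projection per token per decoding step and ignores layers, attention heads, and batching. The function names are illustrative and do not come from any library.

```python
def kv_projections_without_cache(num_tokens: int) -> int:
    """Without a cache, step t recomputes keys/values for all t tokens seen so far."""
    return sum(range(1, num_tokens + 1))


def kv_projections_with_cache(num_tokens: int) -> int:
    """With a cache, each step computes keys/values only for the newest token."""
    return num_tokens


# Toy comparison for a 1,000-token generation (simplified model, see assumptions above).
tokens = 1000
print("without cache:", kv_projections_without_cache(tokens))
print("with cache:   ", kv_projections_with_cache(tokens))
```

Under these simplifying assumptions the uncached count grows roughly with the square of the sequence length while the cached count grows linearly, which is why caching is an engineering concern rather than a content concern.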

IMPORTANT: A common buying mistake is hiring a technical LLM consultant when the real problem is AI Search visibility, or hiring SEO services when the real problem is model deployment and infrastructure cost.

KEY TAKEAWAY: Large language model optimization services should be evaluated by track: engineering performance, retrieval quality, brand visibility, or reporting.

Once the track is clear, you need to understand how large language models actually use content, sources, and retrieval.

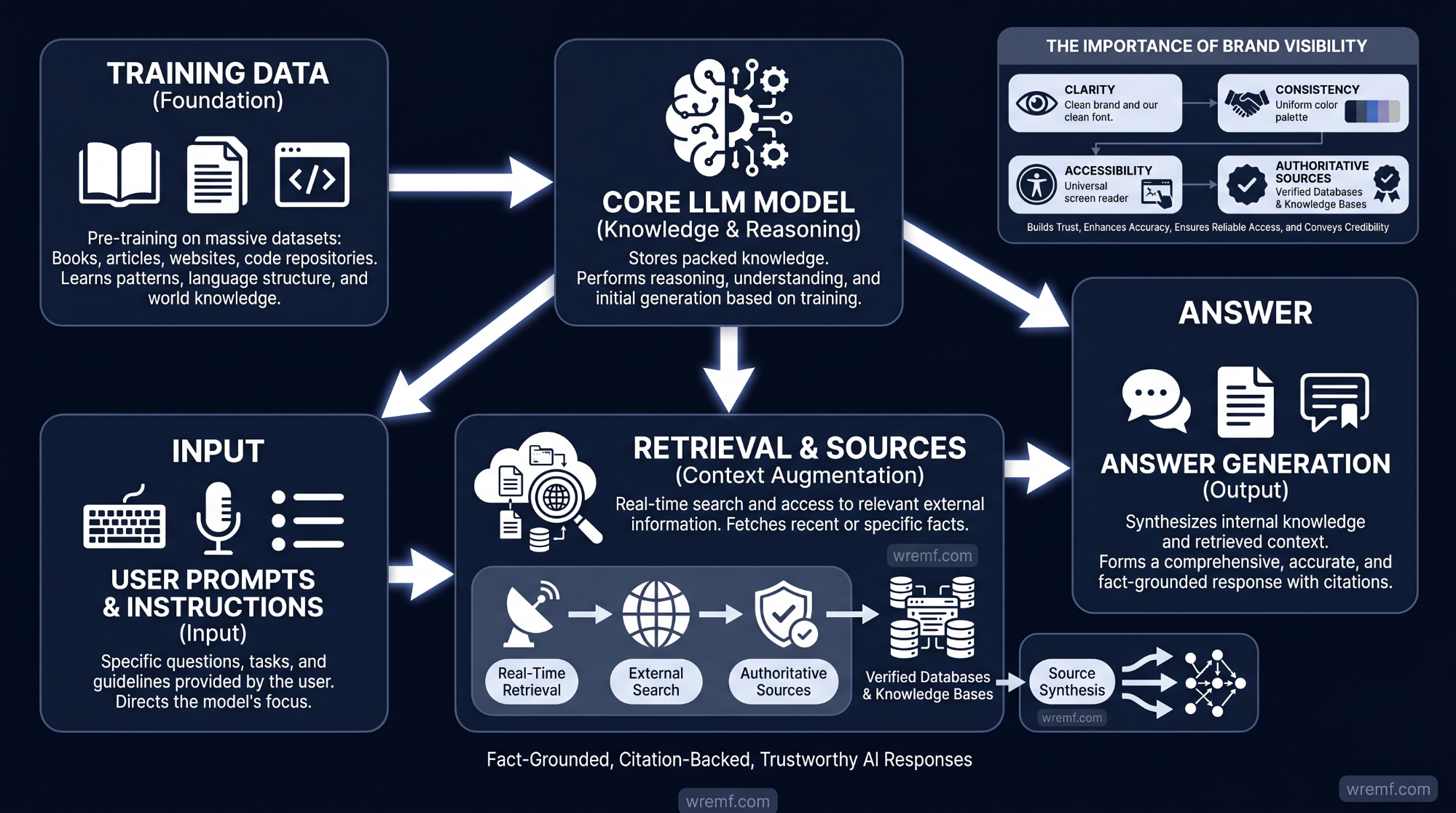

How Large Language Models Use Training Data, Retrieval, and Sources

Large language models generate answers from training data, user prompts, instructions, retrieved sources, and system design. LLM visibility improves when your brand is clear, consistent, accessible, and supported by authoritative sources.

Training data is the information used to train AI models. Training data matters because it shapes the language patterns, facts, categories, associations, and reasoning behavior that large language models learn before they retrieve newer information.

Training data can include text, code, documents, structured datasets, public web content, licensed data, human feedback, and task-specific examples. Training data quality affects how AI models handle natural language understanding, customer service questions, product descriptions, technical topics, and business applications. Poor training data can produce weak outputs, biased associations, hallucinations, or repetitive responses.

A foundation model is a large AI model trained on broad training data and adapted for many downstream tasks. A foundation model matters because model fine-tuning, prompt engineering, RAG, and product-specific AI workflows often build on top of a foundation model rather than starting from scratch.

Retrieval-Augmented Generation is a method where an AI system retrieves relevant external information before generating an answer. Retrieval-Augmented Generation matters because it helps AI systems use fresher, more specific, and more verifiable sources than model training data alone.

Vector embeddings are numerical representations of meaning. Vector embeddings matter because AI systems use them to match prompts with relevant pages, documents, topics, product descriptions, support articles, and knowledge base entries.
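As a rough illustration of how embedding-based matching works, the sketch below ranks a few pages against a prompt using cosine similarity. The three-dimensional vectors and page names are made up for readability; real embedding models produce vectors with hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical embeddings, invented for this example.
PAGES = {
    "pricing page": [0.9, 0.1, 0.0],
    "support article": [0.1, 0.8, 0.3],
    "comparison page": [0.7, 0.2, 0.4],
}


def rank_pages(prompt_vector: list[float], pages: dict[str, list[float]]) -> list[str]:
    """Return page names ordered from most to least similar to the prompt."""
    scored = [(cosine_similarity(prompt_vector, vec), name) for name, vec in pages.items()]
    return [name for _, name in sorted(scored, reverse=True)]


prompt_vector = [0.8, 0.1, 0.2]  # hypothetical embedding of a buyer prompt
print(rank_pages(prompt_vector, PAGES))
```

The practical takeaway is that pages are matched on meaning encoded in the vector, not on exact keyword overlap.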

A knowledge graph is a structured map of entities and relationships. A knowledge graph matters because large language models need to understand how a brand, product, market, founder, category, customer use case, and authoritative sources connect.

In practical AI visibility audits, teams frequently discover that their website is only one source in a larger source ecosystem. AI systems may also use documentation, review pages, comparison articles, partner pages, public profiles, industry reports, GitHub repositories, product databases, social profiles, analyst mentions, and indexed search results.

Source consistency helps AI systems connect the same brand, product, category, claims, and use cases across different surfaces. Source consistency matters because inconsistent messaging can weaken LLM visibility, confuse AI-powered Search systems, and produce inaccurate Gemini responses, ChatGPT answers, or Perplexity summaries.

Anthropic explains that contextual retrieval can improve RAG by adding document-level context to chunks before retrieval. Anthropic reported that contextual embeddings and contextual BM25 reduced failed retrievals by 49%, and by 67% when combined with reranking, which shows why source context matters for AI systems. (Anthropic)
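The idea of adding document-level context to a chunk can be sketched as follows. This is a simplified illustration, not Anthropic's implementation: a fixed template stands in for the context that a production pipeline would generate per chunk, and the `Chunk` class, field names, and sample text are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    doc_title: str
    section: str
    text: str


def contextualize(chunk: Chunk) -> str:
    """Prepend document-level context so the chunk is understandable on its own.

    Assumption: a template replaces the model-written context that a real
    contextual retrieval pipeline would produce for each chunk.
    """
    context = f"From '{chunk.doc_title}', section '{chunk.section}'."
    return f"{context} {chunk.text}"


chunk = Chunk(
    doc_title="Example Co Pricing Guide",
    section="Enterprise plan",
    text="The plan includes SSO and audit logs.",
)
indexed_text = contextualize(chunk)  # this string is what would be embedded and indexed
print(indexed_text)
```

Without the prefix, "The plan includes SSO and audit logs" gives a retriever no hint about which company or plan it describes. The same logic applies to public web pages whose sections only make sense with the surrounding page context.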

KEY TAKEAWAY: LLM visibility depends on training data, retrieval quality, source consistency, entity clarity, and authoritative sources, not keyword density alone.

This is why content-centric LLMO focuses on semantic depth, information gain, and citation authority.

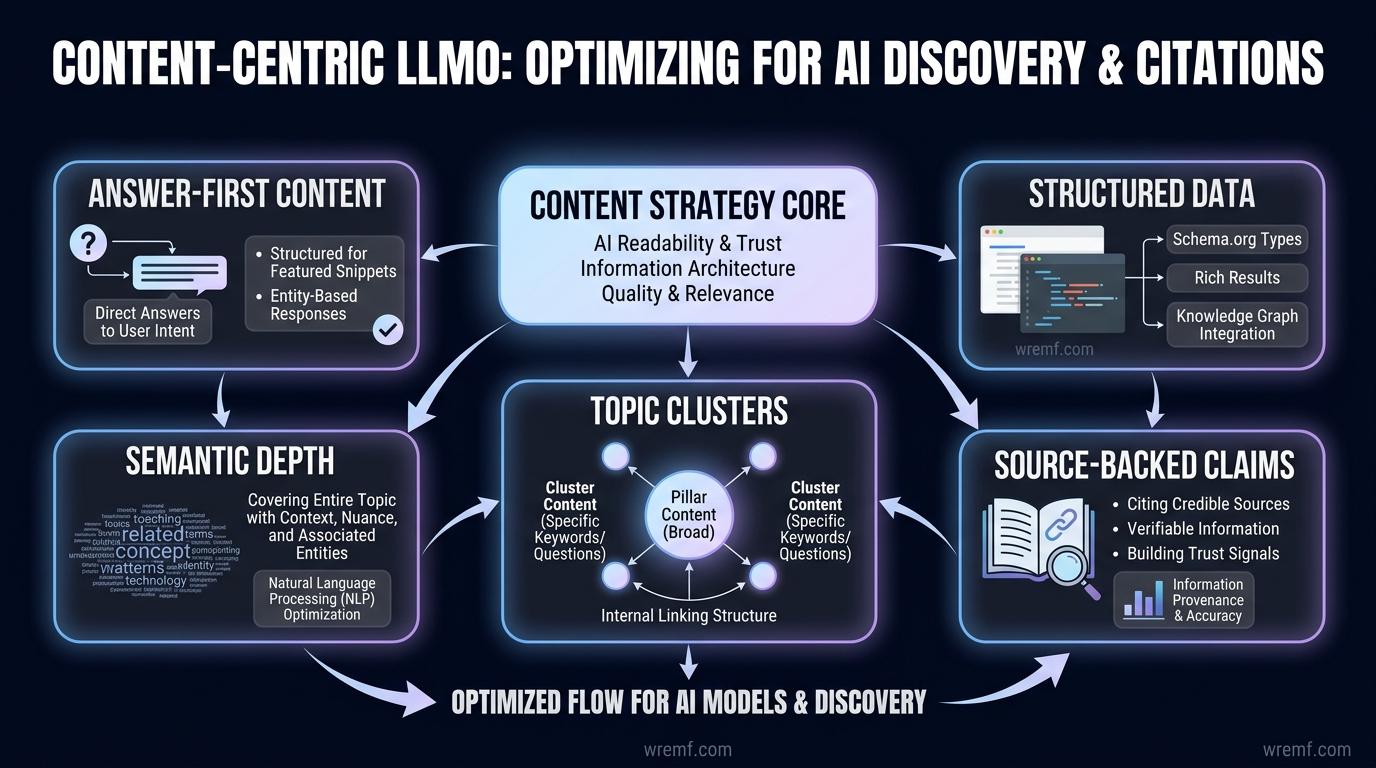

Content-Centric LLMO: Optimizing for AI Discovery and Citations

Content-centric LLMO helps AI systems understand why your brand is relevant, credible, and worth citing. The most effective AI Discovery strategy combines answer-first content, semantic depth, structured data, topic clusters, and source-backed claims.

AI Discovery is the process of being found, summarized, cited, or recommended by AI-powered Search platforms. AI Discovery matters because buyers may form an opinion about your brand before visiting your website.

AI citations are source references attached to AI-generated answers. AI citations matter because they show which sources influenced an answer and give teams a way to measure citation visibility.

Source citations are the pages, websites, documents, or references used by AI systems when generating an answer. Source citations matter because a brand can be mentioned without being cited, cited without being recommended, or recommended without receiving a click.

Brand mentions are references to your company, product, or category inside AI-generated answers. Brand mentions matter because they show whether AI systems associate your brand with relevant buyer prompts.

Semantic depth means covering a topic with enough specificity, relationships, definitions, examples, data, and supporting evidence for AI systems to understand the subject. Semantic depth matters because shallow pages often fail to add enough Information Gain for search engines or AI systems.

Information Gain is the unique value a page adds beyond what already exists in competing results. Information Gain matters because generic copycat content gives AI systems little reason to cite, summarize, or recommend your page.

A strong LLMO content strategy usually includes:

- Clear answer-first definitions
- Topic clusters connected through internal links
- Hub-and-spoke architecture for related pages
- Structured data readiness
- Source-backed claims
- Comparison tables
- Practical workflows
- Author credentials
- Primary research where available
- Reputable organizations cited near factual claims
- Product descriptions that match real buyer language
- Content audits that remove thin or duplicate pages
- Content Model consistency across templates
- AI-ready content briefs for repeatable execution

Content marketing for LLMO is not the same as publishing more articles. Content marketing for LLMO requires entity clarity, original examples, structured answers, and consistent source signals across the brand ecosystem.

Google Search Central explains that helpful, reliable, people-first content is more likely to perform well in Search. That matters for LLMO because AI-powered results and AI Overviews also depend on content that is accessible, useful, and easy to interpret. (Google Search Central)

AI visibility, defined earlier as a brand's measurable presence in AI-generated answers, matters here because AI systems can compress an entire buying journey into one answer that names vendors, compares tradeoffs, and links to sources.

If you want to see how AI engines currently describe a brand, review a sample AI visibility report before building your own measurement workflow.

KEY TAKEAWAY: Content-centric LLMO works when content adds unique value, reinforces entities, answers real buyer prompts, and gives AI systems credible sources to retrieve.

The content layer only works if AI systems can crawl, render, and interpret the pages behind it.

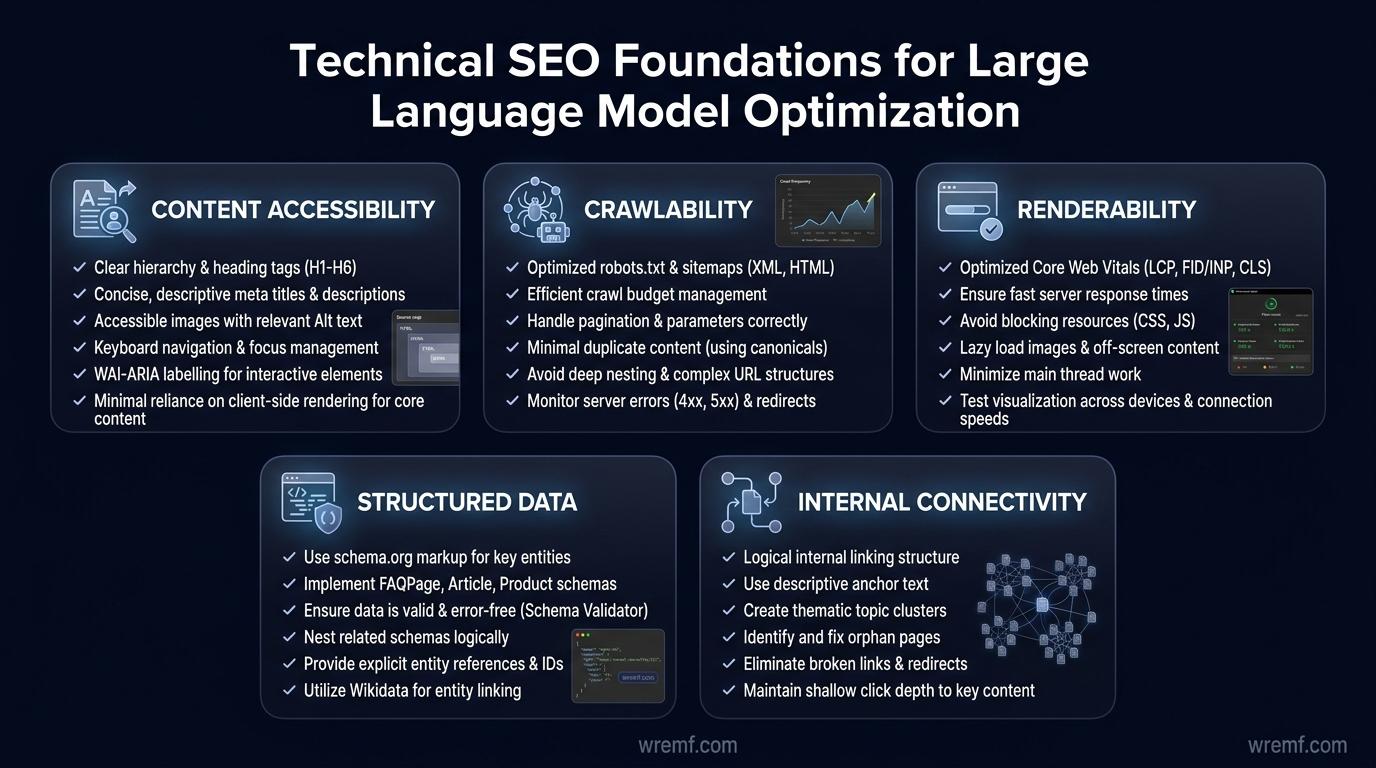

Technical SEO Foundations for Large Language Model Optimization

Technical SEO supports Large Language Model Optimization by making content accessible, crawlable, renderable, structured, and internally connected. If AI systems cannot access or interpret your pages, strong content strategy will not solve the visibility problem.

Technical SEO is the practice of improving site infrastructure so search engines and AI systems can crawl, render, index, and understand content. Technical SEO matters because access comes before interpretation.

Structured data is machine-readable markup that helps systems understand content types, organizations, products, authors, FAQs, events, reviews, and relationships. Structured data matters because it reduces ambiguity for search engines, AI Overviews, and AI Discovery systems.
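For readers who have not seen structured data in practice, here is a minimal sketch that builds schema.org `Organization` markup as JSON-LD. The company name and URLs are placeholders, and the helper function is hypothetical; a real page would usually carry more properties.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> dict:
    """Build a minimal schema.org Organization object as a Python dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),  # public profiles that refer to the same entity
    }


# Placeholder values, not a real company.
markup = organization_jsonld(
    name="Example Co",
    url="https://www.example.com",
    same_as=["https://www.linkedin.com/company/example-co"],
)

# JSON-LD is typically embedded in the page inside a script tag like this one.
script_tag = '<script type="application/ld+json">' + json.dumps(markup, indent=2) + "</script>"
print(script_tag)
```

The `sameAs` list is one way to connect the brand entity on the website to its profiles elsewhere.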

JavaScript rendering is the step in which a crawler executes a page's JavaScript to produce the final HTML it can read. JavaScript rendering matters because important content that exists only after client-side rendering may be harder for crawlers and AI systems to process.

Core Web Vitals are Google metrics related to loading performance, interactivity, and visual stability. Core Web Vitals matter because technical performance affects user experience after discovery and can influence search quality signals.

A practical LLMO technical SEO audit should check:

- Can important pages be crawled?
- Can content be rendered without missing answer blocks?
- Are canonical tags correct?
- Are key pages indexed?
- Are noindex and robots.txt rules blocking useful pages?
- Are headings descriptive and hierarchical?
- Are internal links visible in the HTML?
- Is structured data valid and relevant?
- Are organization, product, service, and author entities clear?
- Are comparison tables easy to extract?
- Are FAQs visible in the main content?
- Are important claims supported by authoritative sources?
- Are templates too thin, duplicated, or programmatic without useful differences?

A common implementation mistake is treating AI visibility as a content-only problem. In real-world reporting, SEO teams frequently discover that important answer blocks are missing from rendered HTML, internal links are not crawlable, or product descriptions are inconsistent across templates.
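A few of these access checks can be sketched with Python's standard library. The example below works on the raw HTML and a robots.txt string passed in as text, so it does not fetch anything and does not execute JavaScript. That limitation is deliberate: a link or answer block that is missing here exists only after client-side rendering. The `audit` function and sample inputs are hypothetical.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class PageSignals(HTMLParser):
    """Collects a robots noindex flag and the links present in raw HTML."""

    def __init__(self) -> None:
        super().__init__()
        self.noindex = False
        self.links: list[str] = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "robots":
            if "noindex" in (attributes.get("content") or "").lower():
                self.noindex = True
        elif tag == "a" and attributes.get("href"):
            self.links.append(attributes["href"])


def audit(robots_txt: str, url: str, raw_html: str, user_agent: str = "*") -> dict:
    """Report crawl permission, noindex status, and links found without rendering."""
    robots = RobotFileParser()
    robots.parse(robots_txt.splitlines())
    signals = PageSignals()
    signals.feed(raw_html)
    return {
        "crawl_allowed": robots.can_fetch(user_agent, url),
        "noindex": signals.noindex,
        "links_in_html": signals.links,
    }


# Sample inputs invented for the example.
ROBOTS = "User-agent: *\nDisallow: /private/\n"
HTML = '<html><head><meta name="robots" content="noindex, follow"></head><body><a href="/pricing">Pricing</a></body></html>'
print(audit(ROBOTS, "https://www.example.com/pricing", HTML))
```

A fuller audit would also compare this raw view with the rendered page, which requires a headless browser and is outside the scope of this sketch.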

The WREMF GEO audit helps teams evaluate crawlability, content extractability, entity clarity, citation gaps, structured data readiness, and AI answer readiness at the URL level. This is useful when teams need to move from theory to page-by-page improvement.

TIP: Start with the homepage, pricing page, category pages, comparison pages, documentation pages, product pages, and highest-impression articles before auditing the full site.

KEY TAKEAWAY: Technical SEO is the access layer of LLM optimization because AI systems need crawlable, renderable, structured, and internally linked content before they can cite or recommend it.

Once the site is technically accessible, retrieval quality becomes the next layer of LLMO.

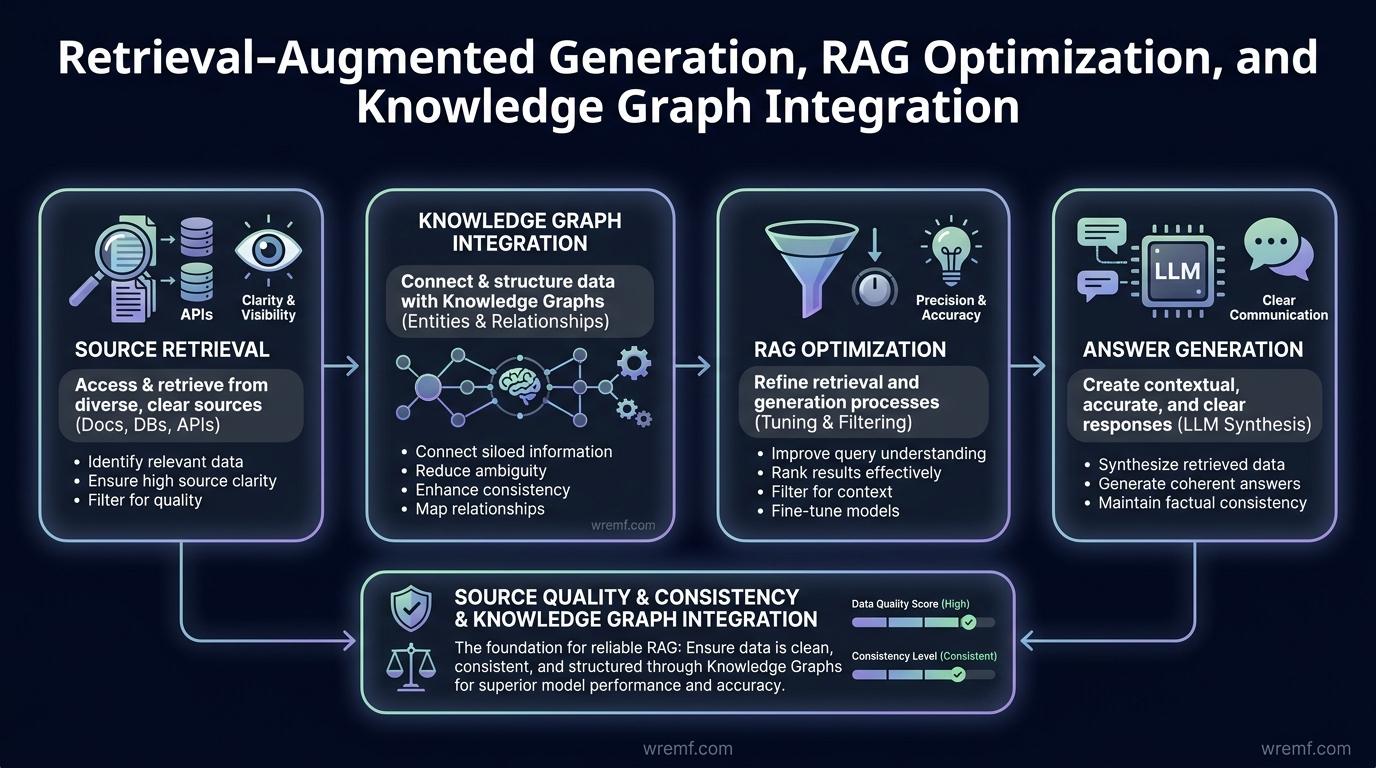

Retrieval-Augmented Generation, RAG Optimization, and Knowledge Graph Integration

Retrieval-Augmented Generation improves LLM output by retrieving relevant sources before generating an answer. For brands, RAG thinking helps explain why source quality, source consistency, and knowledge graph clarity affect AI visibility.

Retrieval-Augmented Generation is a method that connects large language models to external information during answer generation. Retrieval-Augmented Generation matters because it can reduce reliance on static model training data and improve answer grounding.

RAG optimization improves the quality of the retrieval layer. RAG optimization matters because poor retrieval can make a strong model produce weak, outdated, or inaccurate answers.

Knowledge Graph Integration connects entities such as brand, product, category, founder, market, documentation, customer use case, and proof points into a structured relationship model. Knowledge Graph Integration matters because AI systems need to understand not just words, but relationships between entities.

For enterprise AI solutions, RAG services often focus on internal data. Common use cases include customer service knowledge bases, Contact Center AI, support documentation, internal policies, sales enablement materials, product descriptions, data management systems, and conversational AI workflows.

For marketing LLMO, RAG is useful as a source architecture model. Your public source ecosystem acts like a retrieval layer for AI Search. If your homepage says one thing, your product descriptions say another, your review pages are outdated, and your comparison pages are vague, AI systems may retrieve conflicting context.
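One simple way to spot conflicting context is to compare how each public surface labels the brand's category. The sketch below flags any source whose label differs from the most common one. The source names and labels are invented, and real consistency analysis would need fuzzier matching than exact string comparison.

```python
from collections import Counter


def find_inconsistent_sources(descriptions: dict[str, str]) -> dict[str, str]:
    """Return the sources whose category label differs from the dominant label."""
    normalized = {source: text.strip().lower() for source, text in descriptions.items()}
    dominant, _count = Counter(normalized.values()).most_common(1)[0]
    return {
        source: descriptions[source]
        for source, text in normalized.items()
        if text != dominant
    }


# Hypothetical category labels collected from different public surfaces.
CATEGORY_BY_SOURCE = {
    "homepage": "AI visibility platform",
    "product page": "AI Visibility Platform",
    "review profile": "SEO agency",
}
print(find_inconsistent_sources(CATEGORY_BY_SOURCE))
```

In this sample the review profile is the outlier, which makes it the first surface to update.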

A strong public source architecture includes:

- A clear homepage positioning statement
- Product pages with stable category language
- Service pages that explain outcomes and deliverables
- Comparison pages that explain tradeoffs
- Documentation that defines capabilities
- FAQ pages that answer buyer questions
- Case studies or examples where available
- Author and editorial signals
- Internal links between related topic clusters
- Structured data that reinforces entities
- External authoritative sources where claims need support

RAG optimization also requires monitoring and evaluation. Retrieval pipelines should be evaluated for source relevance, answer correctness, hallucination risk, and failure cases. The same idea applies to marketing LLMO: prompt results should be monitored over time because AI systems, sources, and competitor visibility change.
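A minimal evaluation loop for a retrieval pipeline might look like the sketch below. It computes a failed retrieval rate over a small test set, counting a case as failed when the expected source is absent from the top results. The keyword index and `toy_retrieve` function are stand-ins for a real retriever, and the test cases are invented.

```python
from typing import Callable

# Hypothetical keyword index standing in for a real vector or BM25 retriever.
INDEX = {
    "pricing": ["pricing-page", "faq"],
    "integrations": ["docs/integrations"],
}


def toy_retrieve(query: str) -> list[str]:
    """Return documents whose keyword appears in the query (illustrative only)."""
    lowered = query.lower()
    return [doc for keyword, docs in INDEX.items() if keyword in lowered for doc in docs]


def failed_retrieval_rate(
    eval_cases: list[dict], retrieve: Callable[[str], list[str]], k: int = 3
) -> float:
    """Share of cases where the expected source is missing from the top-k results."""
    failures = sum(
        1 for case in eval_cases if case["expected_source"] not in retrieve(case["query"])[:k]
    )
    return failures / len(eval_cases)


EVAL_CASES = [
    {"query": "What does pricing look like?", "expected_source": "pricing-page"},
    {"query": "Is there an SSO option?", "expected_source": "docs/security"},
]
print(failed_retrieval_rate(EVAL_CASES, toy_retrieve))
```

Tracking this number over time, rather than checking it once, is what turns it into monitoring.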

KEY TAKEAWAY: RAG thinking helps marketers understand that AI visibility depends on the quality, consistency, and retrievability of the source ecosystem.

After retrieval, the next challenge is measuring how often AI systems mention, cite, and recommend your brand.

How to Measure LLM Visibility Across AI Search Platforms

LLM visibility is measured by tracking prompts, citations, brand mentions, recommendations, competitors, source consistency, and AI traffic attribution. Rankings alone are incomplete because AI systems generate answers rather than fixed result lists.

Prompt tracking is the process of monitoring how AI systems answer specific questions over time. Prompt tracking matters because it shows whether your brand appears for high-intent prompts across ChatGPT, Claude, Gemini, Perplexity, Google AI Overviews, Copilot, DeepSeek, Grok, Meta AI, Mistral, and other AI discovery surfaces.

AI share of voice measures how often your brand appears compared with competitors across a defined prompt set. AI share of voice matters because B2B buyers often ask AI systems for vendor shortlists, comparisons, tools, alternatives, and recommendations.
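There is no single standard formula for AI share of voice. The sketch below uses one plausible definition: each brand's share of all brand mentions across the answers collected for a prompt set. The brand names and answers are invented, and the substring match is a simplification that would miscount brands whose names appear inside other words.

```python
from collections import Counter


def share_of_voice(answers: list[str], brands: list[str]) -> dict[str, float]:
    """Fraction of all brand mentions that belong to each brand.

    A brand is counted at most once per answer. Assumption: plain
    case-insensitive substring matching is good enough for the sample.
    """
    mentions: Counter = Counter()
    for answer in answers:
        lowered = answer.lower()
        for brand in brands:
            if brand.lower() in lowered:
                mentions[brand] += 1
    total = sum(mentions.values())
    return {brand: (mentions[brand] / total if total else 0.0) for brand in brands}


# Invented AI answers for a four-prompt set.
ANSWERS = [
    "Top tools include Alpha and Beta.",
    "Alpha is a common choice.",
    "Consider Beta or Gamma.",
    "Alpha leads this category.",
]
print(share_of_voice(ANSWERS, ["Alpha", "Beta", "Gamma"]))
```

Whatever definition a team picks, it should stay fixed across reporting periods so that changes reflect visibility rather than methodology.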

AI traffic attribution connects AI discovery to website sessions, conversions, and pipeline signals. AI traffic attribution matters because leadership needs to know whether AI visibility influences targeted web traffic, product interest, or commercial demand.
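A first step toward attribution is labeling sessions by referrer. The sketch below maps a referrer URL to an AI engine using a small host list. The list is an assumption that will need maintenance, and many AI-driven visits arrive with no referrer at all, so this approach undercounts.

```python
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hosts; verify against your own analytics before relying on them.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def classify_referrer(referrer_url: str) -> Optional[str]:
    """Return the AI engine name for a referrer URL, or None if it is not recognized."""
    host = (urlparse(referrer_url).hostname or "").lower()
    for known_host, engine in AI_REFERRER_HOSTS.items():
        # Match the host itself or any subdomain such as www.perplexity.ai.
        if host == known_host or host.endswith("." + known_host):
            return engine
    return None


for referrer in ["https://www.perplexity.ai/search?q=llmo", "https://www.google.com/"]:
    print(referrer, "->", classify_referrer(referrer))
```

Matching on the full host rather than a loose substring keeps ordinary Google Search traffic from being labeled as Gemini.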

Visibility scoring converts prompt results, citations, mentions, competitors, and source quality into a repeatable measurement framework. Visibility scoring matters because teams need a way to compare progress across time, engines, prompts, and markets.

The most useful LLM visibility metrics include:

| Metric | What it measures | Why it matters |
|---|---|---|
| Prompt visibility | Whether your brand appears for tracked prompts | Shows answer inclusion |
| Citation rate | How often your pages are cited | Shows source influence |
| Brand mentions | How often your brand is named | Shows category association |
| Recommendation presence | Whether AI systems recommend your brand | Shows buyer-stage influence |
| AI share of voice | Visibility versus competitors | Shows competitive position |
| Source consistency score | Whether sources describe you consistently | Shows entity clarity |
| AI traffic | Visits from detectable AI referrers | Shows downstream business impact |
| Content gap score | Missing answers or weak coverage | Shows what to improve |
| Competitor visibility | Which competitors appear and why | Shows missed positioning |

Manual testing is not enough for serious LLMO. A team can ask the same prompt in two tools and see different answers because AI systems vary by model, retrieval behavior, location, time, query phrasing, and source availability. Scheduled monitoring makes AI visibility measurement more reliable because it holds the prompt set, engines, and competitor list fixed and samples them at regular intervals.

WREMF combines prompt intelligence, source citation tracking, competitive landscape monitoring, visibility scoring, and action recommendations. This helps teams move beyond screenshots and build an evidence-based LLMO workflow.

KEY TAKEAWAY: LLM visibility should be measured with prompts, citations, mentions, competitors, source consistency, and attribution, not traditional rank tracking alone.

Measurement is useful only when it leads to a ranked improvement workflow.

How to Improve AI Search Visibility With LLM Optimization Services

The most effective way to improve AI Search visibility is to connect measurement to a prioritized action plan. LLM optimization services should identify which prompts matter, which sources influence answers, and which fixes can improve retrieval, citation, and recommendation potential.

AI-powered Search visibility improves when AI systems can clearly identify your brand, category, audience, features, proof points, and differentiators. The goal is not to manipulate AI systems. The goal is to make accurate, useful, and source-backed information easier to retrieve.

A practical LLMO improvement workflow includes seven steps.

Define buyer prompts

Start with prompts that reflect real search behavior and buying intent. Examples include “best AI visibility tools for B2B SaaS,” “how to track brand mentions in ChatGPT,” “GEO agency for SaaS,” “Perplexity visibility services,” and “large language model optimization services for marketing teams.”

Track prompts across major LLMs

Monitor ChatGPT, Claude, Gemini, Perplexity, Google AI Overviews, Copilot, DeepSeek, Grok, Meta AI, Mistral, and other major LLMs. Different AI systems can produce different brand placements because they vary in model behavior, source access, retrieval methods, and answer formatting.

Identify cited sources

Find which pages are cited when your brand appears and when competitors appear. Citation tracking shows whether your own pages, competitor pages, review sites, documentation, or third-party articles are shaping the answer.
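Once citation URLs have been collected from tracked answers, a quick tally by domain shows which sources shape the answer most often. The sketch below assumes the URLs are already extracted; the sample URLs are placeholders.

```python
from collections import Counter
from urllib.parse import urlparse


def cited_domains(citation_urls: list[str]) -> list[tuple[str, int]]:
    """Count citations per domain, most cited first, ignoring a leading 'www.'."""
    domains: Counter = Counter()
    for url in citation_urls:
        host = (urlparse(url).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        if host:
            domains[host] += 1
    return domains.most_common()


# Placeholder citation URLs gathered from AI answers for one prompt set.
CITATIONS = [
    "https://www.example.com/pricing",
    "https://example.com/docs",
    "https://reviews.example.org/acme",
]
print(cited_domains(CITATIONS))
```

Running the same tally separately for answers that mention your brand and answers that mention competitors makes the citation gap visible.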

Fix entity clarity

Clarify your brand name, product category, audience, features, integrations, pricing, locations, services, and differentiation. Entity authority improves when the same facts appear consistently across owned sources and reputable organizations.

Build AI-ready content briefs

AI-ready content briefs should include answer-first headings, definitions, FAQs, comparison tables, source-backed claims, internal links, structured data opportunities, and entity reinforcement. The WREMF content brief workflow helps teams convert prompt and citation gaps into content plans.

Improve source consistency

Update owned sources such as homepage copy, pricing pages, documentation, comparison pages, partner pages, and public profiles. Source consistency helps AI systems resolve ambiguity and reduce inaccurate answers.

Test and report changes

Use scheduled monitoring and SEO testing to see whether visibility changes over time. The WREMF SEO testing feature supports teams that want to connect content updates and AI visibility work to measurable performance changes.

AI visibility is both a measurement problem and a source ecosystem problem. AI visibility improves when teams measure what AI systems say, then improve the sources AI systems use to produce those answers.

KEY TAKEAWAY: LLM optimization services should produce ranked actions tied to prompts, citations, source gaps, competitors, and business priorities.

The delivery model matters because software, agency services, and hybrid execution solve different problems.

Software, Agency, or Hybrid: Which LLMO Model Should You Choose?

Choose LLMO software when you need repeatable tracking, choose an agency when you need execution, and choose a hybrid model when you need both measurement and managed improvement. The best model depends on team capacity, technical depth, and reporting needs.

LLMO software is best for teams that want ongoing visibility monitoring. It helps SEO, content, and growth teams track prompts, AI citations, AI share of voice, competitor visibility, source consistency, AI traffic attribution, and reports without manually testing AI systems every week.
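AI share of voice has no single standard formula. As one hedged working definition, the sketch below counts the tracked answers that mention each brand and divides by the total mentions across all tracked brands. The brand names are placeholders, and plain substring matching is a simplification that can miscount short or ambiguous names.

```python
from collections import Counter


def share_of_voice(responses: list[str], brands: list[str]) -> dict[str, float]:
    """Share of brand mentions per brand across a set of AI answers.

    A brand is counted at most once per answer. This definition is an
    assumption for illustration, not an industry standard.
    """
    counts: Counter = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1
    total = sum(counts.values())
    return {b: (counts[b] / total if total else 0.0) for b in brands}
```

Tracking this number per prompt cluster, rather than as one global figure, usually makes competitor movements easier to interpret.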

LLMO agency services are best for teams that need strategy, audits, content optimization, entity and authority building, citation improvement, internal linking logic, schema guidance, crawl and rendering checks, digital PR support, monthly reporting, and execution. Agency support is especially useful when internal teams lack time to convert insights into published improvements.

A hybrid LLMO model combines software with managed execution. This is often the best fit for B2B SaaS companies, agencies, consultants, and growth teams that need both data and implementation.

| Model | Best for | What you get | Main limitation | Recommended when |
|---|---|---|---|---|
| Software | In-house SEO and marketing teams | Tracking, dashboards, alerts, reports | Requires internal execution | You have team capacity |
| Agency | Brands needing strategy and execution | Audits, briefs, fixes, reporting, consulting | Less self-serve control | You need senior-led support |
| Hybrid | Growth teams and agencies | Software plus managed execution | Requires clear priorities | You need measurement and action |
| Manual testing | Early exploration | Low-cost learning | Not scalable or reliable | You are validating demand |

WREMF can be used as software, as an agency service, or as combined software plus managed execution. For teams that need consulting and implementation, the WREMF agency team supports AI visibility strategy, GEO consulting, AEO consulting, content optimization, source consistency cleanup, citation improvement, technical foundations, and monthly execution.

For buying-stage evaluation, the WREMF pricing page includes Starter, Growth, and Enterprise plans. Starter supports one website, Growth supports five websites, and Enterprise supports unlimited websites, unlimited prompt tracking, custom branded portals, unlimited seats, and dedicated support.

IMPORTANT: Avoid providers that guarantee AI citations, rankings, revenue, or traffic. AI visibility can be measured and improved, but no vendor controls every model, retrieval system, source, or algorithm shift.

KEY TAKEAWAY: Software is best for repeatable measurement, agency support is best for execution, and hybrid LLMO is best when teams need both insight and implementation.

The next decision is whether your problem is marketing visibility or technical model performance.

Technical LLM Optimization Services for AI Performance and Efficiency

Technical LLM optimization services improve the cost, speed, accuracy, safety, and reliability of AI applications. These services are most relevant when a company builds, deploys, or operates AI models, not only when it wants better AI Search visibility.

LLMOps is the operating discipline for managing large language models across development, model deployment, monitoring and evaluation, governance, and improvement. LLMOps matters because production AI systems need reliability, cost control, security, compliance, and continuous evaluation.

Large language model operations include prompt management, version control, evaluation datasets, retrieval monitoring, feedback loops, latency tracking, hallucination checks, model deployment workflows, model fine-tuning pipelines, and security and compliance controls.

MLOps is the broader practice of managing machine learning systems. MLOps matters here because LLMOps builds on it, extending traditional machine learning operations with prompt evaluation, RAG monitoring, model behavior testing, and token cost management.
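Token cost management is mostly arithmetic, so a small estimate helps make it concrete. The sketch below assumes a provider that bills separately per million input and output tokens. All prices and volumes in the usage note are made-up inputs, not real vendor rates.

```python
def monthly_token_cost(
    requests_per_day: int,
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
    days: int = 30,
) -> float:
    """Estimate monthly spend from average tokens per request.

    Prices are per one million tokens, in whatever currency you pass in.
    """
    per_request = (
        input_tokens * input_price_per_million
        + output_tokens * output_price_per_million
    ) / 1_000_000
    return per_request * requests_per_day * days
```

For example, 1,000 requests per day at 2,000 input and 500 output tokens, with hypothetical prices of 1.00 and 4.00 per million tokens, works out to 0.004 per request and about 120 per 30-day month.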

Model training is the process of teaching AI models from training data. Model training matters because the quality, coverage, and governance of training data influence model behavior, natural language understanding, output consistency, and domain reliability.

Model deployment is the process of making an AI model available in production. Model deployment matters because infrastructure, latency, access control, monitoring, and compliance determine whether AI systems can serve real users.

Technical LLM optimization techniques include:

- Model fine-tuning for domain-specific behavior

- Quantization to reduce memory and compute needs

- Distillation to transfer behavior into smaller AI models

- Pruning to reduce model size

- Request batching to improve throughput

- KV caching to reuse attention data

- Speculative inference to accelerate generation

- Distributed training for large-scale training workloads

- Decode-phase optimization for response generation

- Retrieval evaluation for RAG systems

- Monitoring and evaluation for production reliability

- Security and compliance controls for enterprise AI solutions

Platforms and tools such as Vertex AI, Vertex AI Agent Builder, Hugging Face, NVIDIA AI, Google DeepMind ecosystems, Databricks AI, and Apache Spark are often part of technical LLM optimization workflows. These platforms support model training, model deployment, data processing, agent building, infrastructure scaling, and AI application governance. Google Cloud describes Vertex AI as a platform for training and deploying machine learning models and AI applications. (Google Cloud Vertex AI)
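Of the techniques listed in this section, quantization is one of the easiest to illustrate. The sketch below shows symmetric 8-bit quantization of a small list of weights in plain Python. It is a teaching example under simplified assumptions (one scale for the whole list, no calibration), not how any specific framework implements it.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Avoid dividing by zero when every weight is 0.0 or the list is empty.
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integer codes."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    original = [0.5, -1.0, 0.25, 0.0]
    codes, scale = quantize_int8(original)
    restored = dequantize(codes, scale)
    worst = max(abs(a - b) for a, b in zip(original, restored))
    print(f"scale={scale:.6f} worst_error={worst:.6f}")
```

Each weight is stored in one byte instead of four, and the rounding error per weight stays within half of the scale. That is the basic trade of memory for precision that quantization makes.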

KEY TAKEAWAY: Technical LLM optimization is essential for AI applications, but it should not be confused with LLMO services focused on AI discovery, citations, and brand visibility.

Many companies need alignment between CTO and CMO teams because both sides depend on clean data and trusted sources.

How CTO and CMO Teams Should Align on Large Language Model Optimization

CTO and CMO teams should align LLM optimization around accurate AI responses, efficient systems, trusted sources, measurable visibility, and business impact. LLMO fails when engineering and marketing solve isolated problems.

For CTO teams, the focus is usually infrastructure, model deployment, latency, LLM inference cost, data management, security and compliance, RAG quality, model fine-tuning, and monitoring and evaluation. These teams need AI systems that are reliable, governed, scalable, and cost-efficient.

For CMO teams, the focus is AI Search visibility, content marketing, AI Overviews, brand exposure, source citations, digital PR, structured data, topic clusters, semantic depth, competitor visibility, and targeted web traffic. These teams need buyers and AI systems to understand the brand accurately.

The overlap is source quality. Engineering teams need clean data for RAG, customer support, Contact Center AI, and conversational AI. Marketing teams need clear public sources for AI discovery, brand placements, product descriptions, and reputation management. Both teams benefit from structured data, knowledge graph clarity, consistent source architecture, and trusted content.

A shared LLMO scorecard can include:

| Metric category | CTO view | CMO view |
|---|---|---|
| Accuracy | Better retrieval and fewer hallucinations | More accurate brand descriptions |
| Efficiency | Lower inference cost and lower latency | Faster content and reporting workflows |
| Visibility | Reliable source access | More citations and recommendations |
| Governance | Security and compliance | Consistent claims and approved messaging |
| Business value | Lower operating cost | Better demand capture and pipeline influence |

In practical AI visibility audits, the best projects often combine both perspectives. Documentation improvements can help customer support AI, improve natural language understanding, strengthen product-led content, support sales enablement, and improve source citations for AI Search.

The WREMF methodology connects prompts, citations, competitors, source consistency, AI traffic attribution, and recommendations into one repeatable system. This helps marketing, SEO, growth, and agency teams explain LLMO in business terms without ignoring technical constraints.

KEY TAKEAWAY: LLM optimization works best when technical teams improve AI system quality and marketing teams improve source quality, entity clarity, and AI visibility.

That alignment becomes more important as AI agents and multimodal search reshape discovery.

The Future of LLMO: Agentic Workflows, AI Overviews, and Multimodal Search

The future of LLMO is moving toward agentic workflows, multimodal search, machine-readable data, and automated reporting. Brands will need sources that AI agents can retrieve, compare, evaluate, and act on.

AI Overviews are AI-generated search summaries that provide key information and links for deeper exploration. AI Overviews matter because they can influence discovery before a user clicks a traditional organic result.

Search Generative Experience was Google’s earlier generative search experiment that helped introduce AI-powered results and later AI Overviews. Search Generative Experience matters because it showed the transition from classic search results toward AI-generated summaries.

Agentic workflows are AI-driven processes where agents plan, retrieve information, compare options, complete tasks, and report outcomes. Agentic workflows matter because AI discovery may shift from “find a vendor” to “shortlist vendors, compare pricing, check sources, and contact the best fit.”

Multimodal search expands discovery beyond text into voice, images, video, documents, and structured product data. For content strategy, this means brands need consistent text, structured data, product descriptions, documentation, and proof points that AI systems can interpret across formats.

The rise of AI agents increases the value of machine-readable data structures. A messy website may still be understandable to a human, but AI agents need clear pages, stable navigation, explicit entities, structured data, and reliable sources to complete tasks accurately.

Automated reporting and predictive trend analysis will also become more important. As algorithm shifts happen across AI systems, teams will need monitoring workflows that detect visibility changes, competitor movements, citation changes, and source consistency issues before traffic or pipeline impact becomes obvious.
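A monitoring workflow of this kind can start very simply. The sketch below flags any period where a visibility score falls well below its recent average. The window size, the 20% threshold, and the idea of a single numeric score per period are all assumptions chosen for illustration.

```python
from statistics import mean


def detect_drops(
    series: list[float], window: int = 4, threshold: float = 0.2
) -> list[int]:
    """Return indexes where a value drops by at least `threshold`
    (as a fraction) relative to the mean of the previous `window` values."""
    alerts = []
    for i in range(window, len(series)):
        baseline = mean(series[i - window:i])
        if baseline > 0 and (baseline - series[i]) / baseline >= threshold:
            alerts.append(i)
    return alerts


if __name__ == "__main__":
    weekly_visibility = [0.5, 0.5, 0.5, 0.5, 0.48, 0.3, 0.5]
    print(detect_drops(weekly_visibility))
```

A rolling baseline tolerates normal week-to-week variation while still surfacing sharp declines early enough to investigate which prompts or sources changed.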

KEY TAKEAWAY: Future-ready LLMO requires clear sources, structured entities, measurable AI visibility, and content that AI systems can retrieve across text, search, and agentic workflows.

Before choosing a provider, teams should understand the myths that often lead to poor LLMO decisions.

Common Myths About AI Visibility Debunked

AI visibility is measurable, improvable, and connected to SEO, but it is not the same as traditional rankings. The biggest mistakes come from treating LLMO as magic, pure SEO, or pure engineering.

MYTH: SEO, AEO, GEO, and LLMO are all the same thing.

FACT: SEO focuses on search engine visibility, AEO focuses on answer extraction, GEO focuses on generative AI citations and recommendations, and LLMO can include both model optimization and brand visibility. The overlap is real, but the metrics are different. A strong strategy connects all four instead of replacing one with another.

MYTH: AI visibility is impossible to measure.

FACT: AI visibility can be measured through prompt tracking, source citations, brand mentions, recommendation presence, AI share of voice, competitor visibility, source consistency, and AI traffic attribution. The measurement is probabilistic because AI systems change, but scheduled tracking is more reliable than manual screenshots.
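Because the measurement is probabilistic, it helps to report a range rather than a single rate. The sketch below uses the Wilson score interval, a standard statistical method, to estimate how often a brand appears when the same prompt is run many times. Treating repeated runs as independent trials is a simplifying assumption.

```python
import math


def wilson_interval(hits: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a mention rate of hits/runs.

    z=1.96 corresponds to roughly 95% confidence.
    """
    if runs == 0:
        return (0.0, 1.0)
    p = hits / runs
    denom = 1 + z**2 / runs
    center = (p + z**2 / (2 * runs)) / denom
    margin = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return (max(0.0, center - margin), min(1.0, center + margin))


if __name__ == "__main__":
    low, high = wilson_interval(hits=12, runs=20)
    print(f"mention rate is plausibly between {low:.2f} and {high:.2f}")
```

With only 20 runs the interval is wide, which is a useful reminder that a handful of manual checks cannot show whether visibility really changed.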

MYTH: Rankings are enough if your SEO is strong.

FACT: Rankings still matter, but AI systems may cite sources that are not in the top organic position, summarize several sources, or mention competitors even when your page ranks well. AI Overviews, ChatGPT Search, Perplexity, Gemini responses, and other AI-powered results make citations, source quality, and entity clarity separate measurement layers.

MYTH: LLM optimization is only for companies building AI models.

FACT: Technical LLM optimization matters for companies building AI applications, but brand-focused LLMO matters for any company that wants to be found in AI Search. B2B SaaS brands, agencies, consultants, eCommerce store owners, and content teams can use LLMO without training their own foundation model.

MYTH: More content automatically improves LLM visibility.

FACT: More content only helps when it improves semantic depth, Information Gain, source consistency, and answer usefulness. Copycat articles, duplicated product descriptions, weak topic clusters, and vague claims can dilute AI visibility instead of improving it.

KEY TAKEAWAY: LLMO is not a replacement for SEO or a guaranteed citation system. It is a measurable discipline for improving how AI systems understand, retrieve, cite, and recommend your brand.

The final selection step is knowing how to evaluate providers of large language model optimization services.

How to Choose a Large Language Model Optimization Services Provider

Choose a large language model optimization services provider based on the problem you need solved: model performance, AI visibility, retrieval quality, or managed execution. The best provider should show methodology, metrics, limitations, and clear deliverables.

A good LLMO provider should be able to explain:

- Which AI systems they track

- Which prompts they monitor

- How they identify AI citations and source citations

- How they compare competitor visibility

- How they distinguish brand mentions from recommendations

- How they evaluate source consistency

- How they connect AI visibility to traffic or pipeline

- How they prioritize content, technical, and source fixes

- How they report progress over time

- What they cannot guarantee

For technical LLMO, evaluate experience with model fine-tuning, LLM inference, cloud platforms, vector embeddings, RAG, LLMOps, security and compliance, data management, training data, model deployment, and monitoring and evaluation.

For marketing LLMO, evaluate experience with SEO services, Answer Engine Optimization, AI Search, AI Overviews, content strategy, structured data, knowledge graph clarity, source citations, digital PR, reputation management, content audits, content briefs, and AI share of voice.

Agencies managing multiple clients often need white-label reporting, client portals, repeatable audits, scheduled monitoring, and clear action plans. In-house brands often need visibility scoring, prompt tracking, competitor monitoring, content briefs, and executive reporting.

WREMF helps B2B teams track, improve, and prove AI visibility across major AI discovery surfaces. The platform supports software use cases, the WREMF agency model supports managed execution, and the WREMF API supports integrations, MCP workflows, custom reporting, and technical workflows.

| Provider type | Best for | What to verify before buying |
|---|---|---|
| Technical AI consultancy | Model performance and AI infrastructure | Engineering depth, deployment experience, security process |
| SEO or content agency | Content strategy and organic visibility | AEO, GEO, structured data, citation tracking capability |
| AI visibility platform | Repeatable measurement and reporting | Engines tracked, prompt workflows, citation data, reporting |
| Hybrid LLMO partner | Measurement plus execution | Clear deliverables, methodology, pricing, and ownership |

KEY TAKEAWAY: The right LLMO provider should match your real need, show measurable workflows, and turn AI visibility data into practical improvements without promising guaranteed outcomes.

The most common questions about LLMO are answered below.

Frequently Asked Questions

What are large language model optimization services?

Large language model optimization services improve how large language models perform, retrieve information, cite sources, and represent brands in AI-generated answers. In technical contexts, these services include model fine-tuning, inference efficiency, LLMOps, RAG, vector embeddings, and model deployment. In marketing contexts, they include AI visibility tracking, prompt intelligence, source citations, Answer Engine Optimization, Generative Engine Optimization, structured data, content strategy, and competitor visibility. WREMF focuses on the brand visibility side while connecting insights to practical content, citation, and reporting workflows.

What is Large Language Model Optimization?

Large Language Model Optimization is the process of improving how language models generate, retrieve, evaluate, cite, and present information. The term can refer to technical optimization, such as reducing latency or improving inference efficiency, or marketing optimization, such as improving AI Search visibility and citations. The best definition depends on the use case. A CTO may use LLM optimization to reduce infrastructure costs. A CMO may use LLM optimization to improve brand visibility across ChatGPT, Claude, Gemini, Perplexity, and Google AI Overviews.

Why is the definition of LLMO tricky?

The definition of LLMO is tricky because it sits between machine learning, search, content strategy, and digital marketing. Technical teams often use LLMO to mean model fine-tuning, LLMOps, inference efficiency, and RAG. Marketing teams often use LLMO to mean AI Search visibility, AI citations, Answer Engine Optimization, Generative Engine Optimization, and source consistency. Both meanings are valid. The important step is clarifying whether the goal is better AI system performance, better brand visibility, or both.

What is the difference between LLMO and SEO?

LLMO improves how AI systems understand, cite, and recommend a brand, while SEO improves visibility in search engines. SEO usually measures rankings, impressions, clicks, Core Web Vitals, and organic conversions. LLMO measures prompt visibility, AI citations, brand mentions, source consistency, AI share of voice, and recommendation presence. The two disciplines overlap because both need accessible, helpful, structured content. The main difference is that LLMO optimizes for generated answers, not only ranked links.

How is LLMO different from Answer Engine Optimization?

Answer Engine Optimization focuses on helping systems extract direct answers from content. LLMO is broader because it can include AEO, GEO, prompt tracking, AI citations, competitor visibility, RAG thinking, source consistency, and technical model performance. AEO is useful for FAQs, definitions, featured snippets, and direct answer blocks. LLMO is useful when a brand needs to understand how AI systems mention, cite, compare, and recommend it across multiple AI discovery surfaces.

How is LLMO different from Generative Engine Optimization?

Generative Engine Optimization focuses on visibility inside generative AI answers, including citations, summaries, recommendations, and brand placements. LLMO can include GEO but also covers technical LLM performance, RAG optimization, LLMOps, model fine-tuning, and inference efficiency. For marketing teams, GEO is often the most relevant part of LLMO. For engineering teams, model deployment and inference optimization may be more important. A complete strategy separates these tracks instead of mixing every task into one vague service.

Can you optimize for RAG-based large language models?

Yes, you can optimize for RAG-based large language models by improving the quality, structure, consistency, and retrievability of the sources they use. For private enterprise AI systems, this means improving knowledge bases, embeddings, chunking, metadata, reranking, and evaluation. For public AI Search visibility, this means improving owned pages, documentation, product descriptions, structured data, comparison content, and trusted third-party sources. You cannot control every retrieval system, but you can improve the source ecosystem AI systems are likely to retrieve.
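Chunking is the most approachable of those private RAG steps. The sketch below splits text into overlapping word-based chunks so that a sentence cut at one boundary still appears whole in a neighboring chunk. The sizes are illustrative defaults, and production systems often chunk by tokens, headings, or semantic boundaries instead.

```python
def chunk_words(text: str, size: int = 50, overlap: int = 10) -> list[str]:
    """Split text into chunks of up to `size` words, where each chunk
    repeats the last `overlap` words of the previous one."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("size must be positive and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        # Stop once this chunk has reached the end of the text.
        if start + size >= len(words):
            break
    return chunks
```

Larger overlaps improve the odds that a retrieved chunk contains the full answer, at the cost of storing and embedding more duplicated text.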

What is LLMOps?

LLMOps means large language model operations. It covers the practices used to deploy, monitor, evaluate, govern, and improve large language models in production. LLMOps can include prompt management, model deployment, retrieval monitoring, hallucination testing, feedback loops, cost tracking, security and compliance, and monitoring and evaluation. LLMOps is most important for companies operating AI applications. Marketing teams may not need full LLMOps unless they also manage AI products, chatbots, or internal AI systems.

What is the difference between LLMOps and MLOps?

MLOps is the broader discipline for managing machine learning systems, while LLMOps focuses specifically on large language models. MLOps includes data pipelines, model training, model deployment, monitoring, and reliability. LLMOps adds prompt evaluation, RAG monitoring, token cost management, hallucination checks, model behavior testing, and AI safety concerns. In practice, LLMOps extends MLOps for Generative AI systems. For marketing visibility, prompt tracking and source citation monitoring are usually more relevant than full LLMOps infrastructure.

What is Retrieval-Augmented Generation in LLM optimization?

Retrieval-Augmented Generation is a method where an AI system retrieves relevant external information before generating an answer. In technical LLM optimization, RAG helps enterprise AI systems use private documents, customer service content, product data, and internal knowledge bases. In marketing LLMO, RAG is useful as a source ecosystem model because public AI Search systems also depend on retrievable sources. Clear, consistent, authoritative sources make it easier for AI systems to produce accurate answers about a brand.

How do AI citations differ from brand mentions?

AI citations are source references that support an AI-generated answer, while brand mentions are references to a brand inside the response text. A brand can be mentioned without being cited, cited without being recommended, or recommended without receiving traffic. That is why LLM visibility measurement should separate citations, mentions, recommendations, and traffic attribution. Source citation tracking shows which pages influence answers. Brand mention tracking shows whether AI systems associate your company with relevant prompts.
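To keep mentions and citations separate in reporting, each stored answer can be tagged with both signals independently. The sketch below assumes you already have the answer text and its list of cited URLs. The brand name and domain are placeholders, and detecting an actual recommendation would need more than this simple text match.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class AnswerSignals:
    mentioned: bool  # brand name appears in the answer text
    cited: bool      # at least one cited URL belongs to the brand's domain


def detect_signals(
    answer_text: str, cited_urls: list[str], brand_name: str, brand_domain: str
) -> AnswerSignals:
    """Tag one AI answer with separate mention and citation flags."""
    mentioned = brand_name.lower() in answer_text.lower()
    domain = brand_domain.lower()
    hosts = [(urlparse(u).hostname or "").lower() for u in cited_urls]
    cited = any(h == domain or h.endswith("." + domain) for h in hosts)
    return AnswerSignals(mentioned=mentioned, cited=cited)
```

Counting the four combinations of these two flags across a prompt set shows, for example, how often a brand is named while a third-party page gets the citation.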

Do large language model optimization services actually improve AI visibility?

Large language model optimization services can improve AI visibility when they are tied to measurable prompts, source citations, competitor gaps, and content improvements. They should not be judged by vague claims or one-time screenshots. Effective LLMO identifies which questions buyers ask, how AI systems answer them, which sources are cited, and what changes can improve entity clarity or citation authority. No provider should guarantee AI recommendations, but disciplined measurement and source improvement can make AI visibility more manageable.

How much do LLM optimization services cost?

The cost of LLM optimization services varies widely because technical model optimization and marketing LLMO are different service categories. Technical LLMO can involve engineering, cloud platforms, infrastructure, model deployment, security and compliance, and custom AI development. Marketing LLMO can involve software subscriptions, audits, content briefs, managed execution, and reporting. WREMF pricing starts with Starter at €39 per month for one website and Growth at €89 per month for five websites, with Enterprise plans for unlimited websites, custom branded portals, unlimited seats, and dedicated support.

Should a B2B company hire an LLMO agency or use software?

A B2B company should use LLMO software when it has internal capacity to act on insights, and hire an agency when it needs strategy, content execution, technical audits, or reporting support. A hybrid model is often best when leadership wants measurable AI visibility but the team lacks time to turn findings into briefs, page updates, internal links, source consistency fixes, and reporting. WREMF supports software, agency, and hybrid workflows, so brands and agencies can choose the operating model that fits their team.

What should an LLMO audit include?

An LLMO audit should include prompt visibility, AI citations, competitor visibility, source consistency, entity clarity, technical crawlability, structured data readiness, content gaps, and attribution opportunities. It should identify which prompts matter most commercially and which pages or sources need improvement. A useful audit does not stop at diagnosis. It should rank actions by likely impact, effort, and business relevance. WREMF’s GEO audit and methodology are designed to turn AI visibility findings into a practical improvement plan.
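Ranking actions by impact, effort, and business relevance can be as simple as a scored list. The sketch below uses one possible formula, impact times relevance divided by effort, on 1 to 5 scales. Both the formula and the sample actions are assumptions for illustration, not WREMF's methodology.

```python
from dataclasses import dataclass


@dataclass
class Action:
    name: str
    impact: int     # expected visibility gain, 1 (low) to 5 (high)
    effort: int     # work required, 1 (low) to 5 (high)
    relevance: int  # commercial importance of affected prompts, 1 to 5

    def __post_init__(self) -> None:
        if self.effort < 1:
            raise ValueError("effort must be at least 1")

    @property
    def score(self) -> float:
        """Higher is better: more impact and relevance per unit of effort."""
        return (self.impact * self.relevance) / self.effort


def rank_actions(actions: list[Action]) -> list[Action]:
    """Sort audit actions from highest to lowest priority score."""
    return sorted(actions, key=lambda a: a.score, reverse=True)
```

The exact weights matter less than agreeing on them up front, so that the audit ends in an ordered backlog rather than a flat list of findings.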

Which companies need large language model optimization services?

Companies need large language model optimization services when buyers, customers, or employees use AI systems to research, compare, summarize, or answer questions about their market. B2B SaaS companies, agencies, consultants, eCommerce stores, customer support teams, enterprise AI teams, and content marketing teams can all benefit. The right service depends on the problem. A company building AI systems may need model fine-tuning or LLMOps. A company trying to appear in AI Search may need prompt tracking, AEO, GEO, citations, and source consistency work.

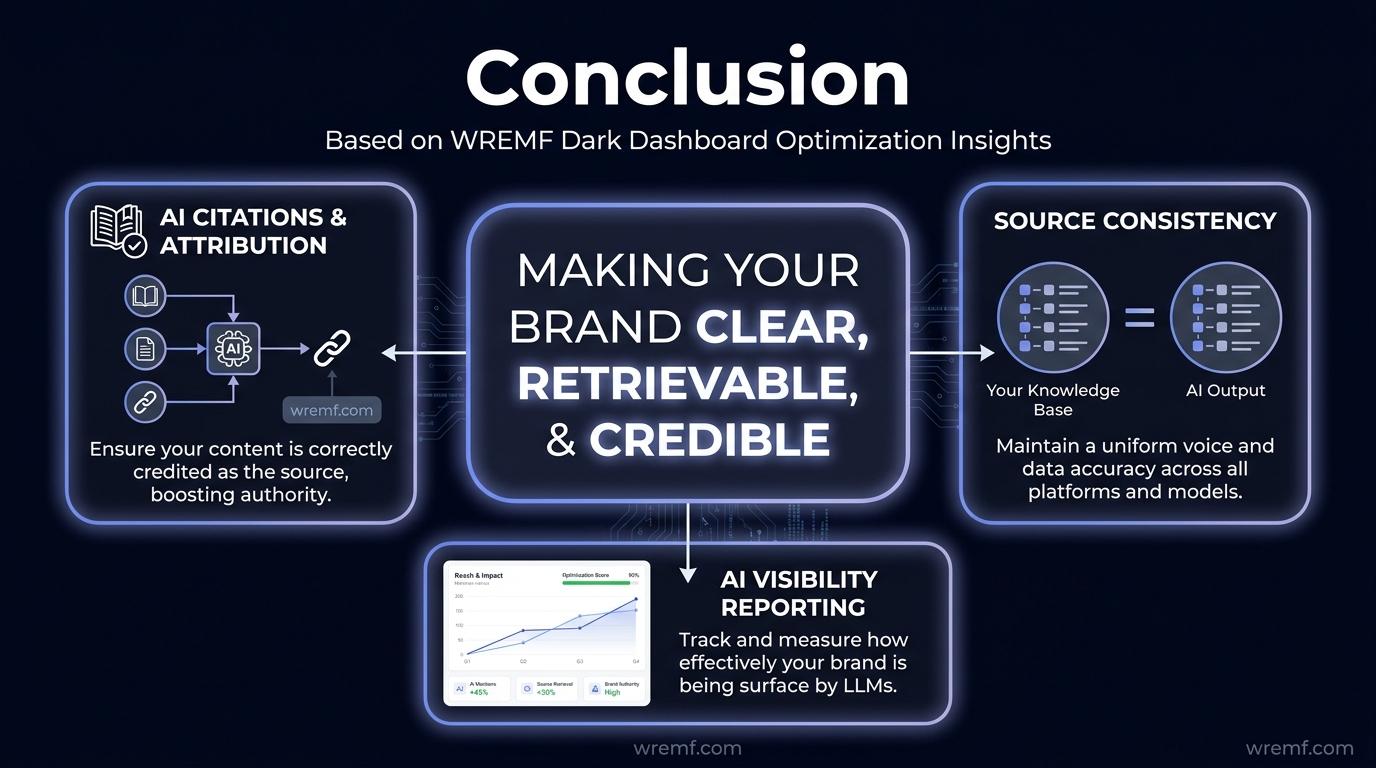

Conclusion

Large language model optimization services matter because AI systems now shape how buyers discover, compare, and trust brands. The discipline includes technical LLM performance, Retrieval-Augmented Generation, LLMOps, Answer Engine Optimization, Generative Engine Optimization, AI citations, source consistency, content strategy, and AI visibility reporting. The strongest approach is not to chase keywords alone, but to make your brand clear, retrievable, credible, and measurable across major AI discovery surfaces. To turn LLMO from a guessing game into a repeatable workflow, explore the WREMF platform suite or talk to the WREMF agency team.

Related Generative Engine Optimization Guides

- LLM SEO Agency: The Complete Guide to Choosing an Agency for AI Search Visibility

- AI SEO Agency: How to Choose the Right Partner for AI Search Visibility

- LLM SEO Services: The Complete 2026 Guide to AI Search Visibility, AEO, GEO, and LLM Optimization

- Generative AI Optimization Services: The Complete Guide to GEO, AEO, LLM Optimization, and AI Visibility

- Answer Engine Optimization: The Complete Guide to AEO, AI Search Visibility, and Answer-First Content

- AI Overview Optimization: How to Rank, Get Cited, and Stay Visible in Google AI Search

- AI SEO Tools: The Complete Guide for SEO, AEO, GEO, and AI Search Visibility

- Answer Engine Optimization Services: The Complete Guide to AI Search Visibility

- Enterprise Answer Engine Optimization Platforms: Complete Guide for AI Visibility, AEO, and GEO

- AI Overview SEO: How to Optimize for Google AI Overviews, AI Mode, and AI Search Visibility

- AI SEO Services: The Complete Guide to Search Visibility in the AI Era

- AI Search Engine Optimization Services: The Complete Guide for B2B Brands

- Best Answer Engine Optimization for Enhancing AI Visibility

- AI Brand Monitoring: The Complete Guide to Tracking Brand Visibility Across AI Search, LLMs, and Generative Engines

- AI Mention Tracking: The Complete Guide to Monitoring Brand Mentions, AI Answers, Citations, and Share of Voice in 2026

Entities Covered

- ChatGPT

- Claude

- Gemini

- Perplexity

- Google AI Overviews

- Copilot

- DeepSeek

- Grok

- Meta AI

- Mistral

- Retrieval-Augmented Generation

- Answer Engine Optimization

- Generative Engine Optimization

- LLMOps

- Knowledge Graph Integration

- Vector Embeddings

- Foundation Model

- Model Fine-Tuning

- Inference Efficiency

- Structured Data

- Core Web Vitals

- Source Consistency

- AI Discovery

- Brand Mentions

- Information Gain

- Semantic Depth

Mentions

Brands mentioned

- WREMF

- OpenAI

- Anthropic

- Gartner

- NVIDIA

Tools mentioned

- ChatGPT

- Claude

- Gemini

- Perplexity

- Google AI Overviews

- Copilot

- DeepSeek

- Grok

- Meta AI

- Mistral

- ChatGPT Search

- Google Search Central

Sources

- https://www.gartner.com/en/newsroom/press-releases/2024-02-19-gartner-predicts-search-engine-volume-will-drop-25-percent-by-2026-due-to-ai-chatbots-and-other-virtual-agents

- https://wremf.com/suite

- https://developers.google.com/search/docs/appearance/ai-features

- https://support.google.com/websearch/answer/14901683?hl=en

- https://help.openai.com/en/articles/9237897-chatgpt-search

- https://developer.nvidia.com/blog/introducing-new-kv-cache-reuse-optimizations-in-nvidia-tensorrt-llm/

- https://www.anthropic.com/news/contextual-retrieval

- https://developers.google.com/search/docs/fundamentals/creating-helpful-content

- https://wremf.com/sample-report

- https://wremf.com/features/geo-audit

- https://wremf.com/suite/prompt-intelligence

- https://wremf.com/suite/source-citations

- https://wremf.com/suite/competitive-landscape

- https://wremf.com/features/content-briefs

- https://wremf.com/features/seo-testing

- https://wremf.com/agency

- https://wremf.com/pricing

- https://cloud.google.com/vertex-ai/docs

- https://wremf.com/methodology

- https://www.google.com/url?q=https://wremf.com/agency&sa=D&source=editors&ust=1778180427565708&usg=AOvVaw0lXa5cAsc-U-trP7m_CYbD

- https://www.google.com/url?q=https://wremf.com/api&sa=D&source=editors&ust=1778180427565998&usg=AOvVaw2CDVw3aqaRibLGezDll8zS

- https://www.google.com/url?q=https://wremf.com/api&sa=D&source=editors&ust=1778180427566152&usg=AOvVaw1K3nfIZx9PYkOMPtw1fW3l

- https://www.google.com/url?q=https://wremf.com/suite&sa=D&source=editors&ust=1778180427589586&usg=AOvVaw1nDWPUMn7YTrcaXyuedEs6

- https://www.google.com/url?q=https://wremf.com/suite&sa=D&source=editors&ust=1778180427589766&usg=AOvVaw31mX7sL4bKf7pL10qa5hAa

- https://www.google.com/url?q=https://wremf.com/agency&sa=D&source=editors&ust=1778180427589864&usg=AOvVaw24qca5rD4KqSXDy0BIKbpg

- https://www.google.com/url?q=https://wremf.com/agency&sa=D&source=editors&ust=1778180427589940&usg=AOvVaw24WchVI6ud2EED0JJGABQz

Frequently Asked Questions

What are large language model optimization services?

Large language model optimization services improve how large language models perform, retrieve information, cite sources, and represent brands in AI-generated answers. In technical contexts, these services include model fine-tuning, inference efficiency, LLMOps, RAG, vector embeddings, and model deployment. In marketing contexts, they include AI visibility tracking, prompt intelligence, source citations, Answer Engine Optimization, Generative Engine Optimization, structured data, content strategy, and competitor visibility. WREMF focuses on the brand visibility side while connecting insights t

What is Large Language Model Optimization?

Large Language Model Optimization is the process of improving how language models generate, retrieve, evaluate, cite, and present information. The term can refer to technical optimization, such as reducing latency or improving inference efficiency, or marketing optimization, such as improving AI Search visibility and citations. The best definition depends on the use case. A CTO may use LLM optimization to reduce infrastructure costs. A CMO may use LLM optimization to improve brand visibility across ChatGPT, Claude, Gemini, Perplexity, and Google AI Overviews.

Why is the definition of LLMO tricky?

The definition of LLMO is tricky because it sits between machine learning, search, content strategy, and digital marketing. Technical teams often use LLMO to mean model fine-tuning, LLMOps, inference efficiency, and RAG. Marketing teams often use LLMO to mean AI Search visibility, AI citations, Answer Engine Optimization, Generative Engine Optimization, and source consistency. Both meanings are valid. The important step is clarifying whether the goal is better AI system performance, better brand visibility, or both.

What is the difference between LLMO and SEO?

LLMO improves how AI systems understand, cite, and recommend a brand, while SEO improves visibility in search engines. SEO usually measures rankings, impressions, clicks, Core Web Vitals, and organic conversions. LLMO measures prompt visibility, AI citations, brand mentions, source consistency, AI share of voice, and recommendation presence. The two disciplines overlap because both need accessible, helpful, structured content. The main difference is that LLMO optimizes for generated answers, not only ranked links.
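
As a rough illustration of one LLMO metric, AI share of voice can be treated as a brand's share of all brand mentions across a set of tracked prompts. The sketch below assumes that definition, which is a simplification rather than a standard formula, and the prompts and brand names are hypothetical.

```python
from collections import Counter


def share_of_voice(answers: dict[str, list[str]]) -> dict[str, float]:
    """Return each brand's share of total mentions across tracked prompts.

    `answers` maps a prompt to the brands named in the AI answer.
    Assumption: a brand counts at most once per answer.
    """
    counts = Counter(
        brand for brands in answers.values() for brand in set(brands)
    )
    total = sum(counts.values())
    if total == 0:
        return {}
    return {brand: count / total for brand, count in counts.items()}


# Hypothetical tracking data: prompt -> brands mentioned in the answer.
tracked = {
    "best AI visibility tools": ["BrandA", "BrandB"],
    "how to track AI citations": ["BrandA"],
    "LLMO agency vs software": ["BrandB", "BrandC"],
}
sov = share_of_voice(tracked)
```

Here BrandA and BrandB each hold two of the five mentions and BrandC holds one. A production metric might also weight by platform, prompt value, or position in the answer.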

How is LLMO different from Answer Engine Optimization?

Answer Engine Optimization focuses on helping systems extract direct answers from content. LLMO is broader because it can include AEO, GEO, prompt tracking, AI citations, competitor visibility, RAG thinking, source consistency, and technical model performance. AEO is useful for FAQs, definitions, featured snippets, and direct answer blocks. LLMO is useful when a brand needs to understand how AI systems mention, cite, compare, and recommend it across multiple AI discovery surfaces.

How is LLMO different from Generative Engine Optimization?

Generative Engine Optimization focuses on visibility inside generative AI answers, including citations, summaries, recommendations, and brand placements. LLMO can include GEO but also covers technical LLM performance, RAG optimization, LLMOps, model fine-tuning, and inference efficiency. For marketing teams, GEO is often the most relevant part of LLMO. For engineering teams, model deployment and inference optimization may be more important. A complete strategy separates these tracks instead of mixing every task into one vague service.

Can you optimize for RAG-based large language models?

Yes, you can optimize for RAG-based large language models by improving the quality, structure, consistency, and retrievability of the sources they use. For private enterprise AI systems, this means improving knowledge bases, embeddings, chunking, metadata, reranking, and evaluation. For public AI Search visibility, this means improving owned pages, documentation, product descriptions, structured data, comparison content, and trusted third-party sources. You cannot control every retrieval system, but you can improve the source ecosystem AI systems are likely to retrieve.

What is LLMOps?

LLMOps means large language model operations. It covers the practices used to deploy, monitor, evaluate, govern, and improve large language models in production. LLMOps can include prompt management, model deployment, retrieval monitoring, hallucination testing, feedback loops, cost tracking, security and compliance, and monitoring and evaluation. LLMOps is most important for companies operating AI applications. Marketing teams may not need full LLMOps unless they also manage AI products, chatbots, or internal AI systems.

What is the difference between LLMOps and MLOps?

MLOps is the broader discipline for managing machine learning systems, while LLMOps focuses specifically on large language models. MLOps includes data pipelines, model training, model deployment, monitoring, and reliability. LLMOps adds prompt evaluation, RAG monitoring, token cost management, hallucination checks, model behavior testing, and AI safety concerns. In practice, LLMOps extends MLOps for generative AI systems. For marketing visibility, prompt tracking and source citation monitoring are usually more relevant than full LLMOps infrastructure.

What is Retrieval-Augmented Generation in LLM optimization?

Retrieval-Augmented Generation is a method where an AI system retrieves relevant external information before generating an answer. In technical LLM optimization, RAG helps enterprise AI systems use private documents, customer service content, product data, and internal knowledge bases. In marketing LLMO, RAG is useful as a source ecosystem model because public AI Search systems also depend on retrievable sources. Clear, consistent, authoritative sources make it easier for AI systems to produce accurate answers about a brand.
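
The retrieve-then-generate flow can be sketched in a few lines. This is a minimal illustration, not how any particular AI platform works: it substitutes word-overlap cosine similarity for real vector embeddings, stops at building the prompt instead of calling a model, and uses hypothetical documents and function names.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase word counts; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = tokenize(query)
    ranked = sorted(documents, key=lambda d: cosine(q, tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, passages: list[str]) -> str:
    """Place retrieved passages ahead of the question for the model."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only these sources:\n{context}\n\nQuestion: {query}"


# Hypothetical source ecosystem for a fictional brand.
docs = [
    "Acme Analytics offers prompt tracking for B2B teams.",
    "Our office is closed on public holidays.",
    "Acme Analytics pricing starts with a free trial.",
]
question = "What does Acme Analytics pricing start with?"
top = retrieve(question, docs, k=1)
prompt = build_prompt(question, top)
```

The pricing sentence is retrieved because it shares the most terms with the question. This is the practical point for marketers: a source that states the answer clearly and consistently is easier to retrieve than one that buries it.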

How do AI citations differ from brand mentions?

AI citations are source references that support an AI-generated answer, while brand mentions are references to a brand inside the response text. A brand can be mentioned without being cited, cited without being recommended, or recommended without receiving traffic. That is why LLM visibility measurement should separate citations, mentions, recommendations, and traffic attribution. Source citation tracking shows which pages influence answers. Brand mention tracking shows whether AI systems associate your company with relevant prompts.
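
To make the distinction concrete, the sketch below labels a single AI answer as mentioning a brand, citing it, both, or neither. It assumes the tracking tool already captures the answer text and its cited URLs; the brand, domain, and field names are hypothetical, and the substring match is deliberately naive.

```python
from urllib.parse import urlparse


def is_brand_domain(url: str, domain: str) -> bool:
    """True if the URL's host is the brand domain or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return host == domain or host.endswith("." + domain)


def classify_answer(
    answer_text: str, cited_urls: list[str], brand: str, domain: str
) -> dict[str, bool]:
    """Separate a brand mention (in the text) from a citation (in the sources)."""
    mentioned = brand.lower() in answer_text.lower()
    cited = any(is_brand_domain(url, domain) for url in cited_urls)
    return {"mentioned": mentioned, "cited": cited}


# Mentioned in the answer, but the cited source is a third-party site.
mention_only = classify_answer(
    "Acme Analytics is a popular option for prompt tracking.",
    ["https://reviews.example/top-tools"],
    brand="Acme Analytics",
    domain="acme.example",
)

# Cited as a source, but the brand name never appears in the answer.
citation_only = classify_answer(
    "Several tools can track prompts across AI platforms.",
    ["https://www.acme.example/docs/prompts"],
    brand="Acme Analytics",
    domain="acme.example",
)
```

Keeping the two flags separate is what lets a report show cases such as "cited but not named", which a single visibility score would hide.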

Do large language model optimization services actually improve AI visibility?

Large language model optimization services can improve AI visibility when they are tied to measurable prompts, source citations, competitor gaps, and content improvements. They should not be judged by vague claims or one-time screenshots. Effective LLMO identifies which questions buyers ask, how AI systems answer them, which sources are cited, and what changes can improve entity clarity or citation authority. No provider should guarantee AI recommendations, but disciplined measurement and source improvement can make AI visibility more manageable.

How much do LLM optimization services cost?

LLM optimization service costs vary widely because technical model optimization and marketing LLMO are different service categories. Technical LLMO can involve engineering, cloud platforms, infrastructure, model deployment, security and compliance, and custom AI development. Marketing LLMO can involve software subscriptions, audits, content briefs, managed execution, and reporting. WREMF pricing starts with Starter at €39 per month for one website and Growth at €89 per month for five websites, with Enterprise plans for unlimited websites, custom branded portals, unlimited seats, and dedicated support.

Should a B2B company hire an LLMO agency or use software?

A B2B company should use LLMO software when it has internal capacity to act on insights, and hire an agency when it needs strategy, content execution, technical audits, or reporting support. A hybrid model is often best when leadership wants measurable AI visibility but the team lacks time to turn findings into briefs, page updates, internal links, source consistency fixes, and reporting. WREMF supports software, agency, and hybrid workflows, so brands and agencies can choose the operating model that fits their team.

What should an LLMO audit include?

An LLMO audit should include prompt visibility, AI citations, competitor visibility, source consistency, entity clarity, technical crawlability, structured data readiness, content gaps, and attribution opportunities. It should identify which prompts matter most commercially and which pages or sources need improvement. A useful audit does not stop at diagnosis. It should rank actions by likely impact, effort, and business relevance. WREMF’s GEO audit and methodology are designed to turn AI visibility findings into a practical improvement plan.
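
One simple way to rank audit actions by impact, effort, and business relevance is a weighted score. The sketch below is an assumption for illustration, not WREMF's methodology: the 1 to 5 scales, the formula (impact times relevance, divided by effort), and the example actions are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AuditAction:
    name: str
    impact: int     # estimated visibility impact, 1 (low) to 5 (high)
    effort: int     # estimated effort, 1 (low) to 5 (high)
    relevance: int  # business relevance, 1 (low) to 5 (high)

    @property
    def priority(self) -> float:
        """Higher impact and relevance raise priority; higher effort lowers it."""
        return self.impact * self.relevance / self.effort


# Hypothetical findings from an audit.
actions = [
    AuditAction("Rewrite comparison pages", impact=5, effort=4, relevance=5),
    AuditAction("Add structured data to pricing page", impact=3, effort=1, relevance=4),
    AuditAction("Fix inconsistent product descriptions", impact=4, effort=2, relevance=4),
]

ranked = sorted(actions, key=lambda a: a.priority, reverse=True)
for action in ranked:
    print(f"{action.priority:5.2f}  {action.name}")
```

With these scores, the low-effort structured data fix ranks first even though the comparison page rewrite has the highest raw impact. Teams can adjust the formula, but the ranking should stay explicit so priorities can be reviewed and challenged.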

Which companies need large language model optimization services?

Companies need large language model optimization services when buyers, customers, or employees use AI systems to research, compare, summarize, or answer questions about their market. B2B SaaS companies, agencies, consultants, eCommerce stores, customer support teams, enterprise AI teams, and content marketing teams can all benefit. The right service depends on the problem. A company building AI systems may need model fine-tuning or LLMOps. A company trying to appear in AI Search may need prompt tracking, AEO, GEO, citations, and source consistency work.

Reviewed by

Rohan Singh

Cite this article

"Large Language Model Optimization Services: The Complete Guide to LLMO, AI Search Visibility, AEO, GEO, RAG, and LLM Performance" by WREMF Team, WREMF (2026). https://wremf.com/blog/large-language-model-optimization-services-the-complete-guide-to-llmo-ai-search-visibility-aeo-geo-rag-and-llm-performance