By WREMF Team · 2026-05-09 · 51 min read

Last reviewed: 2026-05-09 by Rohan Singh

Learn how enterprise AEO platforms measure and improve brand visibility across ChatGPT, Claude, Gemini, Perplexity, and Google AI Overviews using citations and prompts.

Key Takeaways

- Enterprise AEO platforms measure brand visibility across AI-generated answers, citations, and recommendations, not just traditional search rankings.

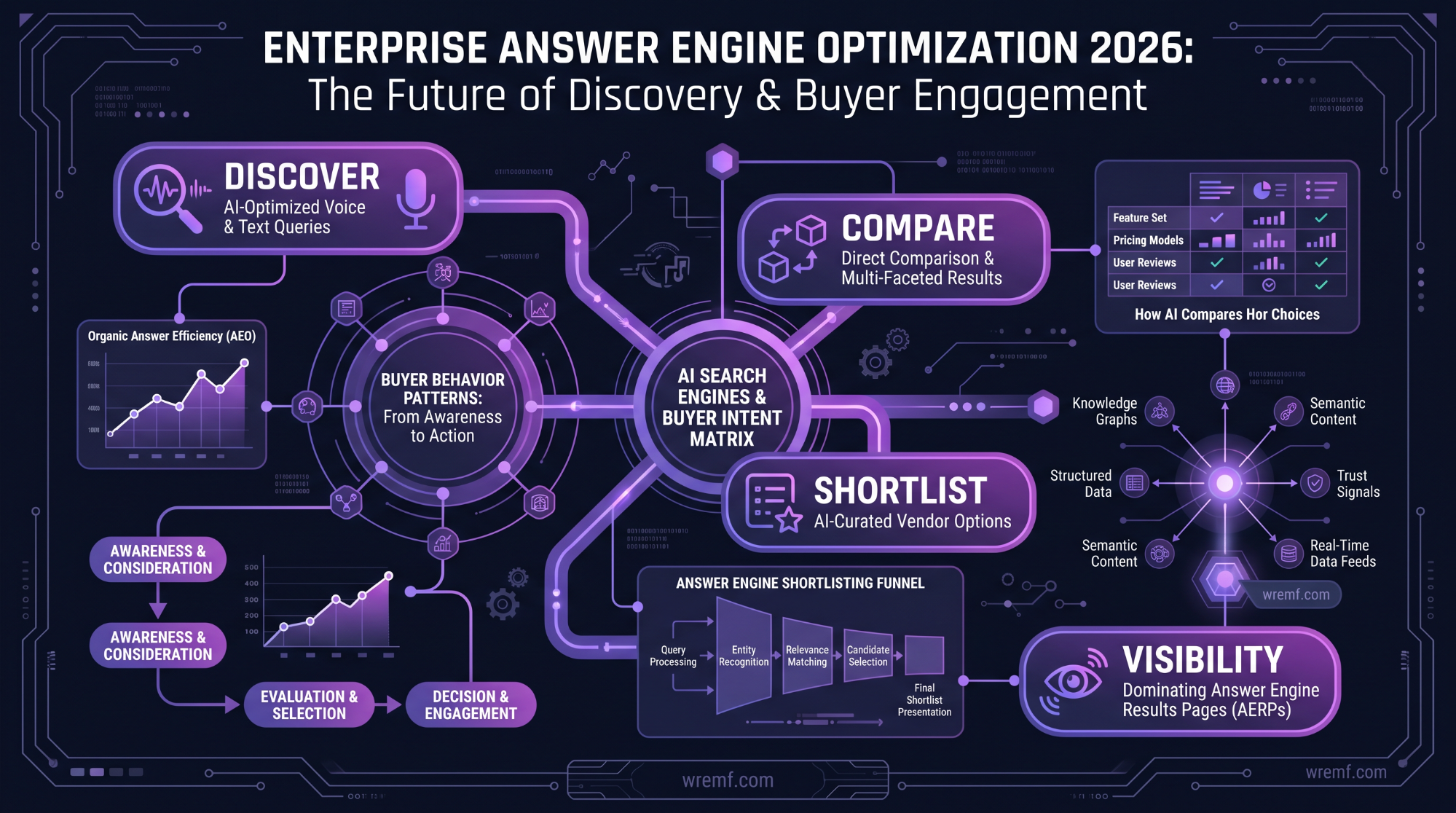

- AI search is changing B2B discovery as buyers use AI assistants to compare vendors, evaluate risks, and build shortlists before visiting websites.

- SEO, answer engine optimization, and Generative Engine Optimization work best as connected layers with technical SEO supporting answer-first structure and AI visibility measurement.

- Essential AEO metrics include prompt coverage, brand mention frequency, citation tracking, Answer Share of Voice, source consistency, and AI traffic attribution.

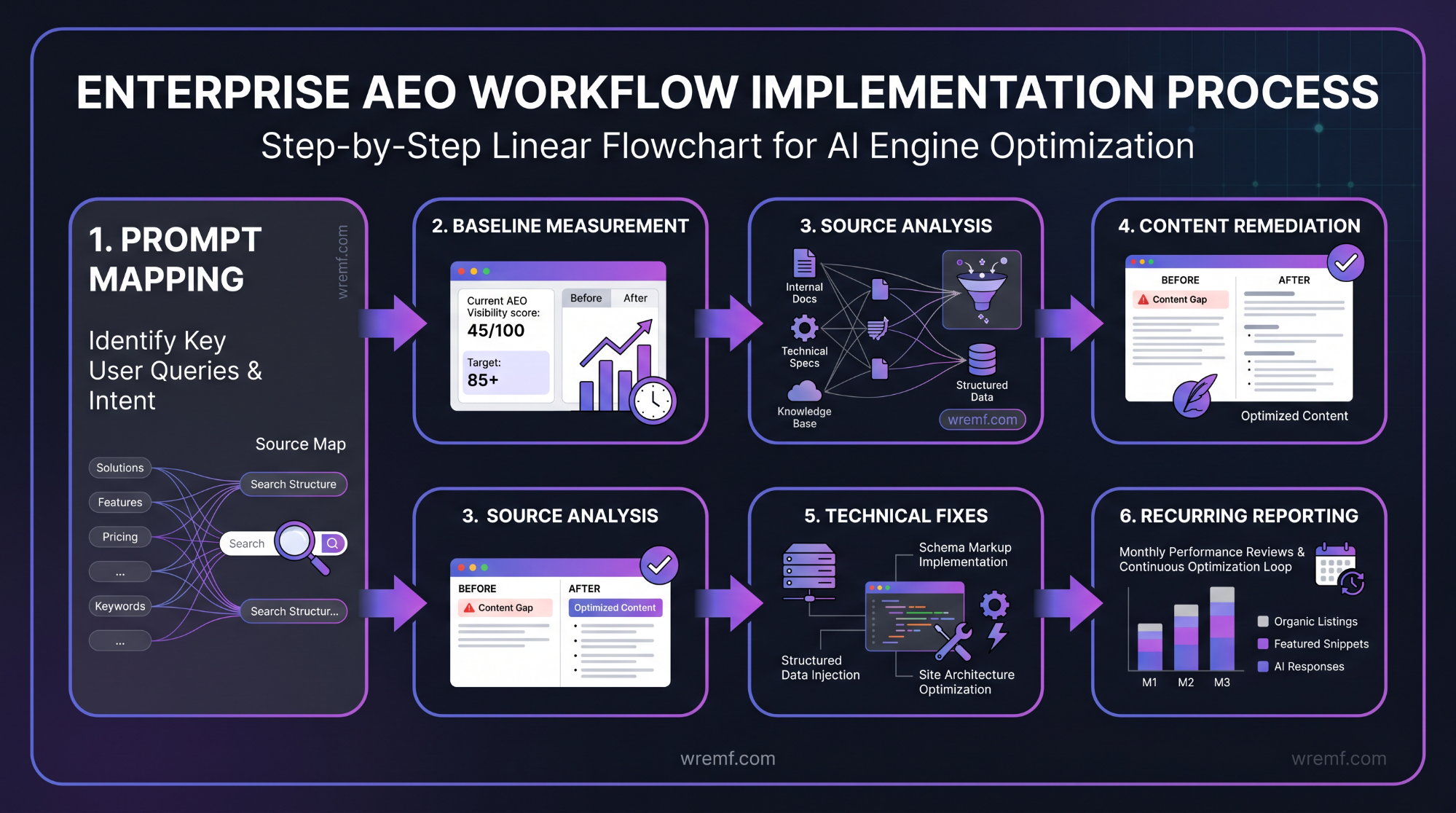

- Effective AEO implementation requires prompt mapping, citation analysis, content gap remediation, source consistency cleanup, and recurring reporting workflows.

- Answer-first content structure, technical SEO, structured data, entity clarity, and trust signals create the foundation for reliable AI visibility.

Enterprise Answer Engine Optimization Platforms: Complete Guide for AI Visibility, AEO, and GEO

Enterprise answer engine optimization platforms help large organizations measure and improve brand visibility inside AI-generated answers. Google, OpenAI, Microsoft, Anthropic, and Perplexity have pushed search toward AI answers, citations, summaries, and conversational discovery. This guide explains answer engine optimization, Generative Engine Optimization, AI visibility, prompt tracking, citation tracking, structured data, technical SEO, content strategy, and platform selection. It also shows how WREMF helps B2B teams track, improve, and prove visibility across ChatGPT, Claude, Gemini, Perplexity, Google AI Overviews, Copilot, DeepSeek, Grok, Meta AI, and Mistral. Use this guide to choose the right enterprise AEO workflow, platform model, and measurement system.

What Are Enterprise Answer Engine Optimization Platforms?

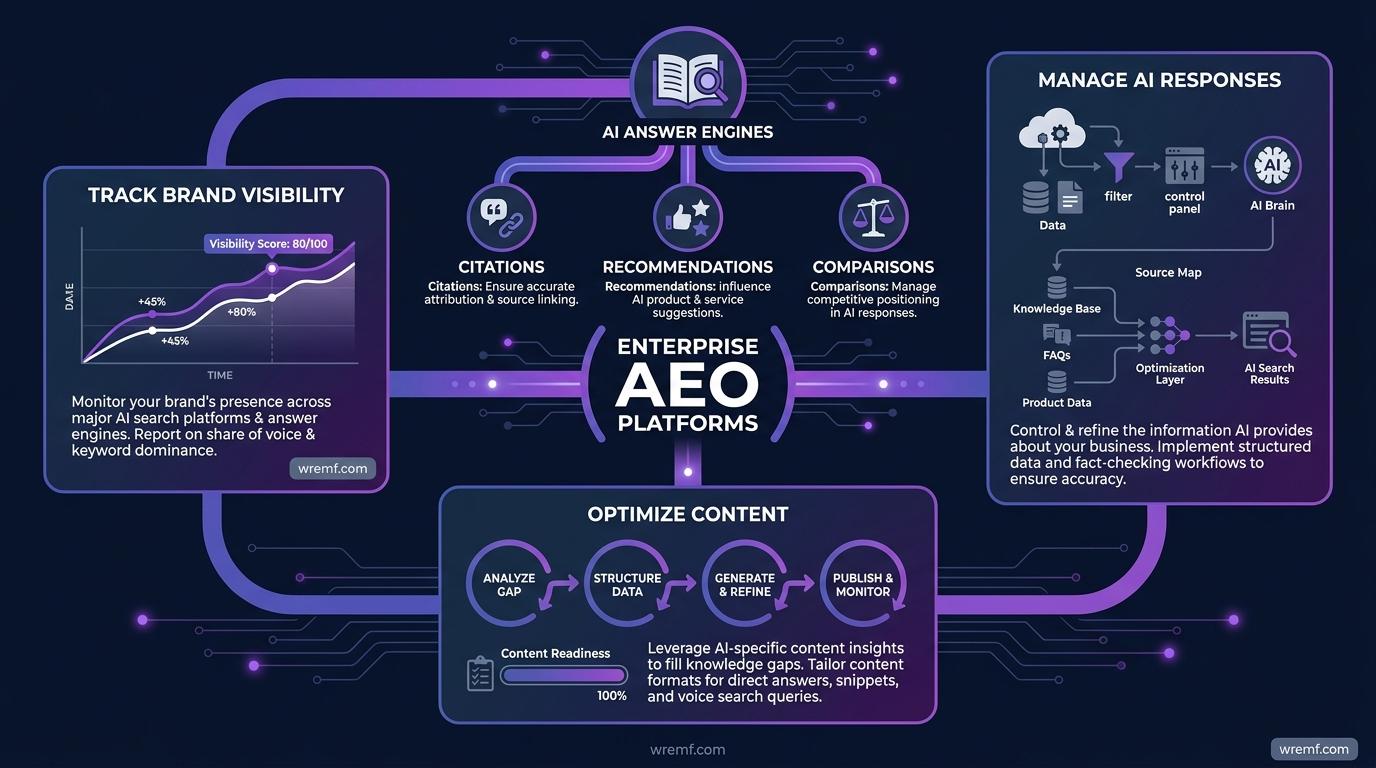

Enterprise answer engine optimization platforms are software systems that track how brands appear in AI answers, citations, recommendations, and comparisons. They help enterprise teams manage AI visibility across answer engines at scale.

Answer engine optimization is the practice of improving content, entities, technical SEO, structured data, citations, and trust signals so answer engines can understand and reference a brand accurately. Answer engine optimization matters because buyers increasingly ask AI assistants for recommendations before visiting vendor websites.

Enterprise answer engine optimization platforms are different from traditional SEO tools because they measure AI responses, brand mention frequency, citation tracking, competitor visibility, Answer Share of Voice, and AI traffic attribution. Traditional SEO tools usually focus on Google rankings, backlinks, keyword positions, and referral traffic. Enterprise AEO platforms focus on how AI systems describe a brand inside generated answers.

AI visibility is the measurable presence of a brand inside AI-generated answers, recommendations, citations, summaries, and comparisons. AI visibility matters because B2B buyers can discover, evaluate, or exclude vendors inside AI interfaces before clicking a website, filling out a form, or speaking with sales.

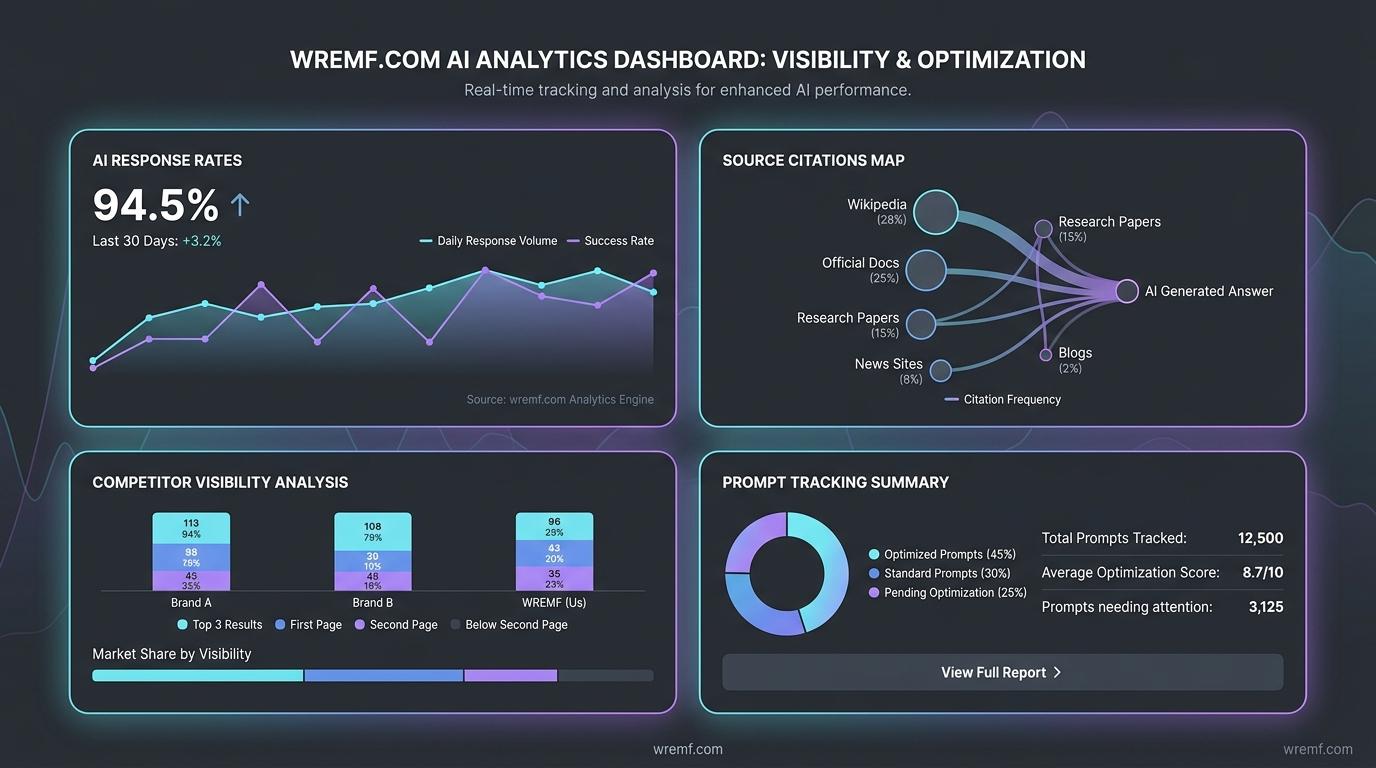

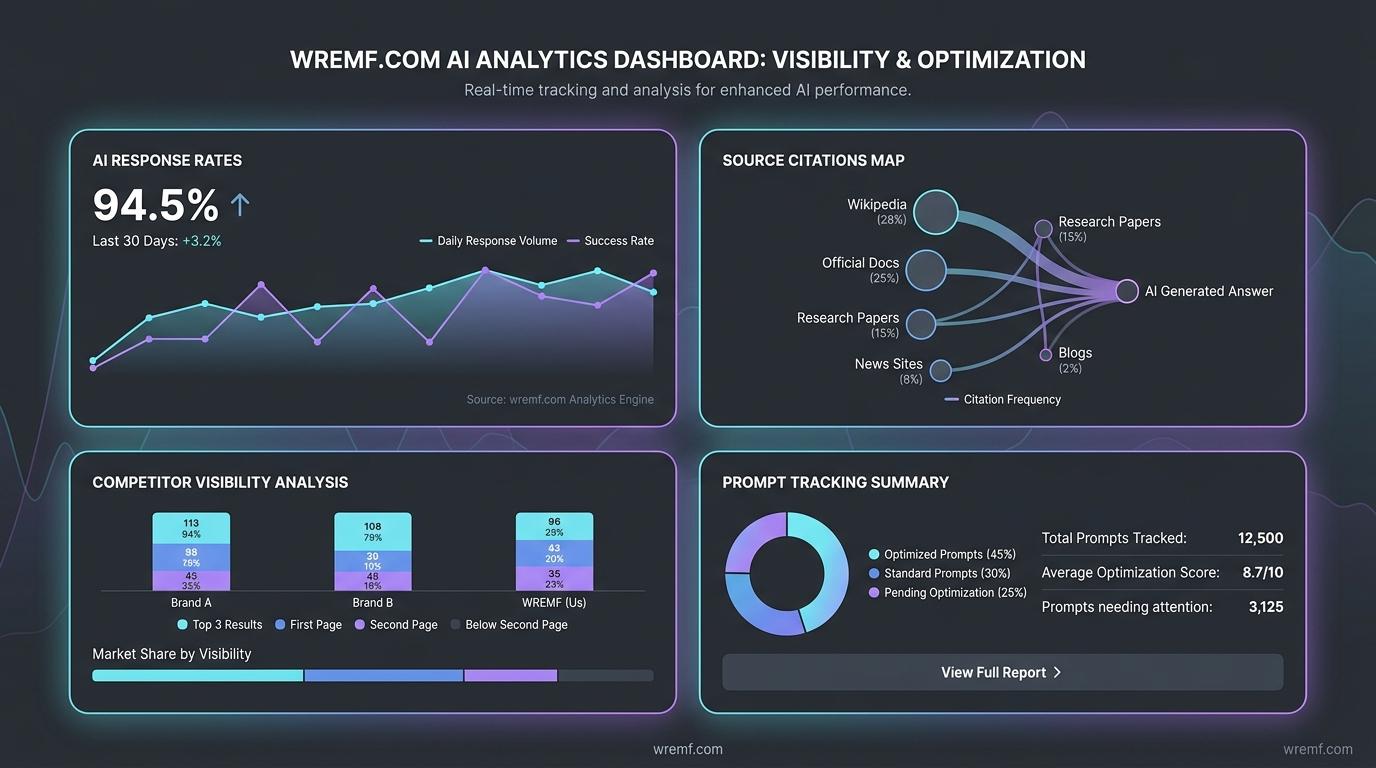

WREMF helps teams track, improve, and prove AI visibility across 10 AI engines through prompt intelligence, source citations, competitor visibility, AI share of voice, AI traffic attribution, GEO audits, content briefs, scheduled monitoring, white-label reporting, and API workflows. Teams that want a practical platform view can explore the WREMF platform suite as a starting point.

DID YOU KNOW: OpenAI introduced ChatGPT search on October 31, 2024 and described it as a way to get timely answers with links to relevant web sources through a natural language interface, which shows why citations now matter inside AI search.

KEY TAKEAWAY: Enterprise answer engine optimization platforms help brands measure and improve visibility across answer engines, not just traditional search results.

The next step is understanding why answer engine optimization has become a core enterprise search priority.

Why Answer Engine Optimization Matters for Enterprises in 2026

Answer engine optimization matters because AI search changes how buyers discover, compare, and shortlist brands. Enterprise teams need visibility inside AI responses, citations, summaries, recommendations, and search engines.

AI search is search behavior inside AI assistants, AI search engines, conversational platforms, AI interfaces, smart speakers, and generative engines. AI search matters because the user may receive a synthesized answer before deciding whether to visit a traditional website.

Google AI Overviews are AI-generated search experiences that summarize information and link to supporting web sources. Google’s official guidance on AI features and your website explains AI Overviews and AI Mode from a site owner perspective, which matters because enterprise content now has to support both classic search and AI-generated answers.

AI assistants such as ChatGPT, Claude, Gemini, Perplexity, Bing Copilot, and Microsoft Copilot Chat are becoming discovery environments. In its Microsoft 365 Copilot Chat overview, Microsoft states that Copilot Chat is AI chat grounded in data from the web and powered by large language models. This means enterprise buyers can use AI systems to compare software, summarize vendor claims, check implementation risks, and evaluate alternatives.

In real B2B buying journeys, the first brand interaction may not happen on a landing page. A buyer may ask, “What are the best enterprise answer engine optimization platforms?” or “Which AEO tools track ChatGPT and Google AI Overviews?” If your brand is absent from those AI responses, competitors may shape the buyer’s shortlist before your website is visited.

AI visibility is both a measurement problem and a source ecosystem problem. Marketing teams must know whether AI systems mention the brand, which sources AI systems cite, whether competitor names appear more often, whether source consistency is strong, and whether AI-driven referral traffic appears in analytics.

IMPORTANT: Treat AI search visibility as an enterprise discovery layer, not as a replacement for SEO, paid media, partner marketing, or sales enablement.

KEY TAKEAWAY: Answer engine optimization matters because enterprise discovery now happens across AI responses, citations, summaries, recommendations, voice assistants, smart speakers, and traditional search engines.

To build the right strategy, teams must understand how SEO, AEO, and Generative Engine Optimization fit together.

SEO vs AEO vs Generative Engine Optimization

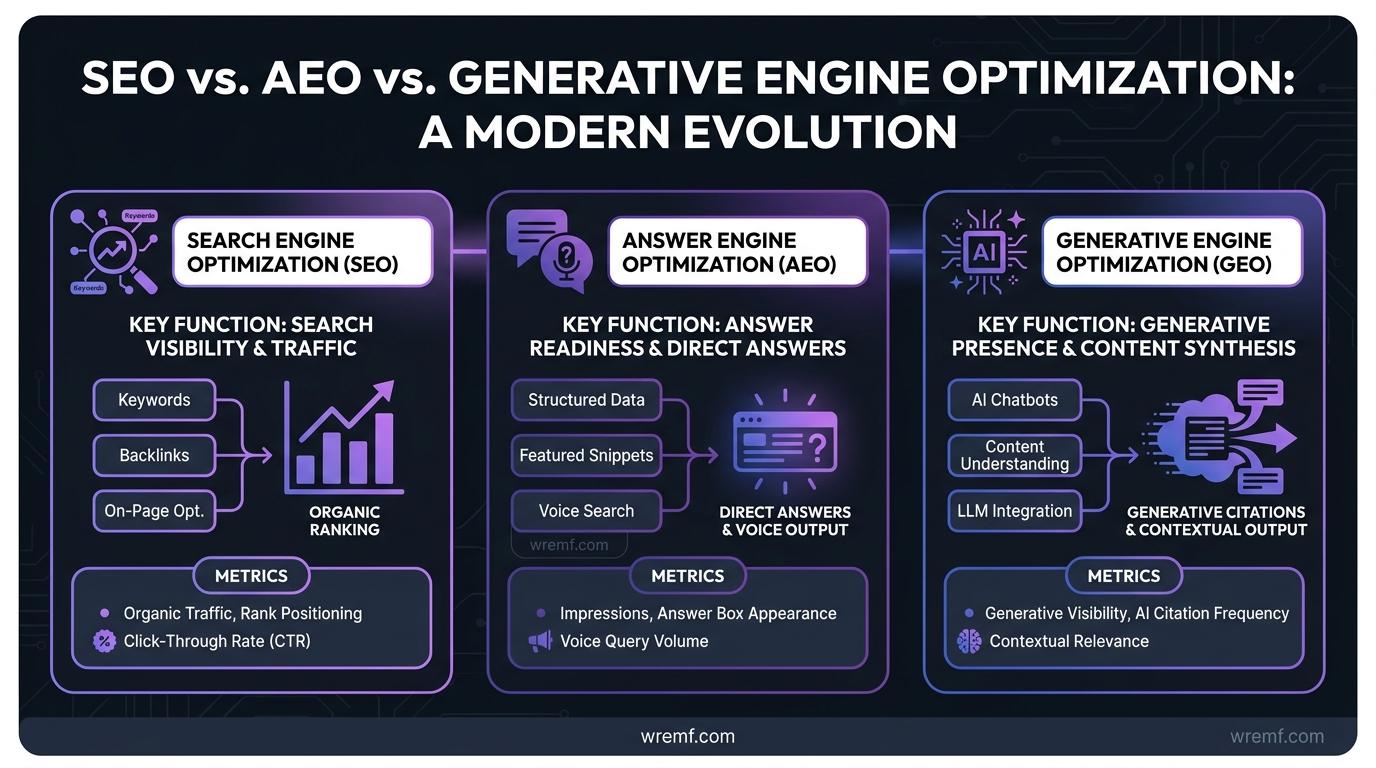

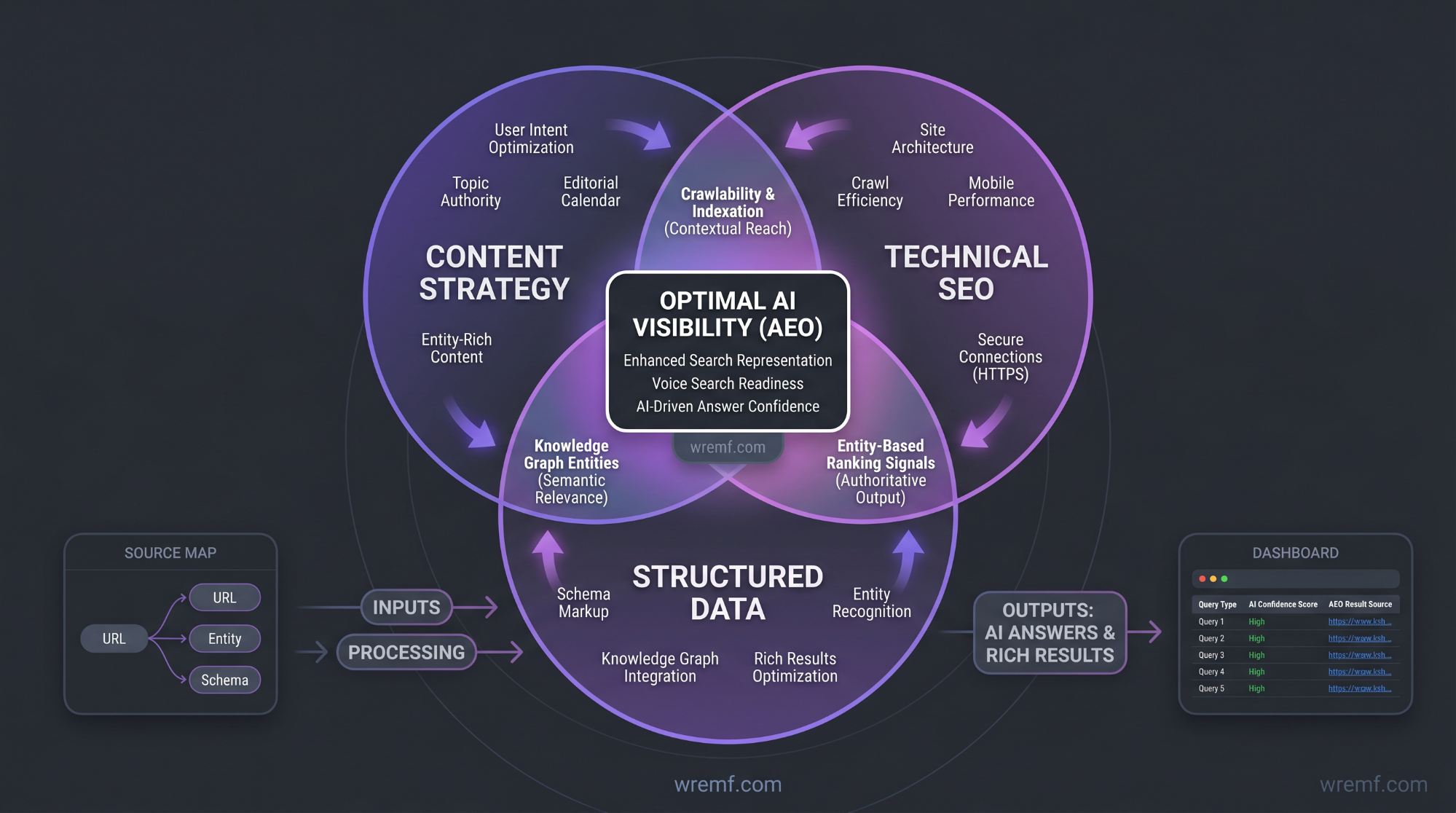

SEO, AEO, and Generative Engine Optimization overlap, but they measure different outcomes. SEO improves search engine visibility, AEO improves answer readiness, and Generative Engine Optimization improves presence inside generative engines.

SEO is the practice of improving crawlability, indexability, relevance, content quality, links, and technical SEO so search engines can rank pages. Google Search Central explains in its guidance on helpful, reliable, people-first content that Google’s ranking systems are designed to prioritize helpful, reliable information created for people.

Answer engine optimization focuses on clear answers, structured content, entity clarity, citation readiness, and answer-first content. Answer engine optimization helps answer engines extract direct answers from pages, FAQs, product pages, comparison pages, local pages, documentation, and knowledge-base content.

Generative Engine Optimization is the practice of improving how generative engines understand, summarize, cite, and recommend a brand. Generative Engine Optimization includes prompt tracking, AI citations, citation tracking, source consistency, brand authority, entity optimization, content strategy, and AI visibility tracking.

The key difference between SEO and Generative Engine Optimization (GEO) is the output being optimized. SEO usually measures Google rankings, impressions, clicks, and referral traffic. Generative Engine Optimization measures AI visibility, brand mentions, citations, competitor presence, source consistency, and recommendation visibility inside AI responses.

| Discipline | Best For | What It Measures | What It Misses | Example Enterprise Metric |
|---|---|---|---|---|
| SEO | Ranking in Google and other search engines | Google rankings, organic clicks, impressions, backlinks, technical SEO health | AI responses, source citations, brand recommendations, prompt-level visibility | Organic clicks from Google Search Console |
| Answer engine optimization | Being selected for answer blocks, featured snippet surfaces, and direct answers | Featured snippet eligibility, answer-first structure, structured data, schema implementation, FAQs, entity clarity | Cross-engine AI visibility if measured alone | Share of answer-ready pages by topic |
| Generative Engine Optimization | Being mentioned, cited, and recommended by generative engines | AI visibility, citation tracking, prompt coverage, Answer Share of Voice, source consistency | Traditional crawl diagnostics if used alone | Brand mention frequency across ChatGPT, Claude, Gemini, Perplexity, and Copilot |
| Enterprise AEO platform | Managing SEO, AEO, and GEO workflows at scale | Prompts, citations, competitors, source consistency, AI traffic attribution, reporting | Execution quality if teams do not act on insights | AI visibility score by market, product, or topic |

The best enterprise approach is not to choose SEO, AEO, or Generative Engine Optimization in isolation. It is to use SEO as the technical and authority foundation, answer engine optimization as the answer-readiness layer, and Generative Engine Optimization as the AI visibility measurement layer.

A common implementation mistake is treating AEO as a content creation project only. In practical AI visibility audits, teams frequently discover that the real blocker is weak brand entities, missing trust signals, inconsistent source descriptions, poor schema markup, thin author pages, or technical SEO issues that prevent content from being understood.

KEY TAKEAWAY: SEO, answer engine optimization, and Generative Engine Optimization work best as connected layers, with technical SEO supporting answer-first structure and AI visibility measurement.

Once the disciplines are clear, the next question is what enterprise AEO platforms should actually measure.

What Should Enterprise AEO Platforms Measure?

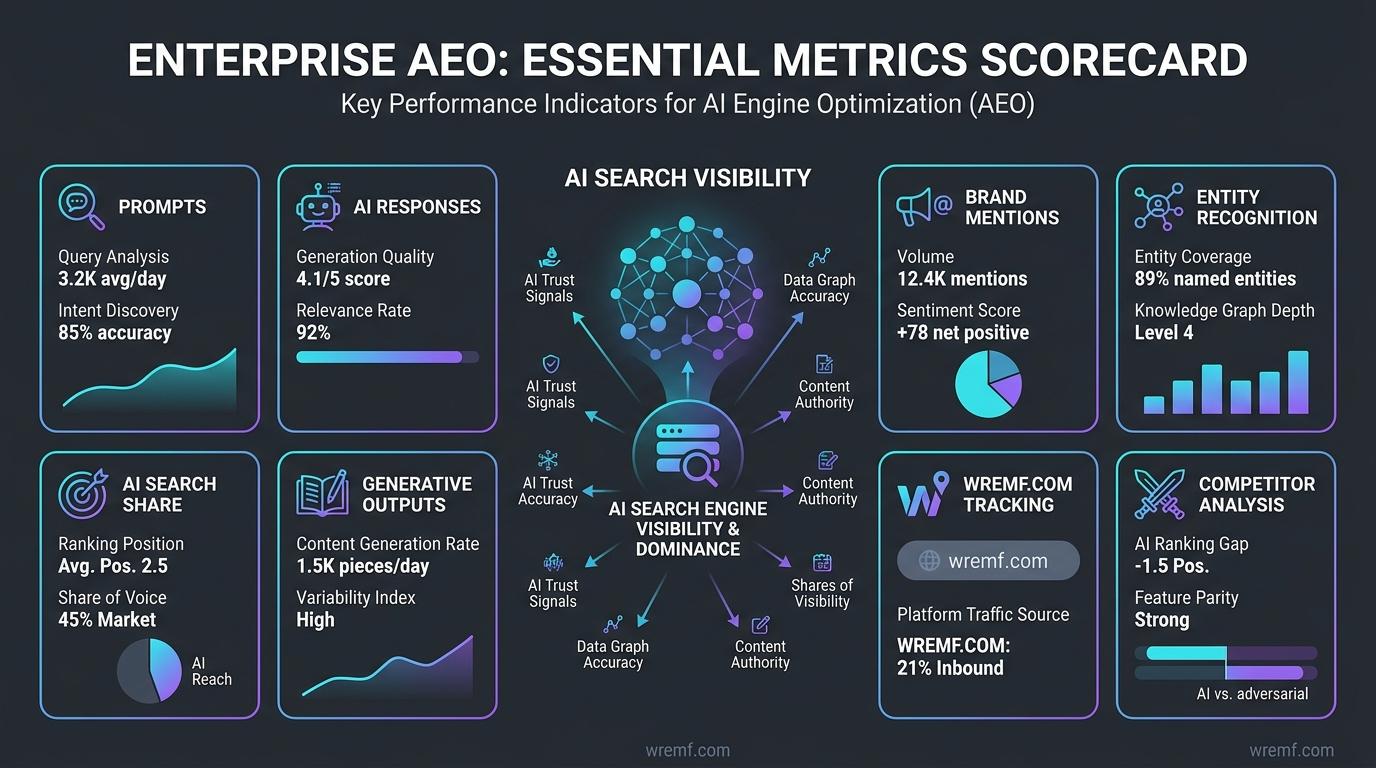

Enterprise AEO platforms should measure prompts, AI responses, brand mentions, source citations, competitor visibility, Answer Share of Voice, AI traffic attribution, source consistency, and implementation progress. Rankings alone are not enough for AI search visibility.

Prompt tracking is the process of monitoring realistic questions that buyers ask AI systems. Prompt tracking shows whether a brand appears for definition prompts, comparison prompts, service prompts, pricing prompts, implementation prompts, risk prompts, and buying-stage prompts across AI search engines.

Brand mention is a reference to a company, product, service, executive, category, or brand entity inside an AI answer. Brand mention data matters because a company can be discussed by AI assistants without receiving a click, referral traffic, or citation.

Citation tracking is the process of identifying which sources AI systems cite when answering prompts about a market, product category, competitor set, or brand. Citation tracking matters because cited sources can shape AI responses even when the brand’s own website is not cited.

Source citations are the pages, documents, profiles, articles, directories, reviews, or third-party sources that AI systems use to support an answer. Source citations matter because enterprise teams need to know which sources influence AI visibility and which sources create inaccurate or incomplete brand context.

Answer Share of Voice is the percentage of AI answer visibility a brand receives compared with competitors across a defined prompt set. If 100 monitored AI answer mentions include 25 mentions of your brand, your Answer Share of Voice is 25 percent for that prompt group.
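The calculation above can be sketched in a few lines of Python. This is a minimal illustration rather than any platform's actual scoring method: the function name `answer_share_of_voice`, the brand names, and the mention counts are hypothetical, and the sketch assumes mentions have already been counted per brand for one prompt group.

```python
from collections import Counter


def answer_share_of_voice(mentions: Counter, brand: str) -> float:
    """Return the brand's share of all counted mentions, as a percentage.

    `mentions` maps each brand in the competitor set to how many times it
    was mentioned across the monitored AI answers for one prompt group.
    """
    total = sum(mentions.values())
    if total == 0:
        return 0.0  # no mentions recorded, so there is no share to report
    return 100.0 * mentions[brand] / total


# Hypothetical counts: 100 monitored mentions, 25 of them for "YourBrand".
mentions = Counter({"YourBrand": 25, "CompetitorA": 45, "CompetitorB": 30})
print(answer_share_of_voice(mentions, "YourBrand"))  # 25.0
```

Because the denominator is the sum across the whole competitor set, the result only stays comparable over time if the same competitors and prompts are tracked in every run.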

AI traffic attribution connects visits from AI assistants, answer engines, and AI search engines to analytics data. AI traffic attribution matters because it shows measurable traffic, engagement, and conversion patterns from AI tools, even though referral traffic cannot capture AI influence that happens without a click.
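One simple way to approach attribution is to classify the referrer of each visit. The sketch below assumes referrer URLs are available from an analytics export. The hostname list, the function names `classify_referrer` and `count_ai_visits`, and the sample data are illustrative assumptions, not a complete or authoritative mapping.

```python
from collections import Counter
from typing import Iterable, Optional
from urllib.parse import urlparse

# Assumed referrer hostnames, for illustration only. Verify them against your
# own analytics data: AI tools can change domains or send no referrer at all.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
    "claude.ai": "Claude",
}


def classify_referrer(referrer_url: str) -> Optional[str]:
    """Return the AI engine name for a referrer URL, or None if unrecognized."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[len("www."):]
    return AI_REFERRER_HOSTS.get(host)


def count_ai_visits(referrers: Iterable[str]) -> Counter:
    """Count visits per AI engine, ignoring referrers that are not recognized."""
    engines = (classify_referrer(url) for url in referrers)
    return Counter(engine for engine in engines if engine is not None)


# Hypothetical referrer values as they might appear in an analytics export.
sample_referrers = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=enterprise+aeo+platforms",
    "https://www.google.com/",
    "https://chatgpt.com/",
    "",
]
print(count_ai_visits(sample_referrers))
```

Visits with an empty or unrecognized referrer are left out of the count, which is consistent with the limitation noted above: some AI influence never shows up as a referral.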

| Metric | What It Shows | Why It Matters | Common Limitation |
|---|---|---|---|
| Prompt coverage | Which prompts are tracked by topic, funnel stage, market, persona, and product | Prevents random manual testing | Poor prompt design can distort results |
| AI visibility | Whether the brand appears in AI responses | Shows answer presence across AI systems | Mentions can be positive, neutral, or negative |
| Brand mention frequency | How often the brand appears | Helps track awareness inside AI answers | Does not prove recommendation quality alone |
| AI engine citation frequency | Which sources are cited by AI systems | Shows source influence and content gaps | Citations can shift by engine and query |
| Answer Share of Voice | Brand visibility compared with competitors | Helps leadership understand relative presence | Requires a stable competitor set |
| Source consistency | Whether cited sources describe the brand accurately | Reduces contradictory AI responses | Third-party data can be slow to correct |
| Referral traffic | Visits from AI tools or answer engines | Connects AI visibility to web analytics | Some AI influence happens without clicks |
| Action completion | Whether recommendations are implemented | Turns reporting into execution | Requires ownership and workflow discipline |

Traditional metrics still matter. Google rankings, organic clicks, link building outcomes, editorial links, indexed pages, conversions, and referral traffic help teams understand demand and authority. Enterprise answer engine optimization platforms add another layer by showing how AI systems describe and cite the brand.

WREMF’s AI visibility methodology connects prompts, citations, competitors, source consistency, and attribution into one repeatable system. This helps enterprise teams build a defensible measurement model instead of relying on screenshots from AI interfaces.

TIP: Start with 50 to 200 high-intent search prompts across your most important categories, then expand once reporting and ownership are stable.

KEY TAKEAWAY: Enterprise AEO measurement should combine AI visibility, citations, prompts, competitors, source consistency, referral traffic, and action tracking rather than relying on rankings alone.

The next step is choosing platform features that support those metrics at enterprise scale.

Essential Features of Enterprise Answer Engine Optimization Platforms

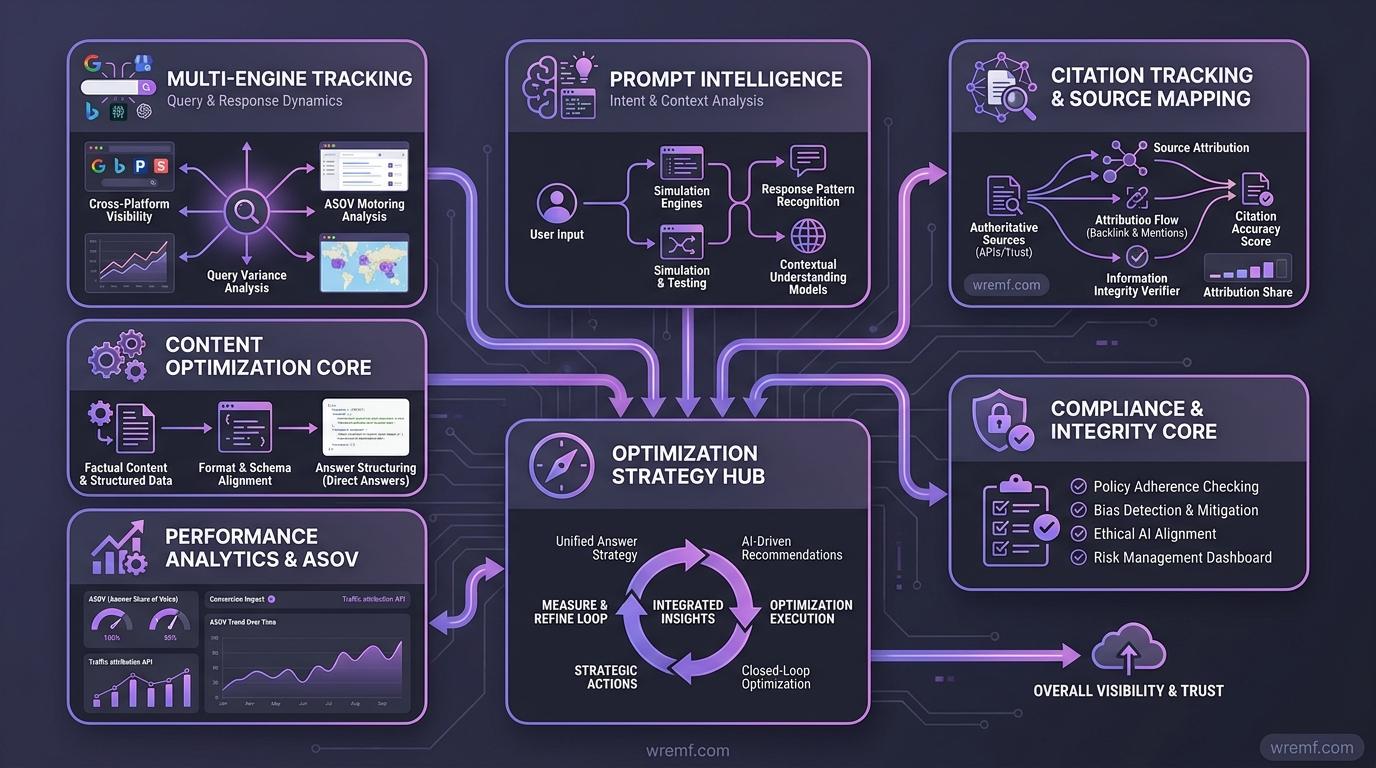

Enterprise answer engine optimization platforms need multi-engine tracking, prompt intelligence, citation tracking, competitor analysis, technical audits, content workflows, integrations, reporting, and governance. The right feature set depends on whether the team needs software, services, or both.

AEO platforms are tools that help teams optimize for answer engines, AI search engines, featured snippet surfaces, voice assistants, smart speakers, AI interfaces, and generative engines. AEO platforms matter because enterprise teams need repeatable workflows, not one-off manual searches.

A strong enterprise AEO platform should include these feature categories:

- AI visibility tracking across ChatGPT, Claude, Gemini, Perplexity, Google AI Overviews, Copilot, DeepSeek, Grok, Meta AI, Mistral, and other AI discovery surfaces

- Prompt intelligence for search prompts, buying prompts, comparison prompts, support prompts, local pages, industry prompts, and category prompts

- Source citation tracking for cited pages, cited publishers, AI engine citation frequency, citation gaps, and citation quality

- Competitive landscape analysis for competitor mentions, recommendation patterns, AI answer mentions, and Answer Share of Voice

- Technical SEO and GEO audits for crawlability, rendering, schema implementation, structured data, schema optimization, entity clarity, internal linking, canonicals, and page extraction

- Content workflows for content strategy, content creation, content templates, answer-optimized content creation, content gap remediation, topical authority development, entity-based content, and answer-first content

- Reporting workflows for leadership dashboards, agency reporting, client portals, white-label reports, Looker Studio-style dashboards, and stakeholder summaries

- Integrations for API access, MCP, CRM integration, marketing automation, HubSpot Marketing Hub, analytics, data warehouses, and agentic workflows

- Governance controls for seats, permissions, BYOK, client separation, reporting cadence, and enterprise access management

AI visibility tracking is the ongoing monitoring of where and how a brand appears across AI systems. AI visibility tracking matters because manual testing cannot reliably track prompt changes, AI responses, citations, and competitor visibility across multiple answer engines.

AEO tracking tools such as Peec AI, Profound, Rankscale, SE Visible, AEO Vision, AEO Search Grader, and other market options may fit different use cases. Enterprise teams should evaluate AEO tracking tools by engine coverage, source citation depth, competitor visibility, reporting quality, integrations, and execution support rather than only by brand awareness.

AI citations are source references used by AI systems to support or expand an answer. AI citations matter because cited third-party sources can influence how AI systems describe brand entities, pricing, features, comparisons, risks, and recommendations.

If you want to see how AI engines currently describe a brand before building a full workflow, review a sample AI visibility report and compare the prompts, citations, competitors, and recommended actions.

KEY TAKEAWAY: The best enterprise AEO platforms combine measurement, diagnostics, content workflows, integrations, governance, and reporting in one operating system.

After feature selection, teams need to understand how these platforms actually improve AI visibility.

How Enterprise AEO Platforms Improve AI Visibility

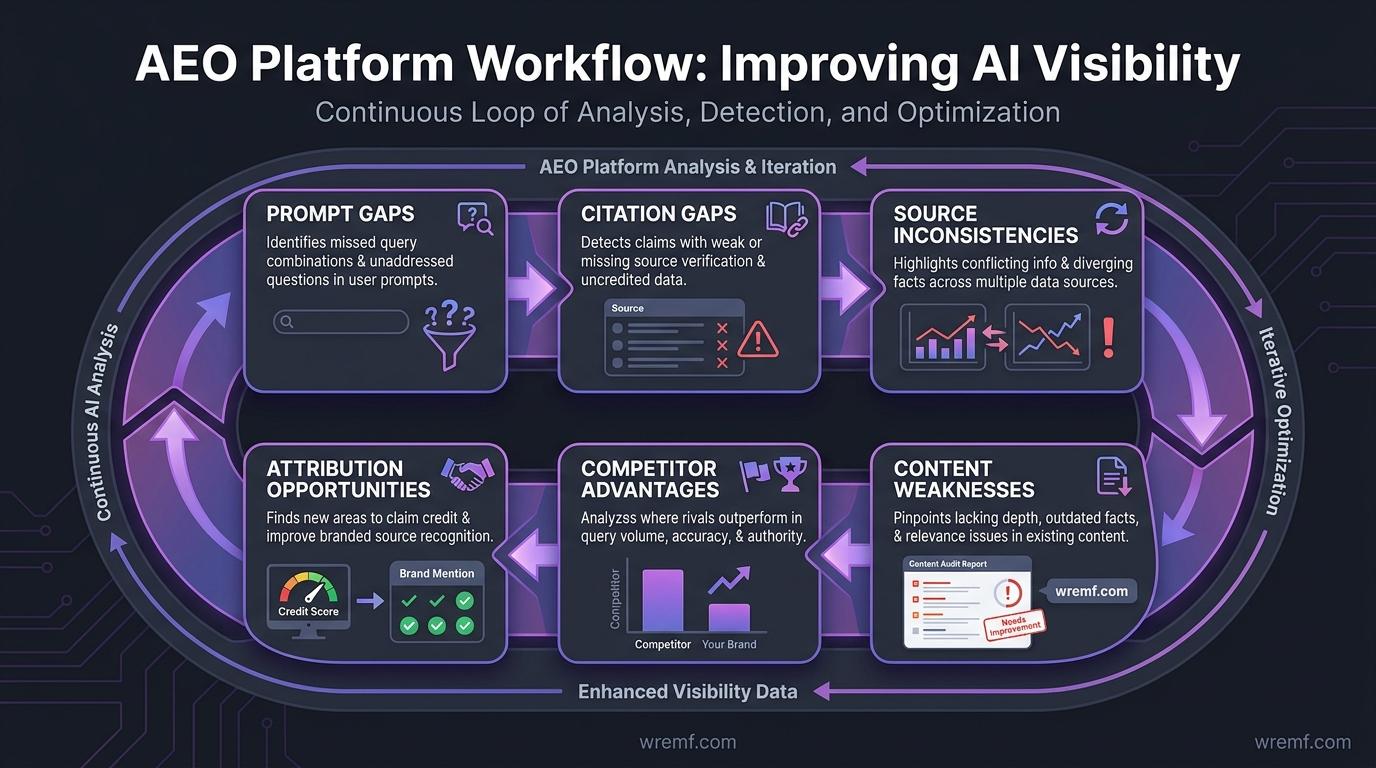

Enterprise AEO platforms improve AI visibility by finding prompt gaps, citation gaps, source inconsistencies, content weaknesses, competitor advantages, and attribution opportunities. Improvement comes from repeated measurement and targeted action.

AI search visibility is the degree to which a brand appears in AI search answers, citations, recommendations, and summaries. AI search visibility matters because buyers can discover, compare, or exclude vendors inside AI responses before entering a traditional website journey.

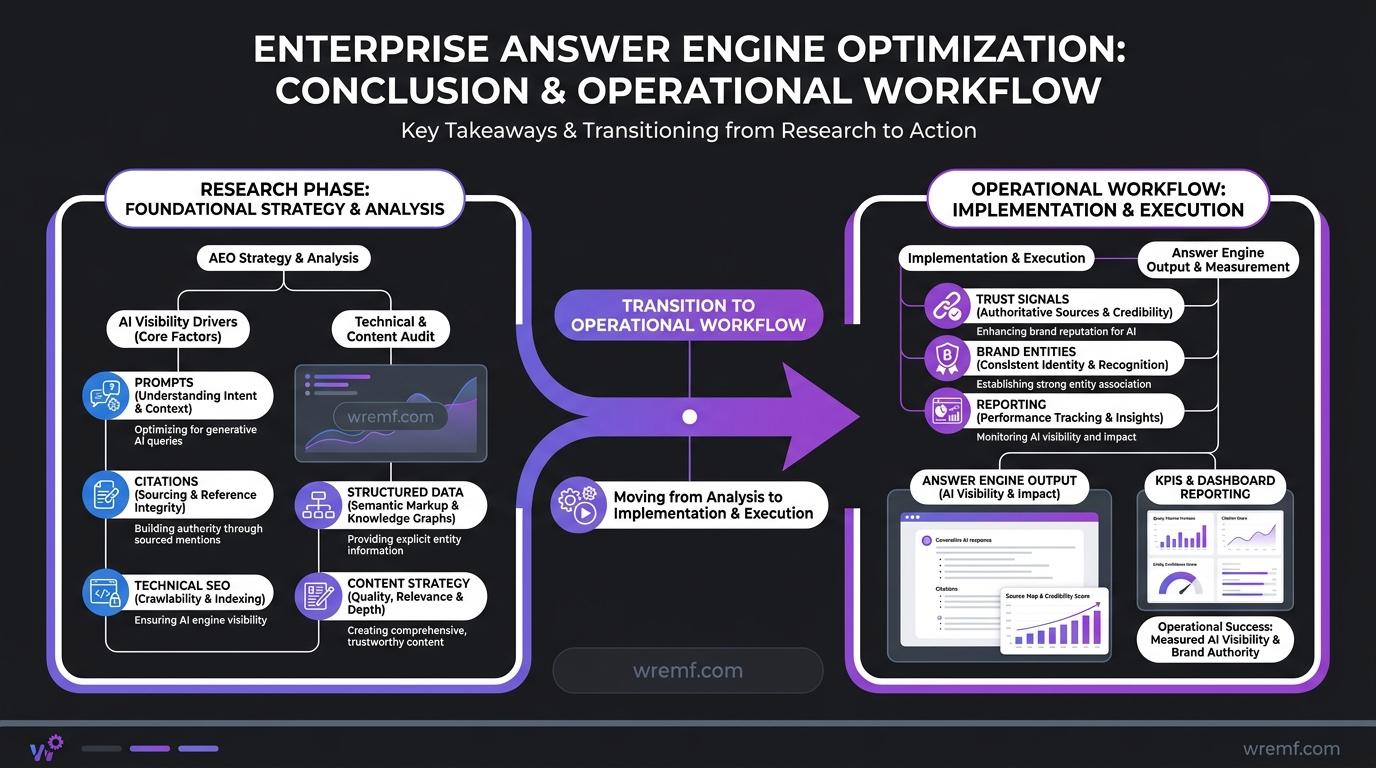

The most effective way to improve AI search visibility is to connect five workflows. First, prompt mapping defines the questions real buyers ask across awareness, comparison, purchase, and implementation stages. Second, citation analysis identifies which sources answer engines cite for each topic and where the brand is missing.

Third, content gap remediation turns missing or weak answers into improved pages, guides, FAQs, comparison content, and answer blocks. Fourth, source consistency cleanup corrects inaccurate brand entities, descriptions, NAP (name, address, phone) details, category labels, product names, leadership details, and claims across owned and third-party sources. Fifth, testing and reporting show whether AI responses, citations, brand mentions, referral traffic, and Answer Share of Voice change after implementation.
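The fourth workflow, source consistency cleanup, starts with finding where sources disagree. The following is a minimal sketch of that comparison, assuming brand details have already been collected from each source into simple field/value records. The source names, fields, values, and function names are hypothetical.

```python
from collections import defaultdict


def normalize(value: str) -> str:
    """Lowercase and collapse whitespace so trivial differences are ignored."""
    return " ".join(value.casefold().split())


def find_inconsistencies(profiles: dict) -> dict:
    """Return the fields whose values differ between sources.

    `profiles` maps a source name to a {field: value} dict. The result maps
    each conflicting field to {normalized value: [sources using that value]}.
    """
    values_by_field = defaultdict(lambda: defaultdict(list))
    for source, fields in profiles.items():
        for field, value in fields.items():
            values_by_field[field][normalize(value)].append(source)
    return {
        field: {value: sorted(sources) for value, sources in variants.items()}
        for field, variants in values_by_field.items()
        if len(variants) > 1  # more than one distinct value means a conflict
    }


# Hypothetical brand details gathered from owned and third-party sources.
profiles = {
    "website": {"name": "Example Corp", "category": "AEO platform", "phone": "+1 555 0100"},
    "directory": {"name": "Example  corp", "category": "SEO agency", "phone": "+1 555 0100"},
    "review_site": {"name": "Example Corp", "category": "AEO platform"},
}
print(find_inconsistencies(profiles))
```

In this sample only `category` is reported, because the name differs only by case and spacing. Real cleanup work would likely need fuzzier matching, which this sketch does not attempt.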

AI systems rely on content, retrieval, ranking, summarization, and source selection. Google’s official guide to structured data in Google Search explains that Google uses structured data to understand page content and gather information about the web and the world, including companies and other entities in markup.

This is why entity relationships, schema markup, author pages, organization details, NAP details, and Knowledge Graphs can support clarity. Structured data does not guarantee inclusion in answer engines, but it helps search engines and AI systems interpret the page with less ambiguity.

AI visibility is the measurable presence of a brand inside AI-generated answers, recommendations, citations, and summaries. AI visibility matters because enterprise buyers often use AI assistants to compare vendors before speaking with sales teams, reading ads, or visiting multiple websites.

In real-world reporting, teams usually struggle when AI visibility data is not connected to action. A dashboard that shows poor visibility is useful, but a workflow that identifies which search prompts to track, which sources to improve, which content briefs to create, and which technical SEO issues to fix is more valuable.

KEY TAKEAWAY: Enterprise AEO platforms improve AI visibility by turning prompts, citations, sources, competitors, and content gaps into measurable action plans.

The next layer is the content and technical foundation that makes those action plans work.

Content Strategy, Technical SEO, and Structured Data for AEO

AEO works best when content strategy, technical SEO, structured data, and entity optimization are aligned. Answer engines need clear answers, crawlable pages, trusted sources, and consistent brand entities before they can cite or recommend a company reliably.

Content strategy is the plan for creating, updating, and connecting content around buyer needs, search intent, entity relationships, and business goals. Content strategy matters for AEO because answer engines need clear topic coverage and direct answers across the buyer journey.

Content creation is the process of producing pages, articles, landing pages, documentation, comparison guides, FAQs, and support content that answer buyer questions. Content creation matters because AI systems need accurate, complete, and accessible source material to summarize or cite.

Answer-first content is content that gives a direct answer before expanding into context, evidence, examples, and next steps. Answer-first content matters because search engines, AI assistants, voice assistants, and smart speakers can extract concise responses more easily from clearly structured pages.

Answer-first structure is the formatting pattern of placing a direct answer at the start of a section, then supporting it with details, sources, examples, and recommendations. Answer-first structure improves readability and makes content easier to quote. Answer-first structure also supports featured snippet targeting because concise answer blocks help search systems identify extractable information.

Technical SEO is the practice of making websites crawlable, indexable, fast, accessible, and understandable for search engines. Technical SEO matters for answer engine optimization because AI systems and retrieval pipelines often depend on content that can be discovered, parsed, rendered, and interpreted.

Structured data is machine-readable markup that describes page entities, content types, authors, products, organizations, FAQs, breadcrumbs, reviews, and other attributes. Structured data and schema help search engines understand content, but schema implementation does not guarantee AI inclusion, rich results, or ranking gains.

Schema markup is the code that communicates structured information about a page to search systems. Schema markup supports entity clarity when it accurately describes organizations, products, authors, breadcrumbs, articles, FAQs, reviews, and services.
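As an illustration of what such markup can look like, the sketch below builds a schema.org `Organization` description and wraps it in a JSON-LD script tag. The company name, URLs, and description are placeholders, and the helper `to_json_ld_script` is a hypothetical name. Which properties a real page needs depends on the page type and on what the markup can accurately describe.

```python
import json

# Placeholder organization details; replace with values that match the
# visible page content and the brand's other public profiles.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Corp",
    "url": "https://www.example.com",
    "description": "Example Corp provides AI visibility tracking for B2B teams.",
    "sameAs": [
        "https://www.linkedin.com/company/example-corp",
    ],
}


def to_json_ld_script(data: dict) -> str:
    """Serialize a dict as JSON-LD inside a script tag for an HTML page."""
    # Escape "</" so a string value can never close the script tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


print(to_json_ld_script(organization))
```

Generating the markup from one source of truth, rather than hand-editing it per page, is one way to keep names and descriptions consistent. Generated markup should still be checked with a structured data validator before deployment.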

Knowledge Graphs are structured representations of entities and relationships between people, organizations, places, products, and concepts. Knowledge Graphs matter because entity clarity helps AI systems understand what a brand is, what it offers, who it serves, and how it differs from competitors.

Brand entities are the structured signals that define a company, product, founder, executive, location, category, service, and market relationship. Brand entities matter because AI systems can confuse companies with similar names, outdated descriptions, unclear product categories, or inconsistent third-party data.

A practical AEO content workflow should include these actions:

- Define the target prompt cluster before writing.

- Write a direct answer within the first 40 words of each major section.

- Use answer-first structure consistently across H2 sections.

- Add source-backed facts close to claims.

- Use tables when comparing 3 or more options, workflows, tools, or metrics.

- Add author pages, company pages, and trust signals for credibility.

- Apply schema markup where supported and valid.

- Check crawlability, rendering, internal links, canonicals, and page speed.

- Update old pages when AI responses expose missing or outdated information.

- Use content gap remediation to close missing prompt, citation, and entity coverage.
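The 40-word guideline in the list above can be checked roughly during editing. The sketch below is a simple heuristic, not a definitive test: it treats the first sentence of a section as the direct answer and checks its word count. The function names are hypothetical, and the sentence detection is naive, so abbreviations such as "e.g." will end a sentence early.

```python
import re

MAX_ANSWER_WORDS = 40  # threshold taken from the workflow list above


def first_sentence(text: str) -> str:
    """Return the text up to the first '.', '!' or '?' that ends a sentence."""
    stripped = text.strip()
    match = re.search(r"[.!?](?=\s|$)", stripped)
    return stripped if match is None else stripped[: match.end()]


def opens_with_short_answer(section_text: str, limit: int = MAX_ANSWER_WORDS) -> bool:
    """True if the section's first sentence is non-empty and within the word limit."""
    word_count = len(first_sentence(section_text).split())
    return 0 < word_count <= limit


section = (
    "Technical SEO is the practice of making websites crawlable, indexable, "
    "fast, accessible, and understandable for search engines. It matters for "
    "answer engine optimization because retrieval depends on parseable pages."
)
print(opens_with_short_answer(section))  # True
```

A check like this only measures length. Whether the opening sentence actually answers the section's question still needs editorial review.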

WREMF’s GEO audit workflow helps teams examine content, technical SEO, entity clarity, source consistency, and AI readiness at the page level. For teams producing new pages, AI-ready content briefs can turn prompt, citation, and competitor gaps into structured writing instructions.

KEY TAKEAWAY: AEO success depends on answer-first structure, technical SEO, structured data, entity clarity, trust signals, and consistent source signals working together.

Once the foundation exists, enterprise teams need a repeatable implementation process.

How to Implement an Enterprise AEO Workflow

Enterprise AEO implementation starts with prompt mapping, baseline measurement, source analysis, content remediation, technical fixes, and recurring reporting. The goal is to build a repeatable system that improves AI visibility over time.

A practical implementation process has eight steps.

1. Define priority markets and products. Start with high-value categories, revenue pages, competitor sets, and buyer questions.

2. Build prompt groups. Include definition prompts, comparison prompts, service prompts, tool prompts, pricing prompts, alternative prompts, implementation prompts, risk prompts, and local pages where relevant.

3. Capture a baseline. Measure AI visibility, brand mention frequency, citations, competitor mentions, AI engine citation frequency, Answer Share of Voice, and referral traffic.

4. Audit cited sources. Identify which third-party sources, review sites, directories, editorial links, forums, partner pages, and owned pages appear in AI responses.

5. Fix source consistency. Align brand entities, descriptions, product names, executive names, NAP details, positioning, category language, and trust signals.

6. Improve content. Create answer-first content, featured snippet blocks, content templates, comparison tables, author pages, entity-based content, and content creation workflows.

7. Strengthen technical foundations. Review technical SEO, schema optimization, schema deployment, structured data, crawlability, rendering, canonical tags, internal links, and page speed.

8. Report and iterate. Track monthly changes in prompts, citations, competitors, AI responses, referral traffic, conversions, and action completion.
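The prompt grouping, baseline, and reporting steps above can be tied together with a small data model. The sketch below is one possible shape, assuming each tracked answer has already been checked for a brand mention. The `PromptResult` fields, group names, and sample data are hypothetical and do not reflect any specific platform's schema.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptResult:
    prompt: str
    group: str  # e.g. "comparison", "pricing", "risk"
    engine: str
    brand_mentioned: bool


def visibility_by_group(results) -> dict:
    """Share of tracked answers in each prompt group that mention the brand."""
    seen = defaultdict(int)
    hits = defaultdict(int)
    for result in results:
        seen[result.group] += 1
        hits[result.group] += int(result.brand_mentioned)
    return {group: hits[group] / seen[group] for group in seen}


def visibility_change(baseline, current) -> dict:
    """Difference in visibility per group between two measurement runs."""
    before = visibility_by_group(baseline)
    after = visibility_by_group(current)
    groups = sorted(set(before) | set(after))
    return {g: after.get(g, 0.0) - before.get(g, 0.0) for g in groups}


# Hypothetical runs: the same prompts measured before and after remediation.
baseline = [
    PromptResult("best enterprise AEO platforms", "comparison", "ChatGPT", False),
    PromptResult("best enterprise AEO platforms", "comparison", "Perplexity", False),
    PromptResult("AEO platform pricing", "pricing", "ChatGPT", True),
    PromptResult("AEO platform pricing", "pricing", "Perplexity", False),
]
current = [
    PromptResult("best enterprise AEO platforms", "comparison", "ChatGPT", True),
    PromptResult("best enterprise AEO platforms", "comparison", "Perplexity", False),
    PromptResult("AEO platform pricing", "pricing", "ChatGPT", True),
    PromptResult("AEO platform pricing", "pricing", "Perplexity", False),
]
print(visibility_change(baseline, current))  # {'comparison': 0.5, 'pricing': 0.0}
```

Keeping the prompt set fixed between runs is what makes the change meaningful. If prompts are added or removed, the groups are no longer measuring the same thing.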

Search intent should guide the implementation sequence. Informational prompts need clear definitions. Commercial prompts need comparison content. Decision prompts need proof, pricing, use cases, and objections. Risk prompts need honest limitations. Implementation prompts need workflows, checklists, integrations, and operational guidance.

AI systems do not only evaluate one page. They may synthesize owned pages, third-party mentions, editorial links, community seeding, review sites, business profiles, documentation, support content, and Knowledge Graphs. This is why brand authority and brand reputation are part of AEO, even when the work begins with content.

For technical teams, WREMF supports API and MCP integrations so AI visibility data can connect with dashboards, CRM systems, reporting pipelines, content operations, and agentic workflows.

TIP: Do not start by tracking thousands of generic prompts. Start with high-intent prompts that reflect real buyer questions and expand once the workflow is stable.

KEY TAKEAWAY: Enterprise AEO implementation works best when teams start with a focused prompt baseline, improve cited sources and content, then report changes consistently.

After the workflow is defined, the next decision is whether software, agency support, or a hybrid model fits the organization.

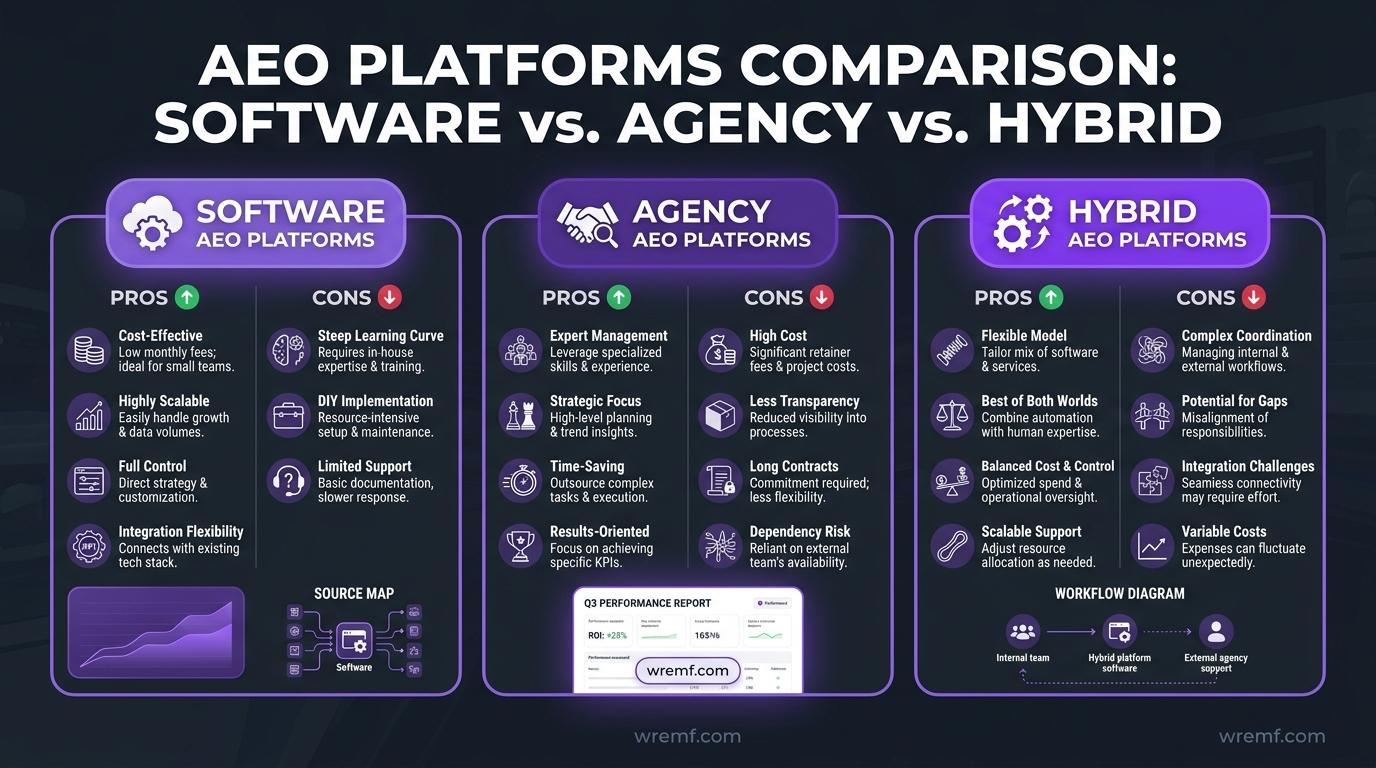

Software vs Agency vs Hybrid AEO Platforms

Software is best when the team has internal execution capacity, agency support is best when the team needs strategy and implementation, and a hybrid model is best when enterprise teams need both measurement and managed execution.

In-house brands often need software to track AI visibility, monitor search prompts, compare competitors, and report to leadership. Software gives teams control, repeatability, and scalable monitoring across websites, markets, products, and business units.

Agencies often need white-label reporting, multi-client dashboards, scheduled monitoring, client portals, and repeatable content gap remediation. Agencies managing multiple clients often need AEO tracking tools that reduce manual work and make client reporting easier.

A hybrid model combines software with managed execution. Hybrid support is useful when the team can monitor AI visibility but lacks time for schema optimization, content creation, Digital PR, entity cleanup, editorial links, Knowledge Graphs, brand reputation improvements, or technical SEO fixes.

| Model | Best For | What It Includes | Main Limitation | Recommended When |
|---|---|---|---|---|
| Software platform | In-house SEO, content, and growth teams | AI visibility tracking, prompt tracking, citation tracking, competitor visibility, reporting | Requires internal execution | You already have writers, SEO owners, and technical resources |
| Agency service | Teams that need hands-on implementation | Strategy, audits, content optimization, technical guidance, source consistency cleanup, reporting | Less self-serve control than software alone | You need senior-led execution and clear deliverables |
| Hybrid software plus service | Enterprise teams and agencies needing speed | Platform workflows plus managed AEO, GEO, content, and authority work | Requires alignment between internal and external teams | You need measurement, reporting, and implementation support together |

WREMF supports all three paths. Brands can use the software platform, agencies can use white-label AI visibility reporting for agencies, and teams that need execution can work with the WREMF agency team for managed answer engine optimization, Generative Engine Optimization, content, authority, and source consistency work.

For teams comparing cost, WREMF pricing includes Starter at €39 per month for 1 website, Growth at €89 per month for 5 websites, and Enterprise with custom pricing for unlimited websites and advanced support. Pricing is most relevant when website count, seats, support level, white-label reporting, BYOK, content briefs, and SEO testing are part of the buying decision.

KEY TAKEAWAY: The right AEO operating model depends on whether the organization needs measurement, execution, or both.

The next step is choosing the platform that fits your goals, integrations, and reporting needs.

How to Choose the Best Enterprise AEO Platform

The best enterprise AEO platform is the one that matches your engines, prompts, reporting needs, execution capacity, integrations, and governance requirements. The right choice should reduce uncertainty and create repeatable improvement cycles.

Before comparing vendors, define the use case. A B2B SaaS company may need AI visibility tracking across buying prompts. A marketplace may need local pages, schema implementation, structured comparison pages, and referral traffic tracking. A GEO agency may need white-label reports, client portals, and multi-client scheduling.

Enterprise answer engine optimization platforms should be evaluated by practical fit, not only by feature volume. A platform that tracks 3 AI search engines may be enough for a small brand, but an enterprise team may need broader engine coverage, regional prompt sets, competitor analysis, source citations, governance, and integration support.

Use this evaluation table when choosing enterprise answer engine optimization platforms.

| Evaluation Area | What to Check | Why It Matters |
|---|---|---|
| Engine coverage | ChatGPT, Claude, Gemini, Perplexity, Google AI Overviews, Copilot, DeepSeek, Grok, Meta AI, Mistral | AI visibility varies by engine |
| Prompt intelligence | Prompt grouping by funnel stage, topic, persona, market, and search intent | Better prompts create better measurement |
| Citation tracking | Source URLs, citation frequency, missing sources, source quality, AI-driven citations | Citations shape AI responses |
| Competitor visibility | Competitor mentions, recommendations, AI answer mentions, Answer Share of Voice | Enterprises need relative visibility |
| Technical diagnostics | Crawl, render, schema markup, structured data, internal linking, entity clarity | AEO depends on discoverable content |
| Content workflow | Content briefs, answer-first structure, topical authority development, content creation support | Measurement must lead to action |
| Reporting | Dashboards, white-label reports, sample reports, leadership views, client portals | Enterprise adoption depends on communication |
| Integrations | API, MCP, CRM integration, analytics, Looker Studio, marketing automation | AI visibility data should fit existing workflows |
| Governance | Seats, permissions, client portals, data separation, BYOK | Enterprise teams need control |
| Service support | Agency execution, audits, strategy, source cleanup, Digital PR | Some teams need more than software |

Peec AI, Profound, Rankscale, SE Visible, AEO Vision, AEO Search Grader, and other AEO tracking tools may fit specific workflows. Enterprise teams should evaluate each option against engine coverage, citation depth, content workflow, reporting, integrations, and execution support rather than choosing based on category buzz.
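One way to make that comparison repeatable is a weighted scorecard. The sketch below is a minimal example, not a recommended methodology: the weights are hypothetical placeholders that each team should replace with its own priorities, and the 1 to 5 ratings are assumed to come from the team's own vendor review.

```python
# Hypothetical weights per evaluation area; they should sum to 1.0.
WEIGHTS = {
    "engine_coverage": 0.20,
    "citation_depth": 0.20,
    "content_workflow": 0.15,
    "reporting": 0.15,
    "integrations": 0.15,
    "execution_support": 0.15,
}


def weighted_score(ratings):
    """Combine 1-5 ratings into one weighted score on the same 1-5 scale."""
    missing = sorted(set(WEIGHTS) - set(ratings))
    if missing:
        raise ValueError(f"missing ratings for: {missing}")
    for area in WEIGHTS:
        if not 1 <= ratings[area] <= 5:
            raise ValueError(f"rating for {area} must be between 1 and 5")
    return round(sum(WEIGHTS[area] * ratings[area] for area in WEIGHTS), 2)


if __name__ == "__main__":
    # Placeholder ratings for an unnamed vendor, for illustration only.
    example = {
        "engine_coverage": 5,
        "citation_depth": 2,
        "content_workflow": 3,
        "reporting": 3,
        "integrations": 3,
        "execution_support": 3,
    }
    print(weighted_score(example))
```

A single number hides trade-offs, so it is worth keeping the per-area ratings next to the total when presenting a shortlist.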

For example, a brand with a strong internal SEO team may prioritize AI visibility tracking, prompt intelligence, and source citation data. An agency may prioritize white-label reporting, client portals, repeatable templates, and multi-client governance. A technical team may prioritize APIs, MCP, BYOK, and integration with existing dashboards.

WREMF is designed for teams that want AI visibility tracking across 10 engines, BYOK support, white-label reports, prompt intelligence, source citations, competitive analysis, content briefs, and optional managed execution. Buyers can compare packages on WREMF pricing when budget, website count, seats, and support level are part of the decision.

KEY TAKEAWAY: The best enterprise AEO platform connects engine coverage, citation tracking, content action, integrations, governance, and reporting to your operating model.

A platform decision is only useful if the team also understands the limitations of AI visibility measurement.

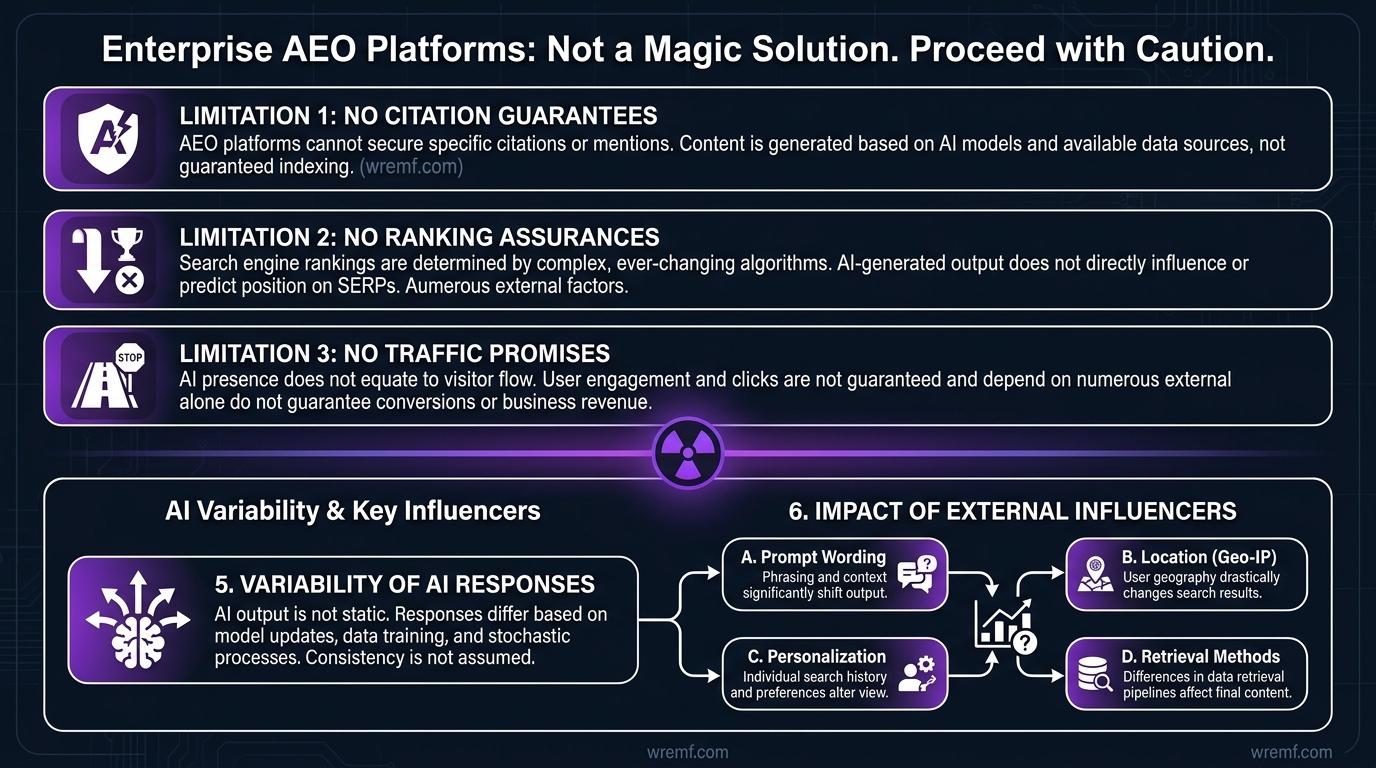

Limitations and Risks of Enterprise AEO Platforms

Enterprise AEO platforms can measure and improve AI visibility signals, but they cannot guarantee citations, rankings, traffic, or revenue. AI responses change by engine, prompt wording, location, personalization, retrieval method, and time.

AI responses are generated outputs that may combine retrieved sources, model knowledge, summaries, and reasoning patterns. AI responses matter for marketers because they can influence buyer perception, but they are not static search results.

AI systems can behave inconsistently. The same prompt may produce different citations across ChatGPT, Claude, Gemini, Perplexity, Copilot, and Google AI Overviews. Some AI interfaces show citations clearly, while others may summarize information without a traditional link path.

Anthropic’s official Claude web search documentation states that Claude web search gives access to real-time web content and includes citations for sources drawn from search results. That reinforces the need to measure both AI responses and the sources behind those responses.
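To put a number on how much engines disagree for the same prompt, one option is to compare the sets of cited domains with Jaccard similarity. The sketch below assumes the cited URLs have already been collected per engine; the function names and the input shape are hypothetical and do not describe any vendor's implementation.

```python
from itertools import combinations
from urllib.parse import urlsplit


def cited_domains(urls):
    """Normalize cited URLs to lowercase hostnames without a leading 'www.'."""
    domains = set()
    for url in urls:
        host = (urlsplit(url).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        if host:
            domains.add(host)
    return domains


def jaccard(a, b):
    """Jaccard similarity: size of intersection over size of union."""
    if not a and not b:
        return 1.0  # two empty citation sets are treated as identical
    return len(a & b) / len(a | b)


def pairwise_overlap(citations_by_engine):
    """Return {(engine_a, engine_b): similarity} for every pair of engines."""
    domains = {e: cited_domains(u) for e, u in citations_by_engine.items()}
    return {
        (a, b): jaccard(domains[a], domains[b])
        for a, b in combinations(sorted(domains), 2)
    }


if __name__ == "__main__":
    # Placeholder data: one prompt, citations captured from two engines.
    sample = {
        "engine_a": ["https://www.example.com/a", "https://docs.example.org/x"],
        "engine_b": ["https://example.com/b"],
    }
    print(pairwise_overlap(sample))
```

Low overlap for a high-value prompt is a signal to review which sources each engine relies on, rather than treating one engine's citations as representative.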

Enterprise teams should watch for these risks:

- Treating screenshots as a measurement system

- Optimizing only owned pages while ignoring third-party sources

- Assuming structured data guarantees AI citations

- Ignoring technical SEO because AEO sounds like a content-only discipline

- Measuring generic prompts instead of buyer prompts

- Reporting AI visibility without competitor context

- Confusing referral traffic with total AI influence

- Treating one AI assistant as representative of all AI search engines

- Overlooking AI interfaces that summarize information without sending traffic

- Forgetting that brand entities may be described differently across sources

Hallucination management is also part of enterprise AEO. AI systems may misstate a product feature, confuse similar companies, cite outdated sources, or summarize an incomplete description. The goal is not to control every answer. The goal is to make accurate, consistent, source-backed brand information easier to find, retrieve, and cite.

IMPORTANT: AEO platforms should inform decisions, not replace editorial judgment, legal review, brand governance, or source verification.

KEY TAKEAWAY: Enterprise AEO platforms reduce uncertainty, but AI visibility still requires careful measurement, realistic expectations, source validation, and repeated review.

These limitations explain why many teams misunderstand what answer engine optimization can and cannot do.

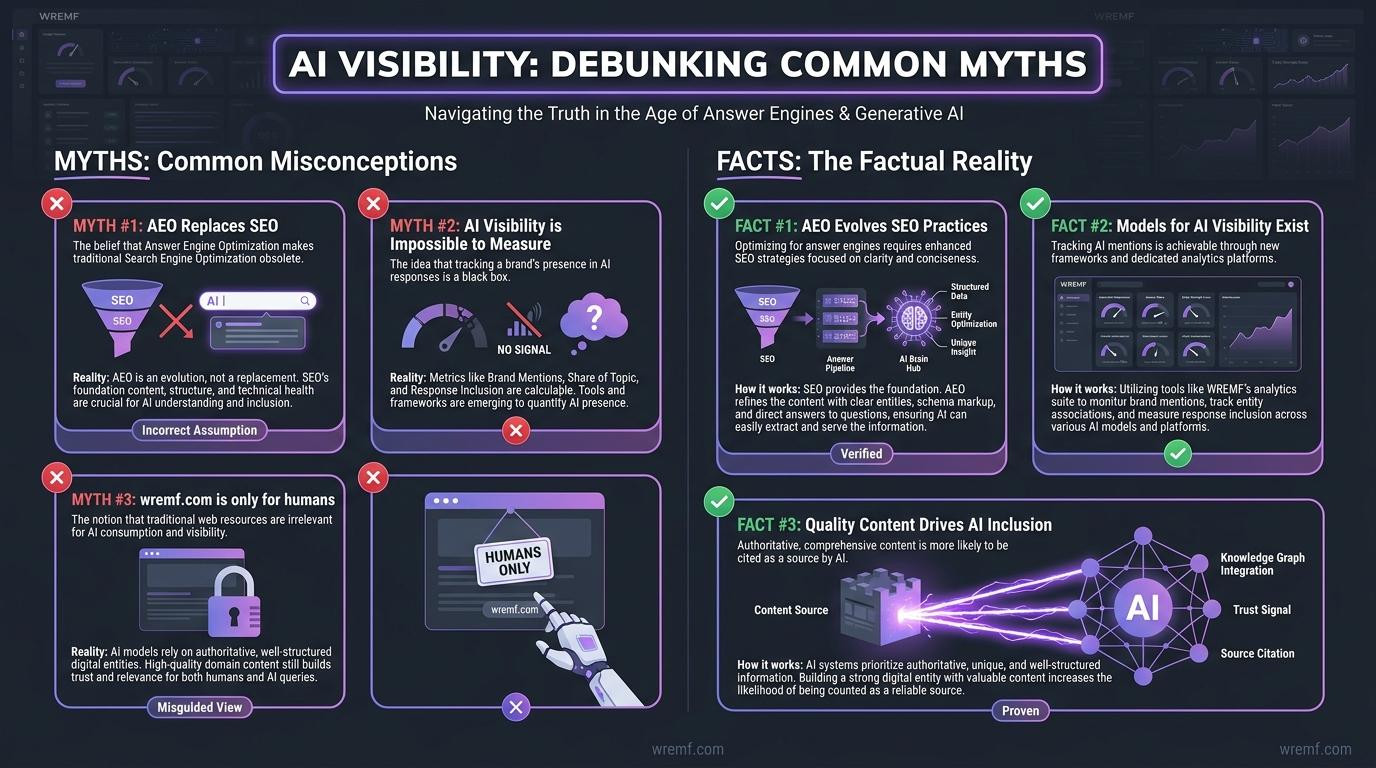

Common Myths About AI Visibility Debunked

AI visibility is measurable, but it is not the same as traditional ranking visibility. The biggest myths come from treating AI answers like fixed search results or treating AEO as a replacement for SEO.

MYTH: AEO replaces SEO in 2026.

FACT: AEO does not replace SEO. Technical SEO, crawlability, content quality, structured data, internal links, link building, and authority signals still support AI visibility because answer engines and generative engines often depend on web-accessible content.

MYTH: AI visibility is impossible to measure.

FACT: AI visibility can be measured through prompt tracking, brand mention frequency, citation tracking, competitor visibility, Answer Share of Voice, AI engine citation frequency, and referral traffic. Measurement is imperfect because AI responses vary, but imperfect measurement is still better than manual guessing.
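A minimal sketch of how three of these metrics could be computed from a log of captured answers follows. The record shape and the metric definitions are assumptions made for illustration, since tools define these metrics differently: brand mention frequency is taken as the share of answers that mention the brand, prompt coverage as the share of tracked prompts where the brand appears at least once, and Answer Share of Voice as a brand's share of all tracked-brand mentions.

```python
from collections import Counter

# Placeholder records: one entry per captured AI answer.
SAMPLE_ANSWERS = [
    {"prompt": "best aeo platform", "engine": "engine_a", "brands": ["BrandA", "BrandB"]},
    {"prompt": "best aeo platform", "engine": "engine_b", "brands": ["BrandB"]},
    {"prompt": "aeo tools for agencies", "engine": "engine_a", "brands": []},
    {"prompt": "aeo tools for agencies", "engine": "engine_b", "brands": ["BrandA"]},
]


def mention_frequency(answers, brand):
    """Share of captured answers that mention the brand."""
    if not answers:
        return 0.0
    return sum(1 for a in answers if brand in a["brands"]) / len(answers)


def prompt_coverage(answers, brand):
    """Share of distinct prompts where the brand appears in at least one answer."""
    prompts = {a["prompt"] for a in answers}
    if not prompts:
        return 0.0
    covered = {a["prompt"] for a in answers if brand in a["brands"]}
    return len(covered) / len(prompts)


def answer_share_of_voice(answers, tracked_brands):
    """Each tracked brand's share of all tracked-brand mentions."""
    counts = Counter(
        b for a in answers for b in set(a["brands"]) if b in tracked_brands
    )
    total = sum(counts.values())
    return {b: (counts[b] / total if total else 0.0) for b in tracked_brands}


if __name__ == "__main__":
    print(mention_frequency(SAMPLE_ANSWERS, "BrandA"))
    print(prompt_coverage(SAMPLE_ANSWERS, "BrandB"))
    print(answer_share_of_voice(SAMPLE_ANSWERS, ["BrandA", "BrandB"]))
```

Because AI responses vary between runs, these ratios are more useful as trends over repeated captures than as single readings.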

MYTH: Google rankings are enough to win AI search.

FACT: Google rankings matter, but Google rankings do not show whether ChatGPT, Claude, Gemini, Perplexity, Copilot, or Google AI Overviews mention, cite, compare, or recommend a brand. Enterprise teams need both traditional metrics and AI visibility metrics.

MYTH: Schema markup guarantees inclusion in answer engines.

FACT: Schema markup helps search engines understand content, but schema markup does not guarantee a featured snippet, AI citation, ranking, or AI recommendation. Structured data is one clarity signal inside a broader system of content quality, source authority, entity clarity, and trust signals.

MYTH: AEO is only content creation.

FACT: Content creation matters, but answer engine optimization also includes technical SEO, structured data, source consistency, Digital PR, entity optimization, brand authority, brand reputation, trust signals, and citation tracking. In practical AI visibility audits, source inconsistency is often as important as missing content.

KEY TAKEAWAY: AI visibility is measurable and improvable, but it works best when SEO, AEO, GEO, content strategy, technical SEO, and source authority are treated as one system.

The final practical question is how WREMF turns the strategy into a measurable enterprise workflow.

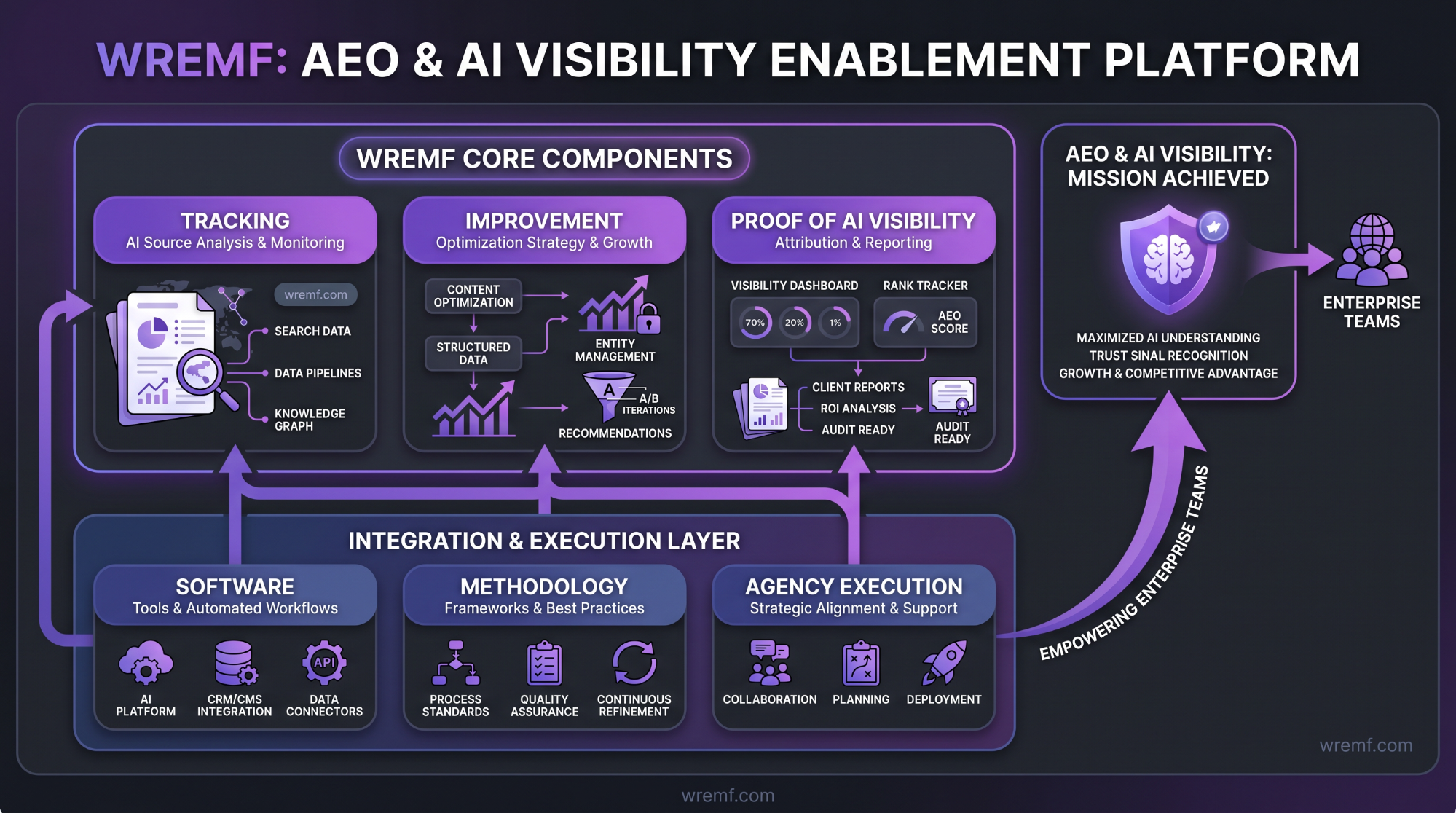

How WREMF Helps Enterprise Teams With AEO and AI Visibility

WREMF helps enterprise teams track, improve, and prove AI visibility across major answer engines. WREMF combines software, methodology, and optional agency execution for teams that need measurement and action.

WREMF is built around the practical questions enterprise teams ask after discovering answer engine optimization:

- Which prompts make our brand appear in AI responses?

- Which prompts make competitors appear instead?

- Which answer engines mention our brand most often?

- Which AI search engines cite our owned pages?

- Which sources are AI systems citing?

- Which third-party sources describe our brand incorrectly?

- Which technical SEO or structured data issues reduce AI readiness?

- Which content briefs should the team create first?

- Which competitors are gaining Answer Share of Voice?

- Which recommendations should content, SEO, PR, and product marketing teams own?

- How can leadership see progress without reading hundreds of AI answers?

WREMF combines prompt intelligence, source citation tracking, competitive landscape analysis, GEO audits, content briefs, AI visibility scoring, scheduled monitoring, client portals, BYOK support, white-label reports, and AI traffic attribution.

The WREMF software model is useful for teams that want dashboards, prompt tracking, citation tracking, competitor visibility, and recurring reporting. The WREMF agency model is useful for teams that need execution across AEO strategy, content optimisation, source consistency cleanup, schema guidance, internal linking logic, Digital PR, and monthly reporting. The hybrid model is useful when teams want the platform plus managed implementation.

WREMF is useful for B2B brands that want software, agencies that need white-label reporting, and teams that want managed execution. It does not claim to guarantee citations, rankings, traffic, or revenue. It helps teams make AI visibility measurable, actionable, and easier to report.

KEY TAKEAWAY: WREMF turns enterprise AEO from scattered testing into a measurable workflow across prompts, citations, competitors, content, source consistency, and reporting.

Enterprise buyers usually ask several practical questions before choosing an AEO platform, service, or hybrid model.

Frequently Asked Questions

What are enterprise answer engine optimization platforms?

Enterprise answer engine optimization platforms are tools that help large organizations track, improve, and report how their brand appears in AI-generated answers. They measure AI visibility, prompt coverage, brand mentions, source citations, competitor visibility, Answer Share of Voice, and referral traffic from AI systems. These platforms are useful for SEO teams, content teams, agencies, product marketing teams, and growth leaders that need repeatable monitoring across ChatGPT, Claude, Gemini, Perplexity, Google AI Overviews, Copilot, and other answer engines.

Is answer engine optimization the same as SEO?

Answer engine optimization is not the same as SEO, but it depends on SEO foundations. SEO focuses on search engines, crawlability, indexation, Google rankings, organic clicks, backlinks, and technical SEO. Answer engine optimization focuses on answer readiness, AI responses, featured snippet style answers, brand mention frequency, source citations, structured data, trust signals, and entity clarity. The strongest enterprise strategy uses SEO, AEO, and Generative Engine Optimization together instead of treating them as separate programs.

What is the difference between AEO and Generative Engine Optimization?

AEO focuses on making content easier for answer engines to extract, summarize, and present as direct answers. Generative Engine Optimization focuses on improving visibility inside generative engines such as ChatGPT, Claude, Gemini, Perplexity, Copilot, and Google AI Overviews. In practice, AEO and Generative Engine Optimization overlap because both rely on answer-first structure, technical SEO, structured data, citation tracking, entity optimization, brand authority, and clear content strategy.

What features should an enterprise AEO platform have in 2026?

An enterprise AEO platform should include AI visibility tracking, prompt intelligence, citation tracking, competitor visibility, source consistency analysis, technical SEO diagnostics, content briefs, AI traffic attribution, scheduled monitoring, governance controls, reporting dashboards, and integrations. Enterprise teams should also look for BYOK support, API access, MCP workflows, white-label reporting, client portals, and agency execution options. The best platform depends on engine coverage, reporting needs, internal execution capacity, and the importance of managed support.

How do enterprise AEO platforms improve search results for businesses?

Enterprise AEO platforms improve search outcomes by showing where a brand is absent, misrepresented, weakly cited, or outranked by competitors inside AI answers. The platform identifies prompt gaps, source citation gaps, content gaps, technical SEO issues, schema implementation problems, and source consistency risks. The business can then improve answer-first content, strengthen brand entities, update third-party sources, build trust signals, and monitor whether AI responses and referral traffic change over time.

Do we need separate AEO content, or can we adapt existing pages?

Most enterprise teams can adapt existing pages first, then create separate AEO content only where gaps remain. A strong existing page can be improved with answer-first structure, clearer headings, featured snippet blocks, FAQs, structured data, internal links, entity relationships, author pages, and stronger source attribution. Separate content may be needed for comparison prompts, implementation prompts, local pages, glossary topics, product alternatives, or questions that existing pages do not answer directly.

Does structured data guarantee inclusion in answer engines?

Structured data does not guarantee inclusion in answer engines, AI Overviews, featured snippets, or AI citations. Structured data helps search engines understand page content and entities, but AI visibility also depends on content quality, crawlability, source authority, brand entities, trust signals, citation availability, and query relevance. Schema implementation should be treated as one technical SEO and entity clarity layer inside a broader answer engine optimization strategy.
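For teams that want to see what a basic entity clarity layer looks like, the sketch below builds a schema.org `Organization` object as JSON-LD and wraps it in a script tag. The company name and URLs are placeholders, the helper names are hypothetical, and the output should be validated before deployment. As the answer above says, publishing this markup does not guarantee any citation or inclusion.

```python
import json


def organization_jsonld(name, url, same_as):
    """Build a schema.org Organization object as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # Profiles on other sites that describe the same entity.
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2)


def script_tag(jsonld):
    """Wrap a JSON-LD string in the script element used to embed it in HTML."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


if __name__ == "__main__":
    # Placeholder values for illustration only.
    markup = organization_jsonld(
        "Example Co",
        "https://example.com",
        ["https://www.linkedin.com/company/example"],
    )
    print(script_tag(markup))
```

Keeping the `name`, `url`, and `sameAs` values consistent with how third-party sources describe the brand supports the source consistency work discussed elsewhere in this guide.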

Which industries benefit most from enterprise answer engine optimization platforms?

B2B SaaS, cybersecurity, fintech, healthcare technology, legal technology, HR software, ecommerce platforms, agencies, marketplaces, local networks, and professional services can benefit from enterprise answer engine optimization platforms. These industries often have complex buying journeys, high-value comparison searches, long sales cycles, and many competitor alternatives. AEO platforms are especially useful when buyers ask AI assistants for vendor shortlists, product comparisons, implementation risks, pricing context, and category recommendations.

What metrics should enterprises track to measure AEO success?

Enterprises should track AI visibility, brand mention frequency, prompt coverage, source citations, AI engine citation frequency, competitor mentions, Answer Share of Voice, source consistency, referral traffic, conversions, and completed recommendations. Traditional metrics such as Google rankings, organic clicks, backlinks, and technical SEO health should also remain part of reporting. The goal is to connect AI search visibility with content action, competitor movement, and business outcomes without claiming guaranteed traffic or revenue.
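Referral traffic from AI systems is usually identified by the referrer hostname in analytics data. The sketch below shows one simple way to label sessions by assistant. The hostname map is an illustrative assumption, not a complete or authoritative list, and should be checked against the referrers that actually appear in your own analytics.

```python
from collections import Counter
from urllib.parse import urlsplit

# Illustrative hostname-to-assistant map (assumption: verify against real data).
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
    "claude.ai": "Claude",
}


def classify_referrer(referrer_url):
    """Return the assistant label for a referrer URL, or None if not recognized."""
    host = (urlsplit(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


def ai_sessions_by_assistant(referrers):
    """Count sessions per assistant, ignoring unrecognized referrers."""
    labels = (classify_referrer(r) for r in referrers)
    return Counter(label for label in labels if label)


if __name__ == "__main__":
    # Placeholder referrer values for illustration only.
    sample = [
        "https://chatgpt.com/",
        "https://www.perplexity.ai/search?q=example",
        "https://www.google.com/",
        "",
    ]
    print(ai_sessions_by_assistant(sample))
```

Counts produced this way only cover visits that arrive with a recognizable referrer, so they understate total AI influence when an assistant summarizes information without sending traffic.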

How should agencies approach answer engine optimization for clients?

Agencies should approach answer engine optimization with a repeatable audit, measurement, execution, and reporting workflow. The agency should define prompt sets, capture AI visibility baselines, identify cited sources, compare competitors, audit technical SEO, create answer-first content briefs, fix source consistency, and report changes monthly. Agencies also need white-label reporting, client portals, clear deliverables, and governance. WREMF supports agencies with multi-client workflows and reporting for AI visibility programs.

Is WREMF an AEO platform, an agency service, or both?

WREMF can be used as software, an agency service, or a combined software plus managed execution solution. The platform helps teams track AI visibility, prompt intelligence, source citations, competitor visibility, Answer Share of Voice, AI traffic attribution, GEO audits, content briefs, SEO testing, and reporting. For teams that need implementation support, WREMF also offers managed AEO, GEO, content optimisation, source consistency cleanup, and technical AI visibility services.

How can enterprises troubleshoot common AEO platform issues?

Enterprises can troubleshoot AEO platform issues by checking prompt quality, engine coverage, source freshness, competitor definitions, crawlability, schema validation, analytics tagging, and reporting cadence. If AI visibility data looks inconsistent, review whether prompts are too broad, too few engines are tracked, or AI responses are being compared without stable criteria. If recommendations are not improving results, check whether content, technical SEO, Digital PR, and source consistency actions are actually being implemented.

Conclusion

Enterprise answer engine optimization platforms help B2B teams understand how brands appear across AI search, answer engines, citations, recommendations, and competitor comparisons. The core lesson is simple: AI visibility depends on prompts, source citations, technical SEO, structured data, content strategy, trust signals, brand entities, and repeatable reporting. Rankings still matter, but rankings alone cannot show how AI systems describe or recommend a brand. To turn enterprise answer engine optimization platforms from research into an operating workflow, explore the WREMF platform suite or talk to the WREMF agency team.

Related Generative Engine Optimization Guides

- Answer Engine Optimization The Complete Guide to AEO, AI Search Visibility, and Answer-First Content

- AI Overview Optimization How to Rank, Get Cited, and Stay Visible in Google AI Search

- AI SEO Agency How to Choose the Right Partner for AI Search Visibility

- LLM SEO Agency The Complete Guide to Choosing an Agency for AI Search Visibility

- Generative AI Optimization Services The Complete Guide to GEO, AEO, LLM Optimization, and AI Visibility

- AI SEO Tools The Complete Guide for SEO, AEO, GEO, and AI Search Visibility

- LLM SEO Services The Complete 2026 Guide to AI Search Visibility, AEO, GEO, and LLM Optimization

- Large Language Model Optimization Services The Complete Guide to LLMO, AI Search Visibility, AEO, GEO, RAG, and LLM Performance

- Best Answer Engine Optimization for Enhancing AI Visibility

- Answer Engine Optimization Services The Complete Guide to AI Search Visibility

- AI Brand Monitoring The Complete Guide to Tracking Brand Visibility Across AI Search, LLMs, and Generative Engines

- AI Mention Tracking The Complete Guide to Monitoring Brand Mentions, AI Answers, Citations, and Share of Voice in 2026

- AI Search Engine Optimization Services The Complete Guide for B2B Brands

- AI SEO Services The Complete Guide to Search Visibility in the AI Era

- AI Overview SEO How to Optimize for Google AI Overviews, AI Mode, and AI Search Visibility

Entities Covered

- Answer Engine Optimization

- Generative Engine Optimization

- AI Visibility

- Citation Tracking

- Answer Share of Voice

- AI Traffic Attribution

- Prompt Intelligence

- Source Citations

- AI Search Engines

- Structured Data

- Schema Markup

- Knowledge Graphs

- Brand Entities

- Technical SEO

- Answer-First Content

Mentions

Brands mentioned

- WREMF

- OpenAI

- Microsoft

- Anthropic

- Perplexity

- ChatGPT

- Claude

- Gemini

- Copilot

- DeepSeek

- Grok

- Meta AI

- Mistral

- Peec AI

- Profound

- Rankscale

- SE Visible

- AEO Vision

- AEO Search Grader

- HubSpot

Tools mentioned

- ChatGPT

- Claude

- Gemini

- Perplexity

- Google AI Overviews

- Copilot

- DeepSeek

- Grok

- Meta AI

- Mistral

- Google Search Console

- Looker Studio

- HubSpot Marketing Hub

- Peec AI

- Profound

- Rankscale

- SE Visible

- AEO Vision

- AEO Search Grader

Sources

- https://wremf.com/suite

- https://developers.google.com/search/docs/appearance/ai-features

- https://learn.microsoft.com/en-us/copilot/overview

- https://developers.google.com/search/docs/fundamentals/creating-helpful-content

- https://wremf.com/methodology

- https://wremf.com/sample-report

- https://developers.google.com/search/docs/appearance/structured-data/intro-structured-data

- https://wremf.com/features/geo-audit

- https://wremf.com/features/content-briefs

- https://wremf.com/api

- https://wremf.com/for/agencies

- https://wremf.com/agency

- https://wremf.com/pricing

- https://platform.claude.com/docs/en/agents-and-tools/tool-use/web-search-tool

- https://wremf.com/suite/prompt-intelligence

- https://wremf.com/suite/source-citations

- https://wremf.com/suite/competitive-landscape

Frequently Asked Questions

What are enterprise answer engine optimization platforms?

Enterprise answer engine optimization platforms are tools that help large organizations track, improve, and report how their brand appears in AI-generated answers. They measure AI visibility, prompt coverage, brand mentions, source citations, competitor visibility, Answer Share of Voice, and referral traffic from AI systems. These platforms are useful for SEO teams, content teams, agencies, product marketing teams, and growth leaders that need repeatable monitoring across ChatGPT, Claude, Gemini, Perplexity, Google AI Overviews, Copilot, and other answer engines.

Is answer engine optimization the same as SEO?

Answer engine optimization is not the same as SEO, but it depends on SEO foundations. SEO focuses on search engines, crawlability, indexation, Google rankings, organic clicks, backlinks, and technical SEO. Answer engine optimization focuses on answer readiness, AI responses, featured snippet style answers, brand mention frequency, source citations, structured data, trust signals, and entity clarity. The strongest enterprise strategy uses SEO, AEO, and Generative Engine Optimization together instead of treating them as separate programs.

What is the difference between AEO and Generative Engine Optimization?

AEO focuses on making content easier for answer engines to extract, summarize, and present as direct answers. Generative Engine Optimization focuses on improving visibility inside generative engines such as ChatGPT, Claude, Gemini, Perplexity, Copilot, and Google AI Overviews. In practice, AEO and Generative Engine Optimization overlap because both rely on answer-first structure, technical SEO, structured data, citation tracking, entity optimization, brand authority, and clear content strategy.

What features should an enterprise AEO platform have in 2026?

An enterprise AEO platform should include AI visibility tracking, prompt intelligence, citation tracking, competitor visibility, source consistency analysis, technical SEO diagnostics, content briefs, AI traffic attribution, scheduled monitoring, governance controls, reporting dashboards, and integrations. Enterprise teams should also look for BYOK support, API access, MCP workflows, white-label reporting, client portals, and agency execution options. The best platform depends on engine coverage, reporting needs, internal execution capacity, and the importance of managed support.

How do enterprise AEO platforms improve search results for businesses?

Enterprise AEO platforms improve search outcomes by showing where a brand is absent, misrepresented, weakly cited, or outranked by competitors inside AI answers. The platform identifies prompt gaps, source citation gaps, content gaps, technical SEO issues, schema implementation problems, and source consistency risks. The business can then improve answer-first content, strengthen brand entities, update third-party sources, build trust signals, and monitor whether AI responses and referral traffic change over time.

Do we need separate AEO content, or can we adapt existing pages?

Most enterprise teams can adapt existing pages first, then create separate AEO content only where gaps remain. A strong existing page can be improved with answer-first structure, clearer headings, featured snippet blocks, FAQs, structured data, internal links, entity relationships, author pages, and stronger source attribution. Separate content may be needed for comparison prompts, implementation prompts, local pages, glossary topics, product alternatives, or questions that existing pages do not answer directly.

Does structured data guarantee inclusion in answer engines?

Structured data does not guarantee inclusion in answer engines, AI Overviews, featured snippets, or AI citations. Structured data helps search engines understand page content and entities, but AI visibility also depends on content quality, crawlability, source authority, brand entities, trust signals, citation availability, and query relevance. Schema implementation should be treated as one technical SEO and entity clarity layer inside a broader answer engine optimization strategy.

Which industries benefit most from enterprise answer engine optimization platforms?

B2B SaaS, cybersecurity, fintech, healthcare technology, legal technology, HR software, ecommerce platforms, agencies, marketplaces, local networks, and professional services can benefit from enterprise answer engine optimization platforms. These industries often have complex buying journeys, high-value comparison searches, long sales cycles, and many competitor alternatives. AEO platforms are especially useful when buyers ask AI assistants for vendor shortlists, product comparisons, implementation risks, pricing context, and category recommendations.

What metrics should enterprises track to measure AEO success?

Enterprises should track AI visibility, brand mention frequency, prompt coverage, source citations, AI engine citation frequency, competitor mentions, Answer Share of Voice, source consistency, referral traffic, conversions, and the number of platform recommendations the team has completed. Traditional metrics such as Google rankings, organic clicks, backlinks, and technical SEO health should also remain part of reporting. The goal is to connect AI search visibility with content action, competitor movement, and business outcomes without claiming guaranteed traffic or revenue.
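Two of these metrics can be sketched in a few lines of Python. The definitions here are assumptions for illustration: prompt coverage is taken as the share of tracked prompts where the brand appears in at least one answer, and Answer Share of Voice as the brand's mentions divided by all brand mentions across tracked answers. The record layout and the brand names `BrandA` and `BrandB` are hypothetical, and a real platform may define these metrics differently.

```python
from collections import Counter

# Hypothetical tracking records: one per (prompt, engine) answer.
answers = [
    {"prompt": "best aeo platform", "engine": "chatgpt", "brands": ["BrandA", "BrandB"]},
    {"prompt": "best aeo platform", "engine": "perplexity", "brands": ["BrandB"]},
    {"prompt": "aeo vs geo", "engine": "chatgpt", "brands": ["BrandA"]},
    {"prompt": "aeo pricing", "engine": "gemini", "brands": []},
]


def prompt_coverage(answers, brand):
    """Share of tracked prompts where the brand appears in at least one answer."""
    prompts = {a["prompt"] for a in answers}
    covered = {a["prompt"] for a in answers if brand in a["brands"]}
    return len(covered) / len(prompts) if prompts else 0.0


def answer_share_of_voice(answers, brand):
    """Brand mentions divided by all brand mentions across tracked answers."""
    mentions = Counter(b for a in answers for b in a["brands"])
    total = sum(mentions.values())
    return mentions[brand] / total if total else 0.0


print(f"Prompt coverage: {prompt_coverage(answers, 'BrandA'):.0%}")
print(f"Answer Share of Voice: {answer_share_of_voice(answers, 'BrandA'):.0%}")
```

Keeping the raw per-answer records, rather than only the aggregates, makes it possible to recompute the metrics when prompt sets or competitor definitions change.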

How should agencies approach answer engine optimization for clients?

Agencies should approach answer engine optimization with a repeatable audit, measurement, execution, and reporting workflow. The agency should define prompt sets, capture AI visibility baselines, identify cited sources, compare competitors, audit technical SEO, create answer-first content briefs, fix source consistency, and report changes monthly. Agencies also need white-label reporting, client portals, clear deliverables, and governance. WREMF supports agencies with multi-client workflows and reporting for AI visibility programs.

Is WREMF an AEO platform, an agency service, or both?

WREMF can be used as software, an agency service, or a combined software and managed execution solution. The platform helps teams track AI visibility, prompt intelligence, source citations, competitor visibility, AI share of voice, AI traffic attribution, GEO audits, content briefs, SEO testing, and reporting. For teams that need implementation support, WREMF also offers managed AEO, GEO, content optimization, source consistency cleanup, and technical AI visibility services.

How can enterprises troubleshoot common AEO platform issues?

Enterprises can troubleshoot AEO platform issues by checking prompt quality, engine coverage, source freshness, competitor definitions, crawlability, schema validation, analytics tagging, and reporting cadence. If AI visibility data looks inconsistent, review whether prompts are too broad, too few engines are tracked, or AI responses are being compared without stable criteria. If recommendations are not improving results, check whether content, technical SEO, Digital PR, and source consistency actions are actually being implemented.
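The first three checks in this answer can be approximated with a small diagnostic script. This is a rough sketch under stated assumptions: the run record layout, the function name `diagnose`, and the thresholds `min_engines` and `min_words` are hypothetical, and word count is only a crude proxy for how broad a prompt is.

```python
from collections import defaultdict


def diagnose(runs, min_engines=3, min_words=4):
    """Return (prompt, warning) pairs for prompts likely to give unstable data.

    runs: list of dicts with 'prompt', 'engine', and 'mentioned' (bool).
    """
    engines = defaultdict(set)
    outcomes = defaultdict(set)
    for run in runs:
        engines[run["prompt"]].add(run["engine"])
        outcomes[(run["prompt"], run["engine"])].add(run["mentioned"])

    warnings = []
    for prompt, tracked in engines.items():
        # Very short prompts are treated as potentially too broad.
        if len(prompt.split()) < min_words:
            warnings.append((prompt, "prompt may be too broad"))
        if len(tracked) < min_engines:
            warnings.append((prompt, "too few engines tracked"))
    for (prompt, engine), seen in outcomes.items():
        # Both True and False seen for the same prompt on the same engine.
        if len(seen) > 1:
            warnings.append((prompt, f"unstable results on {engine}"))
    return warnings


# Hypothetical sample runs for illustration only.
long_prompt = "best enterprise aeo platform for b2b saas"
runs = [
    {"prompt": "aeo tools", "engine": "chatgpt", "mentioned": True},
    {"prompt": "aeo tools", "engine": "chatgpt", "mentioned": False},
    {"prompt": long_prompt, "engine": "chatgpt", "mentioned": True},
    {"prompt": long_prompt, "engine": "gemini", "mentioned": True},
    {"prompt": long_prompt, "engine": "perplexity", "mentioned": True},
]

for prompt, warning in diagnose(runs):
    print(f"{prompt}: {warning}")
```

A flagged prompt is a candidate for review rather than an error; some variation between runs is expected because AI responses are not deterministic.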

Reviewed by

Rohan Singh

Cite this article

"Enterprise Answer Engine Optimization Platforms: Complete Guide for AI Visibility, AEO, and GEO" by WREMF Team, WREMF (2026). https://wremf.com/blog/enterprise-answer-engine-optimization-platforms-complete-guide-for-ai-visibility-aeo-and-geo